Friday, October 10. 2014

More Than Meets the Eye: NASA Scientists Listen to Data

Via NASA

-----

Robert Alexander spends parts of his day listening to a soft white noise, similar to water falling on the outside of a house during a rainstorm. Every once in a while, he hears an anomalous sound and marks the corresponding time in the audio file. Alexander is listening to the sun’s magnetic field and marking potential areas of interest. After only ten minutes, he has listened to one month’s worth of data.

Alexander is a PhD candidate in design science at the University of Michigan. He is a sonification specialist who trains heliophysicists at NASA’s Goddard Space Flight Center in Greenbelt, Maryland, to pick out subtle differences by listening to satellite data instead of looking at it.

Sonification is the process of displaying any type of data or measurement as sound, such as the beep from a heart rate monitor measuring a person’s pulse, a doorbell ringing every time a person enters a room, or, in this case, explosions indicating large events occurring on the sun. In certain cases, scientists can use their ears instead of their eyes to process data more rapidly -- and to detect more details -- than through visual analysis. A paper on the effectiveness of sonification in analyzing data from NASA satellites was published in the July issue of Journal of Geophysical Research: Space Physics.

“NASA produces a vast amount of data from its satellites. Exploring such large quantities of data can be difficult,” said Alexander. “Sonification offers a promising supplement to standard visual analysis techniques.”

LISTENING TO SPACE

Alexander's focus is on improving and quantifying the success of these techniques. The team created audio clips from the data and shared them with researchers. While the original data from the Wind satellite was not in audio file format, the satellite records electromagnetic fluctuations that can be converted directly to audio samples. Alexander and his team used custom-written computer algorithms to convert those electromagnetic frequencies into sound. Listen to the following multimedia clips to hear the sounds of space.

This clip has three distinct sections: a warble noise leading up to a short knock at a slightly higher frequency, followed by a quieter segment containing broadband noise that is both rising and hissing. This clip, gathered from NASA's Wind satellite on Nov. 20, 2007, contains a reverse shock. This type of event occurs when a fast stream of plasma – that is, the super hot, charged gas that fills space – is followed by a slower one, resulting in a shock wave that travels toward the sun.

This audio clip is the previous clip played backwards. Here, trained listeners will notice that the reverse shock event played backwards sounds similar to a forward shock event.

This clip contains audified data from the joint European Space Agency (ESA) and NASA Ulysses satellite gathered on October 26, 1995. The participant in Alexander's study was able to detect artificial noise produced from the instrument, which he did not notice in previous visual analysis. Here, the artificial noise can be heard as a drifting tone.

PROCESSING AN OVERWHELMING AMOUNT OF DATA

Alexander's focus is on using clips like these to quantify and improve sonification techniques in order to speed up access to the incredible amounts of data provided by space satellites. For example, he works with space scientist Robert Wicks at NASA Goddard to analyze the high-resolution observations of the sun. Wicks studies the constant stream of particles from our closest star, known as the solar wind – a wind that can cause space weather effects that interfere with human technology near Earth. The team uses data from NASA's Wind satellite. Launched in 1994, Wind orbits a point in between Earth and the sun, constantly observing the temperature, density, speed and the magnetic field of the solar wind as it rushes past.

Wicks analyzes changes in Wind's magnetic field data. Such data carries information about the solar wind, and a better understanding of these changes might help give forewarning of problematic space weather that can affect satellites near Earth. The Wind satellite also provides an abundance of magnetometer data points, as the satellite measures the magnetic field 11 times per second. Such incredible amounts of information are beneficial -- but only if all the data can be analyzed.

“There is a very long, accurate time series of data, which gives a fantastic view of solar wind changes and what’s going on at small scales,” said Wicks. “There's a rich diversity of physical processes going on, but it is more data than I can easily look through.”

The traditional method of processing the data involves making an educated guess about where a certain event in the solar wind -- such as subtle wave movements made by hot plasma -- might show up and then visually searching, which can be very time-consuming. Instead, Alexander listens to sped-up versions of the Wind data and compiles a list of noteworthy regions that scientists like Wicks can return to and further analyze, expediting the process.

In one example, Alexander’s team analyzed data points from the Wind satellite from November 2007, condensing three hours of real-time recording to a three-second audio clip. To an untrained ear, the data sounds like a microphone recording on a windy day. When Alexander presented these sounds to a researcher, however, the researcher could identify a distinct chirping at the beginning of the audio clip followed by a percussive event, culminating in a loud boom.
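To get a feel for what that compression means in audio terms, here is a back-of-the-envelope sketch in Python. It assumes the clip was built from the full 11-measurements-per-second magnetometer stream mentioned earlier; the article does not say which sampling rate this particular clip used, so the numbers are illustrative only, and the function name is made up for this example.

```python
# Rough arithmetic only. The 11 Hz cadence comes from the article, but
# applying it to this particular clip is an assumption.
SAMPLES_PER_SECOND = 11          # magnetometer measurements per second
RECORDED_SECONDS = 3 * 60 * 60   # three hours of real-time recording
CLIP_SECONDS = 3                 # length of the audio clip


def compression_summary(cadence_hz, recorded_s, clip_s):
    """Return (total samples, speed-up factor, implied playback rate in Hz)."""
    total_samples = cadence_hz * recorded_s
    speedup = recorded_s / clip_s
    playback_rate_hz = total_samples / clip_s
    return total_samples, speedup, playback_rate_hz


total, speedup, rate = compression_summary(
    SAMPLES_PER_SECOND, RECORDED_SECONDS, CLIP_SECONDS
)
print(f"{total} samples, played {speedup:.0f}x faster, at {rate:.0f} samples per second")
```

Under that assumption the clip holds 118,800 measurements played back 3,600 times faster than they were recorded, which works out to 39,600 samples per second, in the same range as the 44,100 samples per second used for CD audio.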

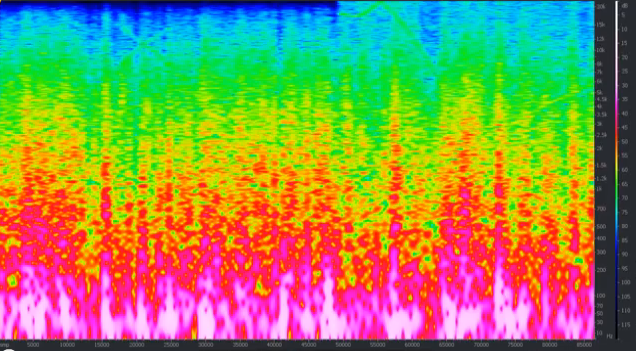

By listening only to the auditory representation of the data, the study’s participant was able to correctly predict what this would look like on a more traditional graph. He correctly deduced that the chirp would show up as a particular kind of peak on a kind of graph called a spectrogram, a graph that shows different levels of frequencies present in the waves that Wind recorded. The researcher also correctly predicted that the corresponding spectrogram representation of the percussive event would display a steep slope.
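For readers who have not met a spectrogram before, the idea can be sketched in a few lines of Python: cut the signal into short overlapping windows and measure how strong each frequency is in each window. This is a generic textbook construction using only the standard library, not the software used in the study; the window sizes, the function names and the synthetic rising tone are all assumptions made for illustration.

```python
import cmath
import math


def spectrogram(samples, window=64, hop=32):
    """Return one row of frequency magnitudes per overlapping time window."""
    rows = []
    for start in range(0, len(samples) - window + 1, hop):
        chunk = samples[start:start + window]
        # Hann taper, so that the window edges do not smear the frequencies.
        tapered = [
            x * (0.5 - 0.5 * math.cos(2 * math.pi * n / window))
            for n, x in enumerate(chunk)
        ]
        row = []
        for k in range(window // 2 + 1):
            # Plain discrete Fourier transform for frequency bin k.
            acc = sum(
                x * cmath.exp(-2j * math.pi * k * n / window)
                for n, x in enumerate(tapered)
            )
            row.append(abs(acc))
        rows.append(row)
    return rows


def chirp(n_samples, f_start, f_end):
    """Synthetic rising tone (frequencies in cycles per sample), not real data."""
    out = []
    phase = 0.0
    for n in range(n_samples):
        freq = f_start + (f_end - f_start) * n / (n_samples - 1)
        phase += 2 * math.pi * freq
        out.append(math.sin(phase))
    return out


def peak_bin(row):
    """Index of the strongest frequency bin in one spectrogram row."""
    return max(range(len(row)), key=row.__getitem__)


rows = spectrogram(chirp(512, 0.05, 0.30))
print([peak_bin(row) for row in rows])
```

The printed list gives the strongest frequency bin in each time window. For the synthetic rising tone the bin numbers climb from the first window to the last, which is the kind of feature a chirp leaves on a spectrogram.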

CONVERTING DATA INTO SOUND

Alexander translates the data into audio files through a process known as audification, a specific type of sonification that involves directly listening to raw, unedited satellite data. Translating this data into audio can be likened to part of the process of collecting sound from a person singing into a microphone at a recording studio with reel-to-reel tape. When a person sings into a microphone, it detects changes in pressure and converts the pressure signals to changes in magnetic intensity in the form of an electrical signal. The electrical signals are stored on the reel tape. Magnetometers on the Wind satellite measure changes in magnetic field directly, creating a similar kind of electrical signal. Alexander writes a computer program to translate this data to an audio file.

“The tones come out of the data naturally. If there is a frequency embedded in the data, then that frequency becomes audible as a sound,” said Alexander.
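A minimal sketch of what such a translation could look like in Python, using only the standard library, follows. It is not Alexander's program: the function names, the output file name and the synthetic test series are hypothetical, and the 11-samples-per-second cadence and 39,600-samples-per-second playback rate are assumptions carried over from the figures quoted in the article. Each measurement simply becomes one audio sample.

```python
import math
import struct
import wave


def to_pcm16(samples):
    """Center and scale a numeric series into 16-bit little-endian PCM bytes."""
    if not samples:
        raise ValueError("need at least one sample")
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]
    # Fall back to 1.0 so a perfectly flat series does not divide by zero.
    peak = max(abs(c) for c in centered) or 1.0
    return b"".join(
        struct.pack("<h", round(c / peak * 32767)) for c in centered
    )


def write_wav(samples, path, playback_rate_hz):
    """Write the series as a mono WAV file, one measurement per audio sample."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes = 16 bits per sample
        wav.setframerate(playback_rate_hz)
        wav.writeframes(to_pcm16(samples))


def synthetic_series(n_samples, cadence_hz, wave_hz):
    """Stand-in for magnetometer data: a single slow oscillation."""
    return [
        math.sin(2 * math.pi * wave_hz * n / cadence_hz)
        for n in range(n_samples)
    ]


if __name__ == "__main__":
    # Three hours at an assumed 11 samples per second, squeezed into 3 seconds.
    series = synthetic_series(118800, cadence_hz=11, wave_hz=0.1)
    write_wav(series, "audified_demo.wav", playback_rate_hz=39600)
```

In this sketch a 0.1 Hz oscillation, far too slow to hear, is played back 3,600 times faster (39,600 divided by 11) and comes out as a 360 Hz tone, which illustrates how a frequency embedded in the data becomes audible without any tone being added.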

Listening to data is not new. In a study in 1982, researchers used audification to identify micrometeoroids, or small ring particles, hitting the Voyager 2 spacecraft as it traversed Saturn's rings. The impacts were visually obscured in the data but could be easily heard – sounding like intense impulses, almost like a hailstorm.

However, the method is not often used in the science community because it requires a certain level of familiarity with the sounds. For instance, the listener needs to have an understanding of what typical solar wind turbulence sounds like in order to identify atypical events. “It’s about using your ear to pick out subtle differences,” Alexander said.

Alexander initially spent several months with Wicks teaching him how to listen to magnetometer data and highlighting certain elements. But the hard work is paying off as analysis gets faster and easier, leading to new assessments of the data.

“I’ve never listened to the data before,” said Wicks. “It has definitely opened up a different perspective.”

Kasha Patel

NASA’s Goddard Space Flight Center, Greenbelt, Md.

One in three jobs will be taken by software or robots by 2025

Via ComputerWorld

-----

Gartner sees things like robots and drones replacing a third of all workers by 2025, and whether you want to believe it or not is entirely your business.

This is Gartner being provocative, as it typically is, at the start of its major U.S. conference, the Symposium/ITxpo.

Take drones, for instance.

"One day, a drone may be your eyes and ears," said Peter Sondergaard, Gartner's research director. In five years, drones will be a standard part of operations in many industries, used in agriculture, geographical surveys and oil and gas pipeline inspections.

"Drones are just one of many kinds of emerging technologies that extend well beyond the traditional information technology world -- these are smart machines," said Sondergaard.

Smart machines are an emerging "super class" of technologies that perform a wide variety of work, both the physical and the intellectual kind, said Sondergaard. Machines, for instance, have been grading multiple choice for years, but now they are grading essays and unstructured text.

This cognitive capability in software will extend to other areas, including financial analysis, medical diagnostics and data analytics jobs of all sorts, says Gartner.

"Knowledge work will be automated," said Sondergaard, as will physical jobs with the arrival of smart robots.

"Gartner predicts one in three jobs will be converted to software, robots and smart machines by 2025," said Sondergaard. "New digital businesses require less labor; machines will make sense of data faster than humans can."

Among those listening in the audience was Lawrence Strohmaier, the CIO of Nuverra Environmental Solutions, who said Gartner's prediction is similar to what happened in other eras of technological advance.

"The shift is from doing to implementing, so the doers go away but someone still has to implement," said Strohmaier. It is a shift, although a slow one, to new types of jobs, no different than what happened in the machine age, he said.

The forecast of the impact of technology on jobs was also a warning to the CIOs and IT managers at this conference to consider how they will adapt.

"The door is open for the CIO and the IT organization to be a major player in digital leadership," said David Aron, a Gartner analyst.

CIOs have been steadily gaining authority, and 41% of CIOs now report to the CEO, a record level, said Aron. That's based on data from 2,810 CIOs globally.

To be effective leaders, Gartner argues that CIOs have shifted from being focused on measuring things like cost to being able to lead with vision and describe what their business or government agency must do to take advantage of smarter technologies.

Thursday, October 02. 2014

A Dating Site for Algorithms

-----

A startup called Algorithmia has a new twist on online matchmaking. Its website is a place for businesses with piles of data to find researchers with a dreamboat algorithm that could extract insights – and profits – from it all.

The aim is to make better use of the many algorithms that are developed in academia but then languish after being published in research papers, says cofounder Diego Oppenheimer. Many have the potential to help companies sort through and make sense of the data they collect from customers or on the Web at large. If Algorithmia makes a fruitful match, a researcher is paid a fee for the algorithm’s use, and the matchmaker takes a small cut. The site is currently in a private beta test with users including academics, students, and some businesses, but Oppenheimer says it already has some paying customers and should open to more users in a public test by the end of the year.

“Algorithms solve a problem. So when you have a collection of algorithms, you essentially have a collection of problem-solving things,” says Oppenheimer, who previously worked on data-analysis features for the Excel team at Microsoft.

Oppenheimer and cofounder Kenny Daniel, a former graduate student at USC who studied artificial intelligence, began working on the site full time late last year. The company raised $2.4 million in seed funding earlier this month from Madrona Venture Group and others, including angel investor Oren Etzioni, the CEO of the Allen Institute for Artificial Intelligence and a computer science professor at the University of Washington.

Etzioni says that many good ideas are essentially wasted in papers presented at computer science conferences and in journals. “Most of them have an algorithm and software associated with them, and the problem is very few people will find them and almost nobody will use them,” he says.

One reason is that academic papers are written for other academics, so people from industry can’t easily discover their ideas, says Etzioni. Even if a company does find an idea it likes, it takes time and money to interpret the academic write-up and turn it into something testable.

To change this, Algorithmia requires algorithms submitted to its site to use a standardized application programming interface that makes them easier to use and compare. Oppenheimer says some of the algorithms currently looking for love could be used for machine learning, extracting meaning from text, and planning routes within things like maps and video games.

Early users of the site have found algorithms to do jobs such as extracting data from receipts so they can be automatically categorized. Over time the company expects around 10 percent of users to contribute their own algorithms. Developers can decide whether they want to offer their algorithms free or set a price.

All algorithms on Algorithmia’s platform are live, Oppenheimer says, so users can immediately use them, see results, and try out other algorithms at the same time.

The site lets users vote and comment on the utility of different algorithms and shows how many times each has been used. Algorithmia encourages developers to let others see the code behind their algorithms so they can spot errors or ways to improve on their efficiency.

One potential challenge is that it’s not always clear who owns the intellectual property for an algorithm developed by a professor or graduate student at a university. Oppenheimer says it varies from school to school, though he notes that several make theirs open source. Algorithmia itself takes no ownership stake in the algorithms posted on the site.

Eventually, Etzioni believes, Algorithmia can go further than just matching up buyers and sellers as its collection of algorithms grows. He envisions it leading to a new, faster way to compose software, in which developers join together many different algorithms from the selection on offer.
