Friday, June 29. 2012

Google's 16,000-brain neural network just wants to watch cat videos

Via DVICE

-----

The techno-wizards over at Google X, the company's R&D laboratory working on its self-driving cars and Project Glass, linked 16,000 processors together to form a neural network and then had it go forth and try to learn on its own. Turns out, massive digital networks are a lot like bored humans poking at iPads.

The pretty amazing takeaway here is that this 16,000-processor neural network, spread out over 1,000 linked computers, was not told to look for any one thing, but instead discovered on its own that a pattern revolved around cat pictures.

This happened after Google presented the network with image stills from 10 million random YouTube videos. The images were small thumbnails, and Google's network was sorting through them to try and learn something about them. What it found — and we have ourselves to blame for this — was that there were a hell of a lot of cat faces.

"We never told it during the training, 'This is a cat,'" Jeff Dean, a Google fellow working on the project, told the New York Times. "It basically invented the concept of a cat. We probably have other ones that are side views of cats."

The network itself does not know what a cat is like you and I do. (It wouldn't, for instance, feel embarrassed being caught watching something like this in the presence of other neural networks.) What it does realize, however, is that there is something that it can recognize as being the same thing, and if we gave it the word, it would very well refer to it as "cat."

So, what's the big deal? Your computer at home is more than powerful enough to sort images. Where Google's neural network differs is that it looked at these 10 million images, recognized a pattern of cat faces, and then grafted together the idea that it was looking at something specific and distinct. It had a digital thought.

Andrew Ng, a computer scientist at Stanford University who is co-leading the study with Dean, spoke to the benefit of something like a self-teaching neural network: "The idea is that instead of having teams of researchers trying to find out how to find edges, you instead throw a ton of data at the algorithm and you let the data speak and have the software automatically learn from the data." The size of the network is important, too, and the human brain is "a million times larger in terms of the number of neurons and synapses" than Google X's simulated mind, according to the researchers.

"It'd be fantastic if it turns out that all we need to do is take current algorithms and run them bigger," Ng added, "but my gut feeling is that we still don't quite have the right algorithm yet."

Wednesday, April 11. 2012

The history of supercomputers

Via Christian Babski

-----

Extreme Tech has just published an interesting article on the history of super-comput[er,ing] that is worth a read. It is a bit spec-oriented but still gives a good overview of super-computer [r]evolution.

Thursday, April 05. 2012

MIT Project Aims to Deliver Printable, Mass-Market Robots

Via Wired

-----

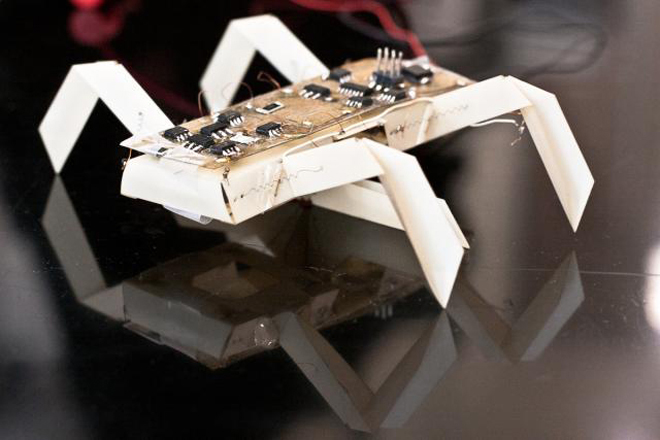

Insect printable robot. Photo: Jason Dorfman, CSAIL/MIT

Printers can make mugs, chocolate and even blood vessels. Now, MIT scientists want to add robo-assistants to the list of printable goodies.

Today, MIT announced a new project, “An Expedition in Computing Printable Programmable Machines,” that aims to give everyone a chance to have his or her own robot.

Need help peering into that unreasonably hard-to-reach cabinet, or wiping down your grimy 15th-story windows? Walk on over to robo-Kinko’s to print, and within 24 hours you could have a fully programmed working origami bot doing your dirty work.

“No system exists today that will take, as specification, your functional needs and will produce a machine capable of fulfilling that need,” MIT robotics engineer and project manager Daniela Rus said.

Unfortunately, the very earliest you’d be able to get your hands on an almost-instant robot might be 2017. The MIT scientists, along with collaborators at Harvard University and the University of Pennsylvania, received a $10 million grant from the National Science Foundation for the 5-year project. Right now, it’s at very early stages of development.

So far, the team has prototyped two mechanical helpers: an insect-like robot and a gripper. The 6-legged tick-like printable robot could be used to check your basement for gas leaks or to play with your cat, Rus says. And the gripper claw, which picks up objects, might be helpful in manufacturing, or for people with disabilities, she says.

The two prototypes cost about $100 and took about 70 minutes to build. The real cost to customers will depend on the robot’s specifications, its capabilities and the types of parts that are required for it to work.

The researchers want to create a one-size-fits-most platform to circumvent the high costs and special hardware and software often associated with robots. If their project works out, you could go to a local robo-printer, pick a design from a catalog and customize a robot according to your needs. Perhaps down the line you could even order-in your designer bot through an app.

Their approach to machine building could “democratize access to robots,” Rus said. She envisions producing devices that could detect toxic chemicals, aid science education in schools, and help around the house.

Although bringing robots to the masses sounds like a great idea (a sniffing bot to find lost socks would come in handy), there are still several potential roadblocks to consider — for example, how users, especially novice ones, will interact with the printable robots.

“Maybe this novice user will issue a command that will break the device, and we would like to develop programming environments that have the capability of catching these bad commands,” Rus said.

As it stands now, a robot would come pre-programmed to perform a set of tasks, but if a user wanted more advanced actions, he or she could build up those actions using the bot’s basic capabilities. That advanced set of commands could be programmed in a computer and beamed wirelessly to the robot. And as voice parsing systems get better, Rus thinks you might be able to simply tell your robot to do your bidding.

Durability is another issue. Would these robots be single-use only? If so, trekking to robo-Kinko’s every time you needed a bot to look behind the fridge might get old. These are all considerations the scientists will be grappling with in the lab. They’ll have at least five years to tease out some solutions.

In the meantime, it’s worth noting that other groups are also building robots using printers. German engineers printed a white robotic spider last year. The arachnoid carried a camera and equipment to assess chemical spills.

And at Drexel University, paleontologist Kenneth Lacovara and mechanical engineer James Tangorra are trying to create a robotic dinosaur from dino-bone replicas. The 3-D-printed bones are scaled versions of laser-scanned fossils. By the end of 2012, Lacovara and Tangorra hope to have a fully mobile robotic dinosaur, which they want to use to study how dinosaurs, like large sauropods, moved.

Lacovara thinks the MIT project is an exciting and promising one: “If it’s a plug-and-play system, then it’s feasible,” he said. But “obviously, it [also] depends on the complexity of the robot.” He’s seen complex machines with working gears printed in one piece, he says.

Right now, the MIT researchers are developing an API that would facilitate custom robot design and writing algorithms for the assembly process and operations.

If their project works out, we could all have a bot to call our own in a few years. Who said print was dead?

Thursday, March 29. 2012

LG flexible epaper devices promised for April launch

Via Slash Gear

-----

LG Display has launched a new, 6-inch flexible epaper display that the company expects to show up in bendable products by the beginning of next month. The panel, a 1024 x 768 monochrome sheet, can be bent up to 40 degrees without breaking; in addition, because LG Display has used a flexible plastic substrate rather than the more traditional glass, it’s less than half the weight of a traditional epaper panel.

That means lighter gadgets that are actually more durable, since the panels should be more resilient to drops or bumps. They can also be thinner: the plastic panel is a third slimmer than glass equivalents, at just 0.7mm thick.

LG Display says it can drop its new screen from 1.5m – the average height a device is held when it’s being used for reading, apparently – without any resulting damage. The company also hit the screen with a plastic hammer, leaving no scratches or breaks, ETNews reports.

LG isn’t the only company to be working on flexible screens this year. Samsung has already confirmed that it is looking at launching devices using flexible AMOLED panels in 2012, though it’s unclear whether the screens will actually fold or bend, or simply be used to wrap around smartphones for new types of UI.

The first products using the LG Display flexible panel are on track for a release in the European market in early April, the company claims. No word on what vendors will be offering them, nor how pricing will compare to traditional glass-substrate epaper.

Tuesday, March 27. 2012

TI Demos OMAP5 WiFi Display Mirroring on Development Platform

Via AnandTech

-----

On our last day at MWC 2012, TI pulled me aside for a private demonstration of WiFi Display functionality they had only just recently finalized working on their OMAP 5 development platform. The demo showed WiFi Display mirroring working between the development device’s 720p display and an adjacent notebook which was being used as the WiFi Display sink.

TI emphasized that what’s different about their WiFi Display implementation is that it works using the display framebuffer natively and not a memory copy, which would introduce delay and take up space. In addition, the encoder being used is the IVA-HD accelerator doing the WiFi Display specification’s mandatory H.264 baseline Level 3.1 encode, not a software encoder running on the application processor. The demo was mirroring the development tablet’s 720p display; TI says they could easily do 1080p as well, but that would require a 1080p framebuffer to snoop on the host device. Latency between the development platform and display sink was just 15 ms, essentially one frame at 60 Hz (about 16.7 ms).
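The "one frame at 60 Hz" comparison is simple arithmetic, and a short sketch makes it concrete. The function names here are illustrative only; the 15 ms and 60 Hz figures are the ones quoted above.

```python
# Illustrative helpers (not part of any TI tooling) for comparing
# a measured latency against the frame period of a display.

def frame_period_ms(refresh_hz: float) -> float:
    """Duration of one display frame, in milliseconds."""
    return 1000.0 / refresh_hz


def latency_in_frames(latency_ms: float, refresh_hz: float) -> float:
    """Express a latency as a fraction of one frame period."""
    return latency_ms / frame_period_ms(refresh_hz)


if __name__ == "__main__":
    period = frame_period_ms(60)
    frames = latency_in_frames(15, 60)
    # Prints: 16.67 ms per frame; 15 ms is 0.90 frames
    print(f"{period:.2f} ms per frame; 15 ms is {frames:.2f} frames")
```

So the quoted latency sits just under a single frame period, which is why the mirrored image lags by at most about one frame.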

The demonstration worked live over the air at TI’s MWC booth and also used a WiLink 8 series WLAN combo chip. There was some stuttering, however this is understandable given the fact that this demo was using TCP (live implementations will use UDP) and of course just how crowded 2.4 and 5 GHz spectrum is at these conferences. In addition, TI collaborated with Screenovate for their application development and WiFi Display optimization secret sauce, which I’m guessing has to do with adaptive bitrate or possibly more.

Enabling higher than 480p software encoded WiFi Display is just one more obvious piece of the puzzle which will eventually enable smartphones and tablets to obviate standalone streaming devices.

-----

Personal Comment:

A fairly obvious yet interesting step forward, as mobile device users increasingly ask to be able to beam (or send 'to TV') their device's screen... which should turn any (mobile) device into a full-duplex, video-broadcasting-enabled device (user interaction included!)... and one may then succeed in getting rid of some cables in the same sitting?!

Monday, March 26. 2012

New Samsung sensor captures image, depth simultaneously

Via electronista

-----

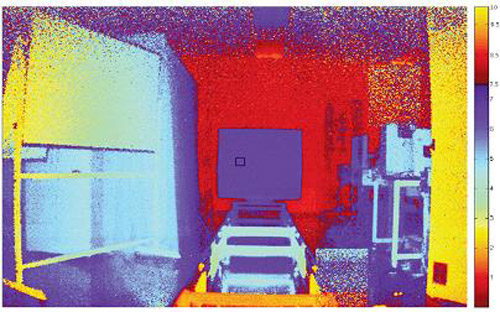

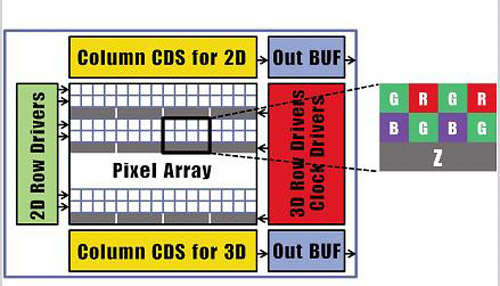

Samsung has developed a new camera sensor technology that offers the ability to simultaneously capture image and depth. The breakthrough could potentially be applied to smartphones and other devices as an alternative method of control where hand gestures could be used to carry out functions without having to touch a screen or other input. According to Tech-On, it uses a CMOS sensor with red, blue and green pixels, combined with an additional z-pixel for capturing depth.

The new Samsung sensor can capture images at a resolution of 1,920x720 using its traditional RGB array, while it can also capture a depth image at a resolution of 480x360 with the z-pixel. It is able to achieve its depth capabilities by a special process whereby the z-pixel is located beneath the RGB pixel array. Samsung’s boffins then placed a special barrier between the RGB and z pixels, allowing the light they capture to give the effect that the z-pixel is three times its actual size.

In this early iteration of the new technology, Samsung used FSI technology only. In future applications, BSI could be applied, doubling the quantum efficiency of the design and further reducing cross-talk to the RGB pixels.

-----

Personal Comment:

Some additional information on BSI (Backside illumination)/FSI (Frontside Illumination):

Monday, February 06. 2012

The Great Disk Drive in the Sky: How Web giants store big—and we mean big—data

Via Ars Technica

-----

Google technicians test hard drives at their data center in Moncks Corner, South Carolina -- Image courtesy of Google Datacenter Video

Consider the tech it takes to back the search box on Google's home page: behind the algorithms, the cached search terms, and the other features that spring to life as you type in a query sits a data store that essentially contains a full-text snapshot of most of the Web. While you and thousands of other people are simultaneously submitting searches, that snapshot is constantly being updated with a firehose of changes. At the same time, the data is being processed by thousands of individual server processes, each doing everything from figuring out which contextual ads you will be served to determining in what order to cough up search results.

The storage system backing Google's search engine has to be able to serve millions of data reads and writes daily from thousands of individual processes running on thousands of servers, can almost never be down for a backup or maintenance, and has to perpetually grow to accommodate the ever-expanding number of pages added by Google's Web-crawling robots. In total, Google processes over 20 petabytes of data per day.

That's not something that Google could pull off with an off-the-shelf storage architecture. And the same goes for other Web and cloud computing giants running hyper-scale data centers, such as Amazon and Facebook. While most data centers have addressed scaling up storage by adding more disk capacity on a storage area network, more storage servers, and often more database servers, these approaches fail to scale because of performance constraints in a cloud environment. In the cloud, there can be potentially thousands of active users of data at any moment, and the data being read and written at any given moment reaches into the thousands of terabytes.

The problem isn't simply an issue of disk read and write speeds. With data flows at these volumes, the main problem is storage network throughput; even with the best of switches and storage servers, traditional SAN architectures can become a performance bottleneck for data processing.

Then there's the cost of scaling up storage conventionally. Given the rate that hyper-scale web companies add capacity (Amazon, for example, adds as much capacity to its data centers each day as the whole company ran on in 2001, according to Amazon Vice President James Hamilton), the cost required to properly roll out needed storage in the same way most data centers do would be huge in terms of required management, hardware, and software costs. That cost goes up even higher when relational databases are added to the mix, depending on how an organization approaches segmenting and replicating them.

The need for this kind of perpetually scalable, durable storage has driven the giants of the Web—Google, Amazon, Facebook, Microsoft, and others—to adopt a different sort of storage solution: distributed file systems based on object-based storage. These systems were at least in part inspired by other distributed and clustered filesystems such as Red Hat's Global File System and IBM's General Parallel Filesystem.

The architecture of the cloud giants' distributed file systems separates the metadata (the data about the content) from the stored data itself. That allows for high volumes of parallel reading and writing of data across multiple replicas, and the tossing of concepts like "file locking" out the window.

The impact of these distributed file systems extends far beyond the walls of the hyper-scale data centers they were built for— they have a direct impact on how those who use public cloud services such as Amazon's EC2, Google's AppEngine, and Microsoft's Azure develop and deploy applications. And companies, universities, and government agencies looking for a way to rapidly store and provide access to huge volumes of data are increasingly turning to a whole new class of data storage systems inspired by the systems built by cloud giants. So it's worth understanding the history of their development, and the engineering compromises that were made in the process.

Google File System

Google was among the first of the major Web players to face the storage scalability problem head-on. And the answer arrived at by Google's engineers in 2003 was to build a distributed file system custom-fit to Google's data center strategy—Google File System (GFS).

GFS is the basis for nearly all of the company's cloud services. It handles data storage, including the company's BigTable database and the data store for Google's AppEngine platform-as-a-service, and it provides the data feed for Google's search engine and other applications. The design decisions Google made in creating GFS have driven much of the software engineering behind its cloud architecture, and vice-versa. Google tends to store data for applications in enormous files, and it uses files as "producer-consumer queues," where hundreds of machines collecting data may all be writing to the same file. That file might be processed by another application that merges or analyzes the data—perhaps even while the data is still being written.

Google keeps most technical details of GFS to itself, for obvious reasons. But as described by Google research fellow Sanjay Ghemawat, principal engineer Howard Gobioff, and senior staff engineer Shun-Tak Leung in a paper first published in 2003, GFS was designed with some very specific priorities in mind: Google wanted to turn large numbers of cheap servers and hard drives into a reliable data store for hundreds of terabytes of data that could manage itself around failures and errors. And it needed to be designed for Google's way of gathering and reading data, allowing multiple applications to append data to the system simultaneously in large volumes and to access it at high speeds.

Much in the way that a RAID 5 storage array "stripes" data across multiple disks to gain protection from failures, GFS distributes files in fixed-size chunks which are replicated across a cluster of servers. Because they're cheap computers using cheap hard drives, some of those servers are bound to fail at one point or another—so GFS is designed to be tolerant of that without losing (too much) data.
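To make the chunk-and-replicate idea concrete, here is a minimal sketch. It is not Google's code: the 64 MB chunk size, the replica count of three, the round-robin placement, and every name below are assumptions chosen purely for illustration.

```python
from typing import Dict, List, Tuple

CHUNK_SIZE = 64 * 1024 * 1024  # assumed 64 MB fixed chunk size
REPLICAS = 3                   # assumed replica count, for illustration only


def split_into_chunks(file_size: int,
                      chunk_size: int = CHUNK_SIZE) -> List[Tuple[int, int]]:
    """Return (offset, length) pairs that cover a file of file_size bytes."""
    return [(offset, min(chunk_size, file_size - offset))
            for offset in range(0, file_size, chunk_size)]


def place_replicas(num_chunks: int, servers: List[str],
                   replicas: int = REPLICAS) -> Dict[int, List[str]]:
    """Hypothetical round-robin placement: each chunk lands on
    `replicas` distinct servers, so losing one server loses no chunk."""
    if replicas > len(servers):
        raise ValueError("need at least as many servers as replicas")
    return {
        index: [servers[(index + r) % len(servers)] for r in range(replicas)]
        for index in range(num_chunks)
    }


if __name__ == "__main__":
    mb = 1024 * 1024
    chunks = split_into_chunks(150 * mb)  # two full chunks plus a 22 MB tail
    placement = place_replicas(len(chunks), ["s0", "s1", "s2", "s3", "s4"])
    for index, (offset, length) in enumerate(chunks):
        print(index, offset // mb, length // mb, placement[index])
```

A real system would weigh disk usage, load and network topology when choosing servers; round-robin is only the simplest policy that keeps the replicas of a chunk on different machines.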

But the similarities between RAID and GFS end there, because those servers can be distributed across the network—either within a single physical data center or spread over different data centers, depending on the purpose of the data. GFS is designed primarily for bulk processing of lots of data. Reading data at high speed is what's important, not the speed of access to a particular section of a file, or the speed at which data is written to the file system. GFS provides that high output at the expense of more fine-grained reads and writes to files and more rapid writing of data to disk. As Ghemawat and company put it in their paper, "small writes at arbitrary positions in a file are supported, but do not have to be efficient."

This distributed nature, along with the sheer volume of data GFS handles (millions of files, most of them larger than 100 megabytes and generally ranging into gigabytes), requires some trade-offs that make GFS very much unlike the sort of file system you'd normally mount on a single server. Because hundreds of individual processes might be writing to or reading from a file simultaneously, GFS needs to support "atomicity" of data: rolling back writes that fail without impacting other applications. And it needs to maintain data integrity with a very low synchronization overhead to avoid dragging down performance.

GFS consists of three layers: a GFS client, which handles requests for data from applications; a master, which uses an in-memory index to track the names of data files and the location of their chunks; and the "chunk servers" themselves. Originally, for the sake of simplicity, GFS used a single master for each cluster, so the system was designed to get the master out of the way of data access as much as possible. Google has since developed a distributed master system that can handle hundreds of masters, each of which can handle about 100 million files.

When the GFS client gets a request for a specific data file, it requests the location of the data from the master server. The master server provides the location of one of the replicas, and the client then communicates directly with that chunk server for reads and writes during the rest of that particular session. The master doesn't get involved again unless there's a failure.
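The read path described above can be sketched as a toy model. GFS's real interfaces are not public, so every class and method name here is hypothetical, reads are limited to a single chunk, and the tiny chunk size exists only to keep the demo readable.

```python
class ChunkServer:
    """Holds chunk contents and serves byte ranges directly to clients."""

    def __init__(self, name):
        self.name = name
        self.chunks = {}  # chunk handle -> bytes

    def read(self, handle, offset, length):
        return self.chunks[handle][offset:offset + length]


class Master:
    """Keeps only metadata in memory: file names and chunk locations."""

    def __init__(self):
        self.files = {}      # file name -> ordered list of chunk handles
        self.locations = {}  # chunk handle -> list of ChunkServer replicas
        self.lookups = 0     # counts how often clients had to ask the master

    def locate(self, filename, chunk_index):
        self.lookups += 1
        handle = self.files[filename][chunk_index]
        return handle, self.locations[handle][0]  # hand back one replica


class Client:
    """Asks the master once per chunk, then talks to the chunk server."""

    def __init__(self, master, chunk_size):
        self.master = master
        self.chunk_size = chunk_size
        self.cache = {}  # (file name, chunk index) -> (handle, server)

    def read(self, filename, offset, length):
        index = offset // self.chunk_size
        key = (filename, index)
        if key not in self.cache:
            self.cache[key] = self.master.locate(filename, index)
        handle, server = self.cache[key]
        # Simplification: the read must not cross a chunk boundary.
        return server.read(handle, offset % self.chunk_size, length)


if __name__ == "__main__":
    server = ChunkServer("cs1")
    server.chunks["h0"] = b"abcdefgh"
    master = Master()
    master.files["/logs/crawl"] = ["h0"]
    master.locations["h0"] = [server]
    client = Client(master, chunk_size=8)
    print(client.read("/logs/crawl", 2, 3), client.read("/logs/crawl", 0, 2))
    print("master lookups:", master.lookups)
```

The point of the design shows up in the lookup counter: however many reads hit the same chunk, the master is consulted only once, which keeps it out of the data path.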

To ensure that the data firehose is highly available, GFS trades off some other things, like consistency across replicas. GFS does enforce data's atomicity: it will return an error if a write fails, then roll the write back in metadata and promote a replica of the old data, for example. But the master's lack of involvement in data writes means that as data gets written to the system, it doesn't immediately get replicated across the whole GFS cluster. The system follows what Google calls a "relaxed consistency model" out of the necessities of dealing with simultaneous access to data and the limits of the network.

This means that GFS is entirely okay with serving up stale data from an old replica if that's what's the most available at the moment—so long as the data eventually gets updated. The master tracks changes, or "mutations," of data within chunks using version numbers to indicate when the changes happened. As some of the replicas get left behind (or grow "stale"), the GFS master makes sure those chunks aren't served up to clients until they're first brought up-to-date.
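Version-number tracking of this kind can be sketched in a few lines. This is an illustrative model only: the class, its methods, and the idea of passing in the list of reachable replicas are assumptions, not GFS's actual bookkeeping.

```python
class ChunkVersions:
    """Tracks, for one chunk, which replicas hold the latest version."""

    def __init__(self, replicas):
        self.latest = 0
        self.replica_versions = {name: 0 for name in replicas}

    def mutate(self, reachable):
        """Apply a mutation: bump the version on every replica that
        could be reached. Unreachable replicas fall behind (go stale)."""
        self.latest += 1
        for name in reachable:
            self.replica_versions[name] = self.latest

    def servable(self):
        """Replicas the master may hand out to new client requests."""
        return sorted(name for name, version in self.replica_versions.items()
                      if version == self.latest)

    def resync(self, name):
        """Bring a stale replica up to date (the data copy is elided)."""
        self.replica_versions[name] = self.latest


if __name__ == "__main__":
    chunk = ChunkVersions(["a", "b", "c"])
    chunk.mutate(reachable=["a", "b"])  # replica "c" misses the write
    print(chunk.servable())             # "c" is withheld while stale
    chunk.resync("c")
    print(chunk.servable())             # all three are servable again
```

Comparing a replica's version against the latest known version is a cheap check, which is what lets the master filter out stale copies without inspecting the data itself.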

But that doesn't necessarily happen with sessions already connected to those chunks. The metadata about changes doesn't become visible until the master has processed changes and reflected them in its metadata. That metadata also needs to be replicated in multiple locations in case the master fails—because otherwise the whole file system is lost. And if there's a failure at the master in the middle of a write, the changes are effectively lost as well. This isn't a big problem because of the way that Google deals with data: the vast majority of data used by its applications rarely changes, and when it does data is usually appended rather than modified in place.

While GFS was designed for the apps Google ran in 2003, it wasn't long before Google started running into scalability issues. Even before the company bought YouTube, GFS was starting to hit the wall—largely because the new applications Google was adding didn't work well with the ideal 64-megabyte file size. To get around that, Google turned to Bigtable, a table-based data store that vaguely resembles a database and sits atop GFS. Like GFS below it, Bigtable is mostly write-once, so changes are stored as appends to the table—which Google uses in applications like Google Docs to handle versioning, for example.

The foregoing is mostly academic if you don't work at Google (though it may help users of AppEngine, Google Cloud Storage and other Google services to understand what's going on under the hood a bit better). While Google Cloud Storage provides a public way to store and access objects stored in GFS through a Web interface, the exact interfaces and tools used to drive GFS within Google haven't been made public. But the paper describing GFS led to the development of a more widely used distributed file system that behaves a lot like it: the Hadoop Distributed File System.

Hadoop DFS

Developed in Java and open-sourced as a project of the Apache Foundation, Hadoop has developed such a following among Web companies and others coping with "big data" problems that it has been described as the "Swiss army knife of the 21st Century." All the hype means that sooner or later, you're more likely to find yourself dealing with Hadoop in some form than with other distributed file systems, especially when Microsoft starts shipping it as a Windows Server add-on.

Named by developer Doug Cutting after his son's stuffed elephant, Hadoop was "inspired" by GFS and Google's MapReduce distributed computing environment. In 2004, as Cutting and others working on the Apache Nutch search engine project sought a way to bring the crawler and indexer up to "Web scale," Cutting read Google's papers on GFS and MapReduce and started to work on his own implementation. While most of the enthusiasm for Hadoop comes from Hadoop's distributed data processing capability, derived from its MapReduce-inspired distributed processing management, the Hadoop Distributed File System is what handles the massive data sets it works with.

Hadoop is developed under the Apache license, and there are a number of commercial and free distributions available. The distribution I worked with was from Cloudera (Doug Cutting's current employer)—the Cloudera Distribution Including Apache Hadoop (CDH), the open-source version of Cloudera's enterprise platform, and Cloudera Service and Configuration Express Edition, which is free for up to 50 nodes.

HortonWorks, the company with which Microsoft has aligned to help move Hadoop to Azure and Windows Server (and home to much of the original Yahoo team that worked on Hadoop), has its own Hadoop-based HortonWorks Data Platform in a limited "technology preview" release. There's also a Debian package of the Apache Core, and a number of other open-source and commercial products that are based on Hadoop in some form.

HDFS can be used to support a wide range of applications where high volumes of cheap hardware and big data collide. But because of its architecture, it's not exactly well-suited to general purpose data storage, and it gives up a certain amount of flexibility. HDFS has to do away with certain things usually associated with file systems in order to make sure it can perform well with massive amounts of data spread out over hundreds, or even thousands, of physical machines—things like interactive access to data.

While Hadoop runs in Java, there are a number of ways to interact with HDFS besides its Java API. There's a C-wrapped version of the API, a command line interface through Hadoop, and files can be browsed through HTTP requests. There's also MountableHDFS, an add-on based on FUSE that allows HDFS to be mounted as a file system by most operating systems. Developers are working on a WebDAV interface as well to allow Web-based writing of data to the system.

HDFS follows the architectural path laid out by Google's GFS fairly closely, following its three-tiered, single master model. Each Hadoop cluster has a master server called the "NameNode" which tracks the metadata about the location and replication state of each 64-megabyte "block" of storage. Data is replicated across the "DataNodes" in the cluster—the slave systems that handle data reads and writes. Each block is replicated three times by default, though the number of replicas can be increased by changing the configuration of the cluster.

As in GFS, HDFS gets the master server out of the read-write loop as quickly as possible to avoid creating a performance bottleneck. When a request is made to access data from HDFS, the NameNode sends back the location information for the block on the DataNode that is closest to where the request originated. The NameNode also tracks the health of each DataNode through a "heartbeat" protocol and stops sending requests to DataNodes that don't respond, marking them "dead."
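The heartbeat bookkeeping and "closest live DataNode" choice might look something like the sketch below. This is not Hadoop's implementation: the class, the timeout value, the explicit `now` timestamps, and the distance table are all hypothetical stand-ins.

```python
class NameNodeHealth:
    """Toy model of heartbeat tracking; time is passed in explicitly
    so the behavior is deterministic and easy to follow."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s  # assumed threshold, not an HDFS default
        self.last_seen = {}         # DataNode name -> last heartbeat time

    def heartbeat(self, node, now):
        self.last_seen[node] = now

    def live_nodes(self, now):
        """Nodes whose last heartbeat is recent enough to trust."""
        return {node for node, seen in self.last_seen.items()
                if now - seen <= self.timeout_s}

    def pick(self, candidates, distance, now):
        """Choose the closest live DataNode among those holding a block."""
        live = self.live_nodes(now)
        usable = [node for node in candidates if node in live]
        if not usable:
            raise LookupError("no live DataNode holds this block")
        return min(usable, key=lambda node: distance[node])


if __name__ == "__main__":
    health = NameNodeHealth(timeout_s=30)
    health.heartbeat("dn1", now=0)
    health.heartbeat("dn2", now=0)
    distance = {"dn1": 0, "dn2": 2}  # hypothetical network distances
    print(health.pick(["dn1", "dn2"], distance, now=10))  # closest: dn1
    health.heartbeat("dn2", now=25)  # dn1 has gone silent
    print(health.pick(["dn1", "dn2"], distance, now=40))  # falls back to dn2
```

Once dn1 stops reporting, it is treated as "dead" and requests are steered to the next-closest replica instead.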

After the handoff, the NameNode doesn't handle any further interactions. Edits to data on the DataNodes are reported back to the NameNode and recorded in a log, which then guides replication across the other DataNodes with replicas of the changed data. As with GFS, this results in a relatively lazy form of consistency, and while the NameNode will steer new requests to the most recently modified block of data, jobs in progress will still hit stale data on the DataNodes they've been assigned to.

That's not supposed to happen much, however, as HDFS data is supposed to be "write once"—changes are usually appended to the data, rather than overwriting existing data, making for simpler consistency. And because of the nature of Hadoop applications, data tends to get written to HDFS in big batches.

When a client sends data to be written to HDFS, it first gets staged in a temporary local file by the client application until the data written reaches the size of a data block (64 megabytes, by default). Then the client contacts the NameNode and gets back a DataNode and block location to write the data to. The process is repeated for each block of data committed, one block at a time. This reduces the amount of network traffic created, though it slows down the write process as well. But HDFS is all about the reads, not the writes.
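The staging behavior can be illustrated with a small writer that buffers data and only contacts the NameNode once a full block has accumulated. This is a sketch, not the HDFS client: it buffers in memory rather than in a temporary local file, and `allocate_block` and `send_block` are hypothetical callbacks standing in for the NameNode and DataNode round trips.

```python
class StagingWriter:
    """Buffers writes locally and ships them one full block at a time."""

    def __init__(self, block_size, allocate_block, send_block):
        self.block_size = block_size
        self.allocate_block = allocate_block  # stand-in for asking the NameNode
        self.send_block = send_block          # stand-in for writing to a DataNode
        self.buffer = bytearray()

    def write(self, data):
        self.buffer.extend(data)
        # Only talk to the cluster once a whole block has accumulated.
        while len(self.buffer) >= self.block_size:
            self._flush(self.block_size)

    def close(self):
        # The final, possibly short, block is shipped on close.
        if self.buffer:
            self._flush(len(self.buffer))

    def _flush(self, count):
        block = bytes(self.buffer[:count])
        del self.buffer[:count]
        target = self.allocate_block()
        self.send_block(target, block)


if __name__ == "__main__":
    sent = []
    targets = iter(["dn1", "dn2", "dn3"])
    writer = StagingWriter(
        block_size=4,  # tiny block size so the demo is easy to read
        allocate_block=lambda: next(targets),
        send_block=lambda target, block: sent.append((target, block)),
    )
    writer.write(b"abcdefghij")
    writer.close()
    print(sent)
```

Ten bytes with a four-byte block size produce two full blocks during `write` and one short block on `close`, so the cluster is contacted three times rather than once per small write.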

Another way HDFS can minimize the amount of write traffic over the network is in how it handles replication. By activating an HDFS feature called "rack awareness" to manage distribution of replicas, an administrator can specify a rack ID for each node, designating where it is physically located through a variable in the network configuration script. By default, all nodes are in the same "rack." But when rack awareness is configured, HDFS places one replica of each block on another node within the same data center rack, and another in a different rack. Keeping one copy in-rack minimizes the amount of data-writing traffic across the network, on the reasoning that a whole-rack failure is less likely than the failure of a single node. In theory, this improves overall write performance to HDFS without sacrificing reliability.
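A rough sketch of that placement rule follows. It mirrors the description above rather than any particular Hadoop release (the actual policy may differ between versions), and the function name, the node-to-rack mapping, and the alphabetical tie-breaking are all illustrative assumptions.

```python
def place_block(writer, rack_of):
    """Return three replica locations for a block written from `writer`:
    the writer itself, another node in the same rack, and a node in a
    different rack. Ties are broken alphabetically for determinism."""
    local_rack = rack_of[writer]
    same_rack = sorted(node for node, rack in rack_of.items()
                       if rack == local_rack and node != writer)
    other_rack = sorted(node for node, rack in rack_of.items()
                        if rack != local_rack)
    if not same_rack or not other_rack:
        raise ValueError("need a second local node and a second rack")
    return [writer, same_rack[0], other_rack[0]]


def cross_rack_copies(replicas, rack_of):
    """Count replicas that had to leave the writer's rack."""
    local_rack = rack_of[replicas[0]]
    return sum(1 for node in replicas[1:] if rack_of[node] != local_rack)


if __name__ == "__main__":
    # Hypothetical four-node cluster spread across two racks.
    rack_of = {"n1": "rackA", "n2": "rackA", "n3": "rackB", "n4": "rackB"}
    replicas = place_block("n1", rack_of)
    print(replicas, "cross-rack copies:", cross_rack_copies(replicas, rack_of))
```

With this layout only one of the two extra copies crosses the inter-rack link, while the block still survives the loss of either a single node or an entire rack.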

As with the early version of GFS, HDFS's NameNode potentially creates a single point of failure for what's supposed to be a highly available and distributed system. If the metadata in the NameNode is lost, the whole HDFS environment becomes essentially unreadable, like a hard disk that has lost its file allocation table. HDFS supports using a "backup node," which keeps a synchronized version of the NameNode's metadata in-memory, and stores snapshots of previous states of the system so that it can be rolled back if necessary. Snapshots can also be stored separately on what's called a "checkpoint node." However, according to the HDFS documentation, there's currently no support within HDFS for automatically restarting a crashed NameNode, and the backup node doesn't automatically kick in and replace the master.

HDFS and GFS were both engineered with search-engine style tasks in mind. But for cloud services targeted at more general types of computing, the "write once" approach and other compromises made to ensure big data query performance are less than ideal—which is why Amazon developed its own distributed storage platform, called Dynamo.

Amazon's S3 and Dynamo

As Amazon began to build its Web services platform, the company had much different application issues than Google.

Until recently, like GFS, Dynamo hasn't been directly exposed to customers. As Amazon CTO Werner Vogels explained in his blog in 2007, it is the underpinning of storage services and other parts of Amazon Web Services that are highly exposed to Amazon customers, including Amazon's Simple Storage Service (S3) and SimpleDB. But on January 18 of this year, Amazon launched a database service called DynamoDB, based on the latest improvements to Dynamo. It gave customers a direct interface as a "NoSQL" database.

Dynamo has a few things in common with GFS and HDFS: it's also designed with less concern for consistency of data across the system in exchange for high availability, and to run on Amazon's massive collection of commodity hardware. But that's where the similarities start to fade away, because Amazon's requirements for Dynamo were totally different.

Amazon needed a file system that could deal with much more general purpose data access—things like Amazon's own e-commerce capabilities, including customer shopping carts, and other very transactional systems. And the company needed much more granular and dynamic access to data. Rather than being optimized for big streams of data, the need was for more random access to smaller components, like the sort of access used to serve up webpages.

According to the paper presented by Vogels and his team at the Symposium on Operating Systems Principles conference in October 2007, "Dynamo targets applications that need to store objects that are relatively small (usually less than 1 MB)." And rather than being optimized for reads, Dynamo is designed to be "always writeable," being highly available for data input—precisely the opposite of Google's model.

"For a number of Amazon services," the Amazon Dynamo team wrote in their paper, "rejecting customer updates could result in a poor customer experience. For instance, the shopping cart service must allow customers to add and remove items from their shopping cart even amidst network and server failures." At the same time, the services based on Dynamo can be applied to much larger data sets—in fact, Amazon offers the Hadoop-based Elastic MapReduce service, which is based on S3 atop Dynamo.

In order to meet those requirements, Dynamo's architecture is almost the polar opposite of GFS—it more closely resembles a peer-to-peer system than the master-slave approach. Dynamo also flips how consistency is handled, moving away from having the system resolve replication after data is written, and instead doing conflict resolution on data when executing reads. That way, Dynamo never rejects a data write, regardless of whether it's new data or a change to existing data, and the replication catches up later.

Because of concerns about the pitfalls of a central master server failure (based on previous experiences with service outages), and the pace at which Amazon adds new infrastructure to its cloud, Vogels' team chose a decentralized approach to replication. It was based on a self-governing data partitioning scheme that used the concept of consistent hashing. The resources within each Dynamo cluster are mapped as a continuous circle of address spaces, and each storage node in the system is given a random value as it is added to the cluster—a value that represents its "position" on the Dynamo ring. Based on the number of storage nodes in the cluster, each node takes responsibility for a chunk of address spaces based on its position. As storage nodes are added to the ring, they take over chunks of address space and the nodes on either side of them in the ring adjust their responsibility. Since Amazon was concerned about unbalanced loads on storage systems as newer, better hardware was added to clusters, Dynamo allows multiple virtual nodes to be assigned to each physical node, giving bigger systems a bigger share of the address space in the cluster.
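The ring described above can be modeled compactly in Python. This is a sketch under stated assumptions, not Amazon's implementation: `HashRing` and `ring_position` are hypothetical names, and virtual-node positions are derived by hashing a label (so the example is repeatable) rather than drawn at random as the text describes.

```python
import bisect
import hashlib


def ring_position(label: str) -> int:
    """Map a string to a 128-bit position on the ring (MD5, as an assumption)."""
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest(), "big")


class HashRing:
    def __init__(self):
        self._positions = []  # sorted ring positions of all virtual nodes
        self._owner = {}      # ring position -> physical node name

    def add_node(self, name: str, vnodes: int = 1) -> None:
        # More virtual nodes means a larger share of the address space.
        for i in range(vnodes):
            pos = ring_position(f"{name}#vnode{i}")
            bisect.insort(self._positions, pos)
            self._owner[pos] = name

    def owner_of(self, position: int) -> str:
        # Walk clockwise to the first virtual node past the position,
        # wrapping around at the end of the ring.
        idx = bisect.bisect_right(self._positions, position)
        return self._owner[self._positions[idx % len(self._positions)]]
```

The property that matters is that adding a node only moves addresses to the newcomer: every address either keeps its previous owner or is taken over by the new node, so the rest of the ring is undisturbed.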

When data gets written to Dynamo—through a "put" request—the system assigns a key to the data object being written. That key gets run through a 128-bit MD5 hash; the value of the hash is used as an address within the ring for the data. The data node responsible for that address becomes the "coordinator node" for that data and is responsible for handling requests for it and prompting replication of the data to other nodes in the ring, as shown in the Amazon diagram below:

This spreads requests out across all the nodes in the system. In the event of a failure of one of the nodes, its virtual neighbors on the ring start picking up requests and fill in the vacant space with their replicas.
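To make the key-to-coordinator step and the failover behavior concrete, here is a small Python sketch. The function names, the node names, and the replica count of three are illustrative assumptions; only the use of an MD5 hash as the ring address comes from the description above.

```python
import bisect
import hashlib


def md5_position(text: str) -> int:
    """128-bit MD5 digest of the text, read as an integer ring address."""
    return int.from_bytes(hashlib.md5(text.encode("utf-8")).digest(), "big")


def preference_list(key, nodes, replicas=3, down=frozenset()):
    """Return the live nodes that should hold `key`, coordinator first."""
    ring = sorted((md5_position(n), n) for n in nodes)
    positions = [p for p, _ in ring]
    # First node clockwise from the key's address is the coordinator.
    start = bisect.bisect_right(positions, md5_position(key))
    chosen = []
    for step in range(len(ring)):
        node = ring[(start + step) % len(ring)][1]
        # Skip failed nodes; their ring neighbors pick up the slack.
        if node not in down and node not in chosen:
            chosen.append(node)
        if len(chosen) == replicas:
            break
    return chosen
```

If the coordinator for a key is marked as down, the same call returns the next nodes along the ring, which is the "virtual neighbors pick up requests" behavior in miniature.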

Then there's Dynamo's consistency-checking scheme. When a "get" request comes in from a client application, Dynamo polls its nodes to see who has a copy of the requested data. Each node with a replica responds, providing information about when its last change was made, based on a vector clock—a versioning system that tracks the dependencies of changes to data. Depending on how the polling is configured, the request handler can wait to get just the first response back and return it (if the application is in a hurry for any data and there's low risk of a conflict—like in a Hadoop application) or it can wait for two, three, or more responses. For multiple responses from the storage nodes, the handler checks to see which is most up-to-date and alerts the nodes that are stale to copy the data from the most current, or it merges versions that have non-conflicting edits. This scheme works well for resiliency under most circumstances—if nodes die, and new ones are brought online, the latest data gets replicated to the new node.
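A vector clock comparison of the kind described above can be sketched as follows. This is a simplified illustration rather than Dynamo's code: clocks are plain dictionaries mapping a node name to an update counter, and `descends` and `reconcile` are hypothetical helper names.

```python
def descends(a: dict, b: dict) -> bool:
    """True if clock `a` has seen every update recorded in clock `b`."""
    return all(a.get(node, 0) >= count for node, count in b.items())


def reconcile(versions):
    """Drop versions that another version supersedes.

    `versions` is a list of (vector_clock, value) pairs from the replicas.
    One survivor means a clear winner; several survivors are concurrent
    edits that still have to be merged.
    """
    survivors = []
    for clock, value in versions:
        dominated = any(
            descends(other, clock) and other != clock
            for other, _ in versions
        )
        if not dominated and (clock, value) not in survivors:
            survivors.append((clock, value))
    return survivors
```

Given replies with clocks `{"sx": 1}` and `{"sx": 2}`, only the second survives, and the stale replica can be told to copy it. Replies with clocks `{"sx": 2, "sy": 1}` and `{"sx": 2, "sz": 1}` both survive, because neither has seen the other's change.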

The most recent improvements in Dynamo, and the creation of DynamoDB, were the result of looking at why Amazon's internal developers had not adopted Dynamo itself as the base for their applications, and instead relied on the services built atop it—S3, SimpleDB, and Elastic Block Store. The problems that Amazon faced in its April 2011 outage were the result of replication set up between clusters higher in the application stack—in Amazon's Elastic Block Store, where replication overloaded the available additional capacity, rather than because of problems with Dynamo itself.

The overall stability of Dynamo has made it the inspiration for open-source copycats just as GFS did. Facebook relies on Cassandra, now an Apache project, which is based on Dynamo. Basho's Riak "NoSQL" database also is derived from the Dynamo architecture.

Microsoft's Azure DFS

When Microsoft launched the Azure platform-as-a-service, it faced a similar set of requirements to those of Amazon—including massive amounts of general-purpose storage. But because it's a PaaS, Azure doesn't expose as much of the infrastructure to its customers as Amazon does with EC2. And the service has the benefit of being purpose-built as a platform to serve cloud customers instead of being built to serve a specific internal mission first.

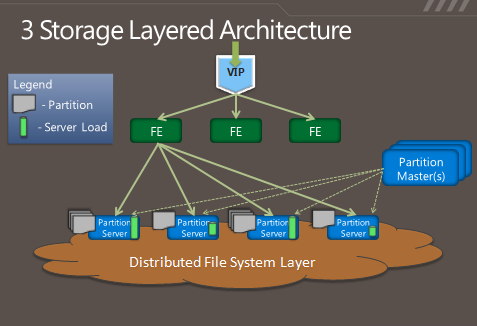

So in some respects, Azure's storage architecture resembles Amazon's—it's designed to handle a variety of sizes of "blobs," tables, and other types of data, and to provide quick access at a granular level. But instead of handling the logical and physical mapping of data at the storage nodes themselves, Azure's storage architecture separates the logical and physical partitioning of data into separate layers of the system. While incoming data requests are routed based on a logical address, or "partition," the distributed file system itself is broken into gigabyte-sized chunks, or "extents." The result is a sort of hybrid of Amazon's and Google's approaches, illustrated in this diagram from Microsoft:

As Microsoft's Brad Calder describes in his overview of Azure's storage architecture, Azure uses a key system similar to that used in Dynamo to identify the location of data. But rather than having the application or service contact storage nodes directly, the request is routed through a front-end layer that keeps a map of data partitions in a role similar to that of HDFS's NameNode. Unlike HDFS, Azure uses multiple front-end servers, load balancing requests across them. The front-end server handles all of the requests from the client application, authenticates each request, and communicates with the next layer down—the partition layer.

Each logical chunk of Azure's storage space is managed by a partition server, which tracks which extents within the underlying DFS hold the data. The partition server handles the reads and writes for its particular set of storage objects. The physical storage of those objects is spread across the DFS's extents, so every partition server has access to all of the extents in the DFS. In addition to buffering the DFS from the front-end servers' read and write requests, the partition servers also cache requested data in memory, so repeated requests can be responded to without having to hit the underlying file system. That boosts performance for small, frequent requests like those used to render a webpage.

All of the metadata for each partition is replicated back to a set of "partition master" servers, providing a backup of the information if a partition server fails—if one goes down, its partitions are passed off to other partition servers dynamically. The partition masters also monitor the workload on each partition server in the Azure storage cluster; if a particular partition server is becoming overloaded, the partition master can dynamically re-assign partitions.
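The load-based reassignment described above might look something like the following Python sketch. The `rebalance` function, the load figures, and the "move the lightest partition to the least-loaded server" rule are all assumptions made for illustration; the source does not say how the partition master actually chooses what to move.

```python
def rebalance(assignments: dict, load: dict, limit: float) -> dict:
    """Move partitions off servers whose total load exceeds `limit`.

    assignments: partition server name -> list of partition names
    load:        partition name -> current load (any consistent unit)
    """
    result = {server: list(parts) for server, parts in assignments.items()}

    def total(server):
        return sum(load[p] for p in result[server])

    for server in list(result):
        while total(server) > limit and len(result[server]) > 1:
            target = min(result, key=total)  # least-loaded server
            if target == server:
                break
            # Hand off the lightest partition to keep each move cheap.
            part = min(result[server], key=lambda p: load[p])
            if total(target) + load[part] > limit:
                break  # nobody has headroom; leave it for a later pass
            result[server].remove(part)
            result[target].append(part)
    return result
```

For example, a server carrying partitions with loads 50, 30, and 10 against a limit of 70 hands its two lightest partitions to an idle peer and keeps the heaviest one.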

Azure is unlike the other big DFS systems in that it more tightly enforces consistency of data writes. Replication of data happens when writes are sent to the DFS, but it's not the lazy sort of replication that is characteristic of GFS and HDFS. Each extent of storage is managed by a primary DFS server and replicated to multiple secondaries; one DFS server may be a primary for a subset of extents and a secondary server for others. When a partition server passes a write request to DFS, it contacts the primary server for the extent the data is being written to, and the primary passes the write to its secondaries. The write is only reported as successful when the data has been replicated successfully to three secondary servers.
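The difference from lazy replication is that the caller is not told "success" until the copies exist. A minimal Python sketch of that rule follows; `ExtentReplica` and `replicated_write` are hypothetical names, and the threshold of three acknowledgments follows the description above.

```python
class ExtentReplica:
    """Toy stand-in for a DFS server holding one copy of an extent."""

    def __init__(self, name, healthy=True):
        self.name = name
        self.healthy = healthy
        self.log = []

    def append(self, record: bytes) -> bool:
        if not self.healthy:
            return False
        self.log.append(record)
        return True


def replicated_write(primary, secondaries, record, required_acks=3):
    """Report success only once enough secondaries have stored the record."""
    if not primary.append(record):
        return False
    # The primary forwards the write and counts acknowledgments.
    acks = sum(1 for s in secondaries if s.append(record))
    # A real system would also have to retry or clean up a partial
    # write; that is omitted from this sketch.
    return acks >= required_acks
```

With three healthy secondaries the write succeeds; if one of them is down, the same call returns `False` even though the other copies were written.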

As with the partition layer, Azure DFS uses load balancing on the physical layer in an attempt to prevent systems from getting jammed with too much I/O. Each partition server monitors the workload on the primary extent servers it accesses; when a primary DFS server starts to red-line, the partition server starts redirecting read requests to secondary servers, and redirecting writes to extents on other servers.

The next level of "distributed"

Distributed file systems are hardly a guarantee of perpetual uptime. In most cases, DFSes replicate only within the same data center because of the amount of bandwidth required to keep replicas in sync. But replication within the data center, for example, doesn't help when the whole data center gets taken offline or a backup network switch fails to kick in when the primary fails. In August, Microsoft and Amazon both had data centers in Dublin taken offline by a transformer explosion—which created a spike that kept backup generators from starting.

Systems that are lazier about replication, such as GFS and Hadoop, can asynchronously handle replication between two data centers; for example, using "rack awareness," Hadoop clusters can be configured to point to a DataNode offsite, and metadata can be passed to a remote checkpoint or backup node (at least in theory). But for more dynamic data, that sort of replication can be difficult to manage.

That's one of the reasons Microsoft released a feature called "geo-replication" in September. Geo-replication syncs customers' data between two data center locations hundreds of miles apart. Rather than using the tightly coupled replication Microsoft uses within the data center, geo-replication happens asynchronously. Both of the Azure data centers have to be in the same region; for example, data for an application set up through the Azure Portal at the North Central US data center can be replicated to the South Central US.

In Amazon's case, the company does replication across availability zones at a service level rather than down in the Dynamo architecture. While Amazon hasn't published how it handles its own geo-replication, it provides customers with the ability to "snap shot" their EBS storage to a remote S3 data "bucket."

And that's the approach Amazon and Google have generally taken in evolving their distributed file systems: making the fixes in the services based on them, rather than in the underlying architecture. While Google has added a distributed master system to GFS and made other tweaks to accommodate its ever-growing data flows, the fundamental architecture of Google's system is still very much like it was in 2003.

But in the long term, the file systems themselves may become more focused on being an archive of data than something applications touch directly. In an interview with Ars, database pioneer (and founder of VoltDB) Michael Stonebraker said that as data volumes continue to go up for "big data" applications, server memory is becoming "the new disk" and file systems are becoming where the log for application activity gets stored—"the new tape." As the cloud giants push for more power efficiency and performance from their data centers, they have already moved increasingly toward solid-state drives and larger amounts of system memory.

Tuesday, January 24. 2012

A tale of Apple, the iPhone, and overseas manufacturing

Via CNET

-----

Workers assemble and perform quality control checks on MacBook Pro display enclosures at an Apple supplier facility in Shanghai.

(Credit: Apple)

A new report on Apple offers up an interesting detail about the evolution of the iPhone and gives a fascinating--and unsettling--look at the practice of overseas manufacturing.

The article, an in-depth report by Charles Duhigg and Keith Bradsher of The New York Times, is based on interviews with, among others, "more than three dozen current and former Apple employees and contractors--many of whom requested anonymity to protect their jobs."

The piece uses Apple and its recent history to look at why the success of some U.S. firms hasn't led to more U.S. jobs--and to examine issues regarding the relationship between corporate America and Americans (as well as people overseas). One of the questions it asks is: Why isn't more manufacturing taking place in the U.S.? And Apple's answer--and the answer one might get from many U.S. companies--appears to be that it's simply no longer possible to compete by relying on domestic factories and the ecosystem that surrounds them.

The iPhone detail crops up relatively early in the story, in an anecdote about then-Apple CEO Steve Jobs. And it leads directly into questions about offshore labor practices:

In 2007, a little over a month before the iPhone was scheduled to appear in stores, Mr. Jobs beckoned a handful of lieutenants into an office. For weeks, he had been carrying a prototype of the device in his pocket.

Mr. Jobs angrily held up his iPhone, angling it so everyone could see the dozens of tiny scratches marring its plastic screen, according to someone who attended the meeting. He then pulled his keys from his jeans.

People will carry this phone in their pocket, he said. People also carry their keys in their pocket. "I won't sell a product that gets scratched," he said tensely. The only solution was using unscratchable glass instead. "I want a glass screen, and I want it perfect in six weeks."

A tall order. And another anecdote suggests that Jobs' staff went overseas to fill it--along with other requirements for the top-secret phone project (code-named, the Times says, "Purple 2"):

One former executive described how the company relied upon a Chinese factory to revamp iPhone manufacturing just weeks before the device was due on shelves. Apple had redesigned the iPhone's screen at the last minute, forcing an assembly line overhaul. New screens began arriving at the plant near midnight.

A foreman immediately roused 8,000 workers inside the company's dormitories, according to the executive. Each employee was given a biscuit and a cup of tea, guided to a workstation and within half an hour started a 12-hour shift fitting glass screens into beveled frames. Within 96 hours, the plant was producing more than 10,000 iPhones a day.

"The speed and flexibility is breathtaking," the executive said. "There's no American plant that can match that."

That last quote there, like several others in the story, leaves one feeling almost impressed by the no-holds-barred capabilities of these manufacturing plants--impressed and queasy at the same time. Here's another quote, from Jennifer Rigoni, Apple's worldwide supply demand manager until 2010: "They could hire 3,000 people overnight," she says, speaking of Foxconn City, Foxconn Technology's complex of factories in China. "What U.S. plant can find 3,000 people overnight and convince them to live in dorms?"

The article says that cheap and willing labor was indeed a factor in Apple's decision, in the early 2000s, to follow most other electronics companies in moving manufacturing overseas. But, it says, supply chain management, production speed, and flexibility were bigger incentives.

"The entire supply chain is in China now," the article quotes a former high-ranking Apple executive as saying. "You need a thousand rubber gaskets? That's the factory next door. You need a million screws? That factory is a block away. You need that screw made a little bit different? It will take three hours."

It also makes the point that other factors come into play. Apple analysts, the Times piece reports, had estimated that in the U.S., it would take the company as long as nine months to find the 8,700 industrial engineers it would need to oversee workers assembling the iPhone. In China it wound up taking 15 days.

The article and its sources paint a vivid picture of how much easier it is for companies to get things made overseas (which is why so many U.S. firms go that route--Apple is by no means alone in this). But the underlying humanitarian issues nag at the reader.

Perhaps there's hope--at least for overseas workers--in last week's news that Apple has joined the Fair Labor Association, and that it will be providing more transparency when it comes to the making of its products.

As for manufacturing returning to the U.S.? The Times piece cites an unnamed guest at President Obama's 2011 dinner with Silicon Valley bigwigs. Obama had asked Steve Jobs what it would take to produce the iPhone in the States, and why that work couldn't return. The Times' source quotes Jobs as having said, in no uncertain terms, "Those jobs aren't coming back."

Apple, by the way, would not provide a comment to the Times about the article. And Foxconn disputed the story about employees being awakened at midnight to work on the iPhone, saying strict regulations about working hours would have made such a thing impossible.

Monday, January 23. 2012

Quantum physics enables perfectly secure cloud computing

Via eurekalert

-----

Researchers have succeeded in combining the power of quantum computing with the security of quantum cryptography and have shown that perfectly secure cloud computing can be achieved using the principles of quantum mechanics. They have performed an experimental demonstration of quantum computation in which the input, the data processing, and the output remain unknown to the quantum computer. The international team of scientists will publish the results of the experiment, carried out at the Vienna Center for Quantum Science and Technology (VCQ) at the University of Vienna and the Institute for Quantum Optics and Quantum Information (IQOQI), in the forthcoming issue of Science.

Quantum computers are expected to play an important role in future information processing since they can outperform classical computers at many tasks. Considering the challenges inherent in building quantum devices, it is conceivable that future quantum computing capabilities will exist only in a few specialized facilities around the world – much like today's supercomputers. Users would then interact with those specialized facilities in order to outsource their quantum computations. The scenario follows the current trend of cloud computing: central remote servers are used to store and process data – everything is done in the "cloud." The obvious challenge is to make globalized computing safe and ensure that users' data stays private.

The latest research, to appear in Science, reveals that quantum computers can provide an answer to that challenge. "Quantum physics solves one of the key challenges in distributed computing. It can preserve data privacy when users interact with remote computing centers," says Stefanie Barz, lead author of the study. This newly established fundamental advantage of quantum computers enables the delegation of a quantum computation from a user who does not hold any quantum computational power to a quantum server, while guaranteeing that the user's data remain perfectly private. The quantum server performs calculations, but has no means to find out what it is doing – a functionality not known to be achievable in the classical world.

The scientists in the Vienna research group have demonstrated the concept of "blind quantum computing" in an experiment: they performed the first known quantum computation during which the user's data stayed perfectly encrypted. The experimental demonstration uses photons, or "light particles," to encode the data. Photonic systems are well-suited to the task because quantum computation operations can be performed on them, and they can be transmitted over long distances.

The process works in the following manner. The user prepares qubits – the fundamental units of quantum computers – in a state known only to himself and sends these qubits to the quantum computer. The quantum computer entangles the qubits according to a standard scheme. The actual computation is measurement-based: the processing of quantum information is implemented by simple measurements on qubits. The user tailors measurement instructions to the particular state of each qubit and sends them to the quantum server. Finally, the results of the computation are sent back to the user, who can interpret and use them. Even if the quantum computer or an eavesdropper tries to read the qubits, they gain no useful information without knowing the initial state; they are "blind."

The research at the Vienna Center for Quantum Science and Technology (VCQ) at the University of Vienna and at the Institute for Quantum Optics and Quantum Information (IQOQI) of the Austrian Academy of Sciences was undertaken in collaboration with the scientists who originally invented the protocol, based at the University of Edinburgh, the Institute for Quantum Computing (University of Waterloo), the Centre for Quantum Technologies (National University of Singapore), and University College Dublin.

Publication: "Demonstration of Blind Quantum Computing" Stefanie Barz, Elham Kashefi, Anne Broadbent, Joseph Fitzsimons, Anton Zeilinger, Philip Walther. DOI: 10.1126/science.1214707

Tuesday, January 17. 2012

HTML bringing to us old boot sessions

For those of us who think the past was better... or to show newcomers that, some time ago, a computer was not supposed to be switched on all the time!
