Tuesday, December 18. 2012

Depixelizing pixel art

-----

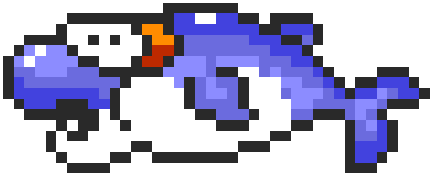

Naïve upsampling of pixel art images leads to unsatisfactory results. Our algorithm extracts a smooth, resolution-independent vector representation from the image, which is suitable for high-resolution display devices (Image © Nintendo Co., Ltd.).

Abstract

We describe a novel algorithm for extracting a resolution-independent vector representation from pixel art images, which enables magnifying the results by an arbitrary amount without image degradation. Our algorithm resolves pixel-scale features in the input and converts them into regions with smoothly varying shading that are crisply separated by piecewise-smooth contour curves. In the original image, pixels are represented on a square pixel lattice, where diagonal neighbors are only connected through a single point. This causes thin features to become visually disconnected under magnification by conventional means, and it causes connectedness and separation of diagonal neighbors to be ambiguous. The key to our algorithm is in resolving these ambiguities. This enables us to reshape the pixel cells so that neighboring pixels belonging to the same feature are connected through edges, thereby preserving the feature connectivity under magnification. We reduce pixel aliasing artifacts and improve smoothness by fitting spline curves to contours in the image and optimizing their control points.
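To make the diagonal ambiguity concrete, here is a minimal Python sketch. It is not the paper's implementation. It assumes that two pixels count as "similar" only when their color values are exactly equal, and the function names `similarity_edges` and `ambiguous_blocks` are hypothetical. It links each pixel to its same-colored 8-neighbors, then reports the 2x2 blocks in which both diagonals connect same-colored pixels of two different colors, which is the situation the abstract calls ambiguous. How those ambiguities are resolved is not stated in the abstract, so the sketch only detects them.

```python
from typing import List, Set, Tuple

Pixel = Tuple[int, int]          # (row, col)
Edge = Tuple[Pixel, Pixel]       # undirected edge, stored once


def similarity_edges(img: List[List[int]]) -> Set[Edge]:
    """Link every pixel to each 8-neighbor that has the same color.

    Assumption: "similar" means exactly equal color values.
    """
    h, w = len(img), len(img[0])
    edges: Set[Edge] = set()
    for r in range(h):
        for c in range(w):
            # Only look "forward" so each undirected edge is stored once.
            for dr, dc in ((0, 1), (1, -1), (1, 0), (1, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < h and 0 <= cc < w and img[r][c] == img[rr][cc]:
                    edges.add(((r, c), (rr, cc)))
    return edges


def ambiguous_blocks(img: List[List[int]]) -> List[Pixel]:
    """Return top-left corners of 2x2 blocks whose two diagonals cross.

    A block is ambiguous when each diagonal joins two same-colored
    pixels but the two diagonals have different colors, so it is
    unclear which diagonal pair should stay connected.
    """
    h, w = len(img), len(img[0])
    found: List[Pixel] = []
    for r in range(h - 1):
        for c in range(w - 1):
            top_left, top_right = img[r][c], img[r][c + 1]
            bottom_left, bottom_right = img[r + 1][c], img[r + 1][c + 1]
            if (top_left == bottom_right
                    and top_right == bottom_left
                    and top_left != top_right):
                found.append((r, c))
    return found


# A one-pixel-wide diagonal line on a uniform background.
diagonal_line = [
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 1],
]
crossings = ambiguous_blocks(diagonal_line)
```

In `diagonal_line`, the thin line and the background both form diagonal links inside the same 2x2 blocks. Under conventional magnification the line would break apart at exactly those blocks, because its pixels touch only at a corner point.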

Paper


Full Resolution (4.16 MB)

Supplementary Material


Wednesday, December 12. 2012

Why the world is arguing over who runs the internet

Via NewScientist

-----

The ethos of freedom from control that underpins the web is facing its first serious test, says Wendy M. Grossman

WHO runs the internet? For the past 30 years, pretty much no one. Some governments might call this a bug, but to the engineers who designed the protocols, standards, naming and numbering systems of the internet, it's a feature.

The goal was to build a network that could withstand damage and would enable the sharing of information. In that, they clearly succeeded - hence the oft-repeated line from John Gilmore, founder of digital rights group Electronic Frontier Foundation: "The internet interprets censorship as damage and routes around it." These pioneers also created a robust platform on which a guy in a dorm room could build a business that serves a billion people.

But perhaps not for much longer. This week, 2000 people have gathered for the World Conference on International Telecommunications (WCIT) in Dubai in the United Arab Emirates to discuss, in part, whether they should be in charge.

The stated goal of the Dubai meeting is to update the obscure International Telecommunication Regulations (ITRs), last revised in 1988. These relate to the way international telecom providers operate. In charge of this process is the International Telecommunication Union (ITU), an agency set up in 1865 with the advent of the telegraph. Its $200 million annual budget is mainly funded by membership fees from 193 countries and about 700 companies. Civil society groups are only represented if their governments choose to include them in their delegations. Some do, some don't. This is part of the controversy: the WCIT is effectively a closed shop.

Vinton Cerf, Google's chief internet evangelist and co-inventor of the TCP/IP internet protocols, wrote in May that decisions in Dubai "have the potential to put government handcuffs on the net".

The need to update the ITRs isn't surprising. Consider what has happened since 1988: the internet, Wi-Fi, broadband, successive generations of mobile telephony, international data centres, cloud computing. In 1988, there were a handful of telephone companies - now there are thousands of relevant providers.

Controversy surrounding the WCIT gathering has been building for months. In May, 30 digital and human rights organisations from all over the world wrote to the ITU with three demands: first, that it publicly release all preparatory documents and proposals; second, that it open the process to civil society; and third, that it ask member states to solicit input from all interested groups at national level. In June, two academics at George Mason University in Virginia - Jerry Brito and Eli Dourado - set up the WCITLeaks site, soliciting copies of the WCIT documents and posting those they received. There were still gaps in late November when .nxt, a consultancy firm and ITU member, broke ranks and posted the lot on its own site.

The issue entered the mainstream when Greenpeace and the International Trade Union Confederation (ITUC) launched the Stop the Net Grab campaign, demanding that the WCIT be opened up to outsiders. At the launch of the campaign on 12 November, Sharan Burrow, general secretary of the ITUC, pledged to fight for as long as it took to ensure an open debate on whether regulation was necessary. "We will stay the distance," she said.

This marks the first time that such large, experienced, international campaigners, whose primary work has nothing to do with the internet, have sought to protect its freedoms. This shows how fundamental a technology the internet has become.

A week later, the European parliament passed a resolution stating that the ITU was "not the appropriate body to assert regulatory authority over either internet governance or internet traffic flows", opposing any efforts to extend the ITU's scope and insisting that its human rights principles took precedence. The US has always argued against regulation.

Efforts by ITU secretary general Hamadoun Touré to spread calm have largely failed. In October, he argued that extending the internet to the two-thirds of the world currently without access required the UN's leadership. Elsewhere, he has repeatedly claimed that the more radical proposals on the table in Dubai would not be passed because they would require consensus.

These proposals raise two key fears for digital rights campaigners. The first concerns censorship and surveillance: some nations, such as Russia, favour regulation as a way to control or monitor content transiting their networks.

The second is financial. Traditional international calls attract settlement fees, which are paid by the operator in the originating country to the operator in the terminating country for completing the call. On the internet, everyone simply pays for their part of the network, and ISPs do not charge to carry each other's traffic. These arrangements underpin network neutrality, the principle that all packets are delivered equally on a "best efforts" basis. Regulation to bring in settlement costs would end today's free-for-all, in which anyone may set up a site without permission. Small wonder that Google is one of the most vocal anti-WCIT campaigners.

How worried should we be? Well, the ITU cannot enforce its decisions, but, as was pointed out at the Stop the Net Grab launch, the system is so thoroughly interconnected that there is plenty of scope for damage if a few countries decide to adopt any new regulatory measures.

This is why so many people want to be represented in a dull, lengthy process run by an organisation that may be outdated to revise regulations that can be safely ignored. If you're not in the room you can't stop the bad stuff.

Wendy M. Grossman is a science writer and the author of net.wars (NYU Press)

Thursday, December 06. 2012

Temporary tattoo as a medical sensor

Via FUTURITY

-----

A medical sensor that attaches to the skin like a temporary tattoo could make it easier for doctors to detect metabolic problems in patients and for coaches to fine-tune athletes’ training routines. And the entire sensor comes in a thin, flexible package shaped like a smiley face. (Credit: University of Toronto)

It looks like a smiley face tattoo, but a new easy-to-apply sensor can detect medical problems and help athletes fine-tune training routines.

“We wanted a design that could conceal the electrodes,” says Vinci Hung, a PhD candidate in physical and environmental sciences at the University of Toronto, who helped create the new sensor. “We also wanted to showcase the variety of designs that can be accomplished with this fabrication technique.”

The tattoo, which is an ion-selective electrode (ISE), is made using a standard screen-printing technique and commercially available transfer tattoo paper, the same kind of paper that usually carries tattoos of Spider-Man or Disney princesses.

In the case of the sensor, the “eyes” function as the working and reference electrodes, and the “ears” are contacts for a measurement device to connect to.

Hung contributed to the work while in the lab of Joseph Wang, a professor at the University of California, San Diego. The sensor she helped make can detect changes in the skin’s pH levels in response to metabolic stress from exertion.

Similar devices are already used by medical researchers and athletic trainers. They can give clues to underlying metabolic diseases such as Addison’s disease, or simply signal whether an athlete is fatigued or dehydrated during training. The devices are also useful in the cosmetics industry for monitoring skin secretions.

But existing devices can be bulky or hard to keep adhered to sweating skin. The new tattoo-based sensors stayed in place during tests and continued to work even when the people wearing them were exercising and sweating extensively.

The tattoos were applied in a similar way to regular transfer tattoos, right down to using a paper towel soaked in warm water to remove the base paper.

To make the sensors, Hung and colleagues used a standard screen printer to lay down consecutive layers of silver, carbon fiber-modified carbon and insulator inks, followed by electropolymerization of aniline to complete the sensing surface.

By using different sensing materials, the tattoos can also be modified to detect other components of sweat, such as sodium, potassium, or magnesium, all of which are of potential interest to researchers in medicine and cosmetology.

An article describing the work has been accepted for publication in the journal Analyst.

Tuesday, December 04. 2012

The Relevance of Algorithms

By Tarleton Gillespie

-----

I’m really excited to share my new essay, “The Relevance of Algorithms,” with those of you who are interested in such things. It’s been a treat to get to think through the issues surrounding algorithms and their place in public culture and knowledge, with some of the participants in Culture Digitally (here’s the full litany: Braun, Gillespie, Striphas, Thomas, the third CD podcast, and Anderson’s post just last week), as well as with panelists and attendees at the recent 4S and AoIR conferences, with colleagues at Microsoft Research, and with all of you who are gravitating towards these issues in your scholarship right now.

The motivation for the essay was twofold: first, in my research on online platforms and their efforts to manage what they deem to be “bad content,” I’m finding an emerging array of algorithmic techniques being deployed: for either locating and removing sex, violence, and other offenses, or (more troublingly) for quietly choreographing some users away from questionable materials while keeping them available for others. Second, I’ve been helping to shepherd along this anthology, and wanted my contribution to be in the spirit of its aims: to take one step back from my research to articulate an emerging issue of concern or theoretical insight that (I hope) will be of value to my colleagues in communication, sociology, science & technology studies, and information science.

The anthology will ideally be out in Fall 2013. And we’re still finalizing the subtitle. So here’s the best citation I have.

Gillespie, Tarleton. “The Relevance of Algorithms.” Forthcoming in Media Technologies, ed. Tarleton Gillespie, Pablo Boczkowski, and Kirsten Foot. Cambridge, MA: MIT Press.

Below is the introduction, to give you a taste.

Algorithms play an increasingly important role in selecting what information is considered most relevant to us, a crucial feature of our participation in public life. Search engines help us navigate massive databases of information, or the entire web. Recommendation algorithms map our preferences against others, suggesting new or forgotten bits of culture for us to encounter. Algorithms manage our interactions on social networking sites, highlighting the news of one friend while excluding another’s. Algorithms designed to calculate what is “hot” or “trending” or “most discussed” skim the cream from the seemingly boundless chatter that’s on offer. Together, these algorithms not only help us find information, they provide a means to know what there is to know and how to know it, to participate in social and political discourse, and to familiarize ourselves with the publics in which we participate. They are now a key logic governing the flows of information on which we depend, with the “power to enable and assign meaningfulness, managing how information is perceived by users, the ‘distribution of the sensible.’” (Langlois 2012)

Algorithms need not be software: in the broadest sense, they are encoded procedures for transforming input data into a desired output, based on specified calculations. The procedures name both a problem and the steps by which it should be solved. Instructions for navigation may be considered an algorithm, or the mathematical formulas required to predict the movement of a celestial body across the sky. “Algorithms do things, and their syntax embodies a command structure to enable this to happen” (Goffey 2008, 17). We might think of computers, then, fundamentally as algorithm machines — designed to store and read data, apply mathematical procedures to it in a controlled fashion, and offer new information as the output.

But as we have embraced computational tools as our primary media of expression, and have made not just mathematics but all information digital, we are subjecting human discourse and knowledge to these procedural logics that undergird all computation. And there are specific implications when we use algorithms to select what is most relevant from a corpus of data composed of traces of our activities, preferences, and expressions.

These algorithms, which I’ll call public relevance algorithms, are — by the very same mathematical procedures — producing and certifying knowledge. The algorithmic assessment of information, then, represents a particular knowledge logic, one built on specific presumptions about what knowledge is and how one should identify its most relevant components. That we are now turning to algorithms to identify what we need to know is as momentous as having relied on credentialed experts, the scientific method, common sense, or the word of God.

What we need is an interrogation of algorithms as a key feature of our information ecosystem (Anderson 2011), and of the cultural forms emerging in their shadows (Striphas 2010), with a close attention to where and in what ways the introduction of algorithms into human knowledge practices may have political ramifications. This essay is a conceptual map to do just that. I will highlight six dimensions of public relevance algorithms that have political valence:

1. Patterns of inclusion: the choices behind what makes it into an index in the first place, what is excluded, and how data is made algorithm ready

2. Cycles of anticipation: the implications of algorithm providers’ attempts to thoroughly know and predict their users, and how the conclusions they draw can matter

3. The evaluation of relevance: the criteria by which algorithms determine what is relevant, how those criteria are obscured from us, and how they enact political choices about appropriate and legitimate knowledge

4. The promise of algorithmic objectivity: the way the technical character of the algorithm is positioned as an assurance of impartiality, and how that claim is maintained in the face of controversy

5. Entanglement with practice: how users reshape their practices to suit the algorithms they depend on, and how they can turn algorithms into terrains for political contest, sometimes even to interrogate the politics of the algorithm itself

6. The production of calculated publics: how the algorithmic presentation of publics back to themselves shapes a public’s sense of itself, and who is best positioned to benefit from that knowledge.

Considering how fast these technologies and the uses to which they are put are changing, this list must be taken as provisional, not exhaustive. But as I see it, these are the most important lines of inquiry into understanding algorithms as emerging tools of public knowledge and discourse.

It would also be seductively easy to get this wrong. In attempting to say something of substance about the way algorithms are shifting our public discourse, we must firmly resist putting the technology in the explanatory driver’s seat. While recent sociological study of the Internet has labored to undo the simplistic technological determinism that plagued earlier work, that determinism remains an alluring analytical stance. A sociological analysis must not conceive of algorithms as abstract, technical achievements, but must unpack the warm human and institutional choices that lie behind these cold mechanisms. I suspect that a more fruitful approach will turn as much to the sociology of knowledge as to the sociology of technology — to see how these tools are called into being by, enlisted as part of, and negotiated around collective efforts to know and be known. This might help reveal that the seemingly solid algorithm is in fact a fragile accomplishment.

~ ~ ~

Here is the full article [PDF]. Please feel free to share it, or point people to this post.
