Entries tagged as hardware

Wednesday, March 18. 2015

First Ubuntu Phone Landing In Europe Shortly

Via TechCrunch

-----

A year after it revealed another attempt to muscle in on the smartphone market, Canonical’s first Ubuntu-based smartphone is due to go on sale in Europe in the “coming days”, it said today. The device will be sold for €169.90 (~$190) unlocked to any carrier network, although some regional European carriers will be offering SIM bundles at the point of purchase. The hardware is an existing mid-tier device, the Aquaris E4.5, made by Spain’s BQ — with the Ubuntu version of the device known as the ‘Aquaris E4.5 Ubuntu Edition’. So the only difference here is it will be pre-loaded with Ubuntu’s mobile software, rather than Google’s Android platform.

Canonical has been trying to get into the mobile space for a while now. Back in 2013, the open source software maker failed to crowdfund a high-end converged smartphone-cum-desktop-computer, called the Ubuntu Edge: a smartphone-sized device that would have been powerful enough to transform from a pocket computer into a fully fledged desktop when plugged into a keyboard and monitor, running Ubuntu’s full-fat desktop OS. Canonical had sought to raise a hefty $32 million in crowdfunds to make that project fly. Hence its more modest, mid-tier smartphone debut now.

On the hardware side, Ubuntu’s first smartphone offers pretty bog standard mid-range specs, with a 4.5 inch screen, 1GB RAM, a quad-core A7 chip running at “up to 1.3Ghz”, 8GB of on-board storage, 8MP rear camera and 5MP front-facing lens, plus a dual-SIM slot. But it’s the mobile software that’s the novelty here (demoed in action in Canonical’s walkthrough video, embedded below).

Canonical has created a gesture-based smartphone interface called Scopes, which puts the homescreen focus on a series of themed cards that aggregate content and which the user swipes between to navigate around the functions of the phone, while app icons are tucked away to the side of the screen, or gathered together on a single Scope card. Examples include a contextual ‘Today’ card which contains info like weather and calendar, or a ‘Nearby’ card for location-specific local services, or a card for accessing ‘Music’ content on the device, or ‘News’ for accessing various articles in one place.

It’s certainly a different approach to the default grid of apps found on iOS and Android but has some overlap with other, alternative platforms such as Palm’s WebOS, or the rebooted BlackBerry OS, or Jolla’s Sailfish. The problem is, as with all such smaller OSes, it will be an uphill battle for Canonical to attract developers to build content for its platform to make it really live and breathe. (It’s got a few third parties offering content at launch — including Songkick, The Weather Channel and TimeOut.) And, crucially, a huge challenge to convince consumers to try something different which requires they learn new mobile tricks. Especially given that people can’t try before they buy — as the device will be sold online only.

Canonical said the Aquaris E4.5 Ubuntu Edition will be made available in a series of flash sales over the coming weeks, via BQ.com. Sales will be announced through Ubuntu and BQ’s social media channels, perhaps taking a leaf out of the retail strategy of Android smartphone maker Xiaomi, which uses online flash sales to hype device launches and shift inventory quickly. Building similar hype in a mature smartphone market like Europe, for mid-tier hardware, is going to be a Sisyphean task. But Canonical claims to be in it for the long, uphill haul.

“We are going for the mass market,” Cristian Parrino, its VP of Mobile, told Engadget. “But that’s a gradual process and a thoughtful process. That’s something we’re going to be doing intelligently over time — but we’ll get there.”

Monday, February 02. 2015

ConnectX wants to put server farms in space

Via Geek

-----

The next generation of cloud servers might be deployed where the clouds can be made of alcohol and cosmic dust: in space. That’s what ConnectX wants to do with their new data visualization platform.

Why space? It’s not as though there isn’t room to set up servers here on Earth, what with Germans willing to give up space in their utility rooms in exchange for a bit of ambient heat and malls now leasing empty storefronts to service providers. But there are certain advantages.

The desire to install servers where there’s abundant, free cooling makes plenty of sense. Down here on Earth, that’s what’s driven companies like Facebook to set up shop in Scandinavia near the edge of the Arctic Circle. Space gets a whole lot colder than the Arctic, so from that standpoint the ConnectX plan makes plenty of sense. There’s also virtually no humidity, which can wreak havoc on computers.

They also believe that the zero-g environment would do wonders for the lifespan of the hard drives in their servers, since it could reduce the resistance they encounter while spinning. That’s the same reason Western Digital started filling hard drives with helium.

But what about data transmission? How does ConnectX plan on moving the bits back and forth between their orbital servers and networks back on the ground? Though something similar to NASA’s Lunar Laser Communication Demonstration — which beamed data to the moon 4,800 times faster than any RF system ever managed — seems like a decent option, they’re leaning on RF.

Mind you, it’s a fairly complex setup. ConnectX says they’re polishing a system that “twists” signals to reap massive transmission gains. A similar system demonstrated last year managed to push data over radio waves at a staggering 32 Gbps, around 30 times faster than LTE.

So ConnectX seems to have that sorted. The only real question is the cost of deployment. Can the potential reduction in long-term maintenance costs really offset the massive expense of actually getting their servers into orbit? And what about upgrading capacity? It’s certainly not going to be nearly as fast, easy, or cheap as it is to do on Earth. That’s up to ConnectX to figure out, and they seem confident that they can make it work.

Wednesday, November 05. 2014

Researchers bridge air gap by turning monitors into FM radios

Via ars technica

-----

A two-stage attack could allow spies to sneak secrets out of the most sensitive buildings, even when the targeted computer system is not connected to any network, researchers from Ben-Gurion University of the Negev in Israel stated in an academic paper describing the refinement of an existing attack.

The technique, called AirHopper, assumes that an attacker has already compromised the targeted system and desires to occasionally sneak out sensitive or classified data. Known as exfiltration, such occasional communication is difficult to maintain, because government technologists frequently separate the most sensitive systems from the public Internet for security. Known as an air gap, such a defensive measure makes it much more difficult for attackers to compromise systems or communicate with infected systems.

Yet, by using a program to create a radio signal using a computer’s video card—a technique known for more than a decade—and a smartphone capable of receiving FM signals, an attacker could collect data from air-gapped devices, a group of four researchers wrote in a paper presented last week at the IEEE 9th International Conference on Malicious and Unwanted Software (MALCON).

“Such technique can be used potentially by people and organizations with malicious intentions and we want to start a discussion on how to mitigate this newly presented risk,” Dudu Mimran, chief technology officer for the cyber security labs at Ben-Gurion University, said in a statement.

For the most part, the attack is a refinement of existing techniques. Intelligence agencies have long known—since at least 1985—that electromagnetic signals could be intercepted from computer monitors to reconstitute the information being displayed. Open-source projects have turned monitors into radio-frequency transmitters. And, from the information leaked by former contractor Edward Snowden, the National Security Agency appears to use radio-frequency devices implanted in various computer-system components to transmit information and exfiltrate data.

AirHopper uses off-the-shelf components, however, to achieve the same result. By using a smartphone with an FM receiver, the exfiltration technique can grab data from nearby systems and send it to a waiting attacker once the smartphone is again connected to a public network.

“This is the first time that a mobile phone is considered in an attack model as the intended receiver of maliciously crafted radio signals emitted from the screen of the isolated computer,” the group said in its statement on the research.

The technique works at a distance of 1 to 7 meters, but can only send data at very slow rates—less than 60 bytes per second, according to the researchers.
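To put that rate in perspective, here is a minimal back-of-the-envelope sketch in Python. The 60 bytes per second ceiling is the researchers' figure quoted above; the function name and the example payload sizes are illustrative assumptions, not values taken from the paper.

```python
def min_exfiltration_seconds(payload_bytes: int,
                             max_rate_bytes_per_s: float = 60.0) -> float:
    """Lower bound on the time needed to leak a payload.

    The rate is an upper limit ("less than 60 bytes per second"),
    so the result is the best case for the attacker.
    """
    if payload_bytes < 0:
        raise ValueError("payload size cannot be negative")
    if max_rate_bytes_per_s <= 0:
        raise ValueError("rate must be positive")
    return payload_bytes / max_rate_bytes_per_s


# Hypothetical payloads, chosen only for illustration.
key_seconds = min_exfiltration_seconds(512)           # a 4096-bit key
doc_hours = min_exfiltration_seconds(1_000_000) / 3600  # a 1 MB document
```

Under these assumptions a short secret such as a key or password leaks in seconds, while a 1 MB document would tie up the channel for more than four and a half hours, which fits the researchers' framing of AirHopper as a way to occasionally sneak out small amounts of data.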

Friday, September 05. 2014

The Internet of Things is here and there -- but not everywhere yet

Via PCWorld

-----

The Internet of Things is still too hard. Even some of its biggest backers say so.

For all the long-term optimism at the M2M Evolution conference this week in Las Vegas, many vendors and analysts are starkly realistic about how far the vaunted set of technologies for connected objects still has to go. IoT is already saving money for some enterprises and boosting revenue for others, but it hasn’t hit the mainstream yet. That’s partly because it’s too complicated to deploy, some say.

For now, implementations, market growth and standards are mostly concentrated in specific sectors, according to several participants at the conference who would love to see IoT span the world.

Cisco Systems has estimated IoT will generate $14.4 trillion in economic value between last year and 2022. But Kevin Shatzkamer, a distinguished systems architect at Cisco, called IoT a misnomer, for now.

“I think we’re pretty far from envisioning this as an Internet,” Shatzkamer said. “Today, what we have is lots of sets of intranets.” Within enterprises, it’s mostly individual business units deploying IoT, in a pattern that echoes the adoption of cloud computing, he said.

In the past, most of the networked machines in factories, energy grids and other settings have been linked using custom-built, often local networks based on proprietary technologies. IoT links those connected machines to the Internet and lets organizations combine those data streams with others. It’s also expected to foster an industry that’s more like the Internet, with horizontal layers of technology and multivendor ecosystems of products.

What’s holding back the Internet of Things

The good news is that cities, utilities, and companies are getting more familiar with IoT and looking to use it. The less good news is that they’re talking about limited IoT rollouts for specific purposes.

“You can’t sell a platform, because a platform doesn’t solve a problem. A vertical solution solves a problem,” Shatzkamer said. “We’re stuck at this impasse of working toward the horizontal while building the vertical.”

“We’re no longer able to just go in and sort of bluff our way through a technology discussion of what’s possible,” said Rick Lisa, Intel’s group sales director for Global M2M. “They want to know what you can do for me today that solves a problem.”

One of the most cited examples of IoT’s potential is the so-called connected city, where myriad sensors and cameras will track the movement of people and resources and generate data to make everything run more efficiently and openly. But now, the key is to get one municipal project up and running to prove it can be done, Lisa said.

ThroughTek, based in China, used a connected fan to demonstrate an Internet of Things device management system at the M2M Evolution conference in Las Vegas this week.

The conference drew stories of many successful projects: A system for tracking construction gear has caught numerous workers on camera walking off with equipment and led to prosecutions. Sensors in taxis detect unsafe driving maneuvers and alert the driver with a tone and a seat vibration, then report it to the taxi company. Major League Baseball is collecting gigabytes of data about every moment in a game, providing more information for fans and teams.

But for the mass market of small and medium-size enterprises that don’t have the resources to do a lot of custom development, even targeted IoT rollouts are too daunting, said analyst James Brehm, founder of James Brehm & Associates.

There are software platforms that pave over some of the complexity of making various devices and applications talk to each other, such as the Omega DevCloud, which RacoWireless introduced on Tuesday. The DevCloud lets developers write applications in the language they know and make those apps work on almost any type of device in the field, RacoWireless said. Thingworx, Xively and Gemalto also offer software platforms that do some of the work for users. But the various platforms on offer from IoT specialist companies are still too fragmented for most customers, Brehm said. There are too many types of platforms—for device activation, device management, application development, and more. “The solutions are too complex.”

He thinks that’s holding back the industry’s growth. Though the past few years have seen rapid adoption in certain industries in certain countries, sometimes promoted by governments—energy in the U.K., transportation in Brazil, security cameras in China—the IoT industry as a whole is only growing by about 35 percent per year, Brehm estimates. That’s a healthy pace, but not the steep “hockey stick” growth that has made other Internet-driven technologies ubiquitous, he said.
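As a rough illustration of what 35 percent a year amounts to, the Python sketch below compounds Brehm's estimate. The function names are hypothetical, and the rate is assumed to stay constant from year to year, which the article does not claim.

```python
import math


def growth_multiple(annual_rate: float, years: float) -> float:
    """Size of a market relative to today after compounding for `years`."""
    return (1.0 + annual_rate) ** years


def doubling_time_years(annual_rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    if annual_rate <= 0:
        raise ValueError("rate must be positive")
    return math.log(2.0) / math.log(1.0 + annual_rate)


five_year_multiple = growth_multiple(0.35, 5)
years_to_double = doubling_time_years(0.35)
```

At a steady 35 percent, the industry would double roughly every 2.3 years and reach about 4.5 times its current size in five years: healthy compounding, but a smooth curve rather than a sudden bend.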

What lies ahead

Brehm thinks IoT is in a period where customers are waiting for more complete toolkits to implement it—essentially off-the-shelf products—and the industry hasn’t consolidated enough to deliver them. More companies have to merge, and it’s not clear when that will happen, he said.

“I thought we’d be out of it by now,” Brehm said. What’s hard about consolidation is partly what’s hard about adoption, in that IoT is a complex set of technologies, he said.

And don’t count on industry standards to simplify everything. IoT’s scope is so broad that there’s no way one standard could define any part of it, analysts said. The industry is evolving too quickly for traditional standards processes, which are often mired in industry politics, to keep up, according to Andy Castonguay, an analyst at IoT research firm Machina.

Instead, individual industries will set their own standards while software platforms such as Omega DevCloud help to solve the broader fragmentation, Castonguay believes. Even the Industrial Internet Consortium, formed earlier this year to bring some coherence to IoT for conservative industries such as energy and aviation, plans to work with existing standards from specific industries rather than write its own.

Ryan Martin, an analyst at 451 Research, compared IoT standards to human languages.

“I’d be hard pressed to say we are going to have one universal language that everyone in the world can speak,” and even if there were one, most people would also speak a more local language, Martin said.

Friday, August 08. 2014

IBM Builds a Chip That Works Like Your Brain

Via re/code

-----

Can a silicon chip act like a human brain? Researchers at IBM say they’ve built one that mimics the brain better than any that has come before it.

In a paper published in the journal Science today, IBM said it used conventional silicon manufacturing techniques to create what it calls a neurosynaptic processor that could rival a traditional supercomputer by handling highly complex computations while consuming no more power than that supplied by a typical hearing aid battery.

The chip is also one of the biggest ever built, boasting some 5.4 billion transistors, which is about a billion more than the number of transistors on an Intel Xeon chip.

To do this, researchers designed the chip with a mesh network of 4,096 neurosynaptic cores. Each core contains elements that handle computing, memory and communicating with other parts of the chip. Each core operates in parallel with the others.

Multiple chips can be connected together seamlessly, IBM says, and they could be used to create a neurosynaptic supercomputer. The company even went so far as to build one using 16 of the chips.

The new design could shake up the conventional approach to computing, which has been more or less unchanged since the 1940s and is known as the Von Neumann architecture. In English, a Von Neumann computer — you’re using one right now — stores the data for a program in memory.

This chip, which has been dubbed TrueNorth, relies on its network of neurons to detect and recognize patterns in much the same way the human brain does. If you’ve read your Ray Kurzweil, this is one way to understand how the brain works — recognizing patterns. Put simply, once your brain knows the patterns associated with different parts of letters, it can string them together in order to recognize words and sentences. If Kurzweil is correct, you’re doing this right now, using some 300 million pattern-recognizing circuits in your brain’s neocortex.

The chip would seem to represent a breakthrough in one of the long-term problems in computing: Computers are really good at doing math and reading words, but discerning and understanding meaning and context, or recognizing and classifying objects — things that are easy for humans — have been difficult for traditional computers. One way IBM tested the chip was to see if it could detect people, cars, trucks and buses in video footage and correctly recognize them. It worked.

In terms of complexity, the TrueNorth chip has a million neurons, which is about the same number as in the brain of a common honeybee. A typical human brain averages 100 billion. But given time, the technology could be used to build computers that can not only see and hear, but understand what is going on around them.
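The scale figures quoted in this article can be combined with some simple arithmetic. The Python sketch below uses only the article's rounded numbers (4,096 cores, a million neurons per chip, 100 billion neurons in a human brain, a 16-chip system); the constant and function names are hypothetical, and neuron count alone is of course a crude proxy for capability.

```python
import math

CORES_PER_CHIP = 4_096
NEURONS_PER_CHIP = 1_000_000            # the article's rounded figure
HUMAN_BRAIN_NEURONS = 100_000_000_000   # "averages 100 billion"


def neurons_per_core() -> float:
    """Average number of neurons handled by each neurosynaptic core."""
    return NEURONS_PER_CHIP / CORES_PER_CHIP


def chips_needed(target_neurons: int) -> int:
    """Chips required to reach a given neuron count, rounded up."""
    return math.ceil(target_neurons / NEURONS_PER_CHIP)


per_core = neurons_per_core()
sixteen_chip_neurons = 16 * NEURONS_PER_CHIP
chips_for_human_scale = chips_needed(HUMAN_BRAIN_NEURONS)
```

With these rounded inputs, each core handles a few hundred neurons, the 16-chip system mentioned above reaches 16 million, and matching the neuron count of a human brain would take on the order of 100,000 chips.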

Currently, the chip is capable of 46 billion synaptic operations per second per watt, or SOPS. That’s a tricky apples-to-oranges comparison to a traditional supercomputer, where performance is measured in the number of floating point operations per second, or FLOPS. But the most energy-efficient supercomputer now running tops out at 4.5 billion FLOPS per watt.

Down the road, the researchers say in their paper, they foresee TrueNorth-like chips being combined with traditional systems, each solving problems it is best suited to handle. But it also means that systems that in some ways will rival the capabilities of current supercomputers will fit into a machine the size of your smartphone, while consuming even less energy.

The project was funded with money from DARPA, the Department of Defense’s research organization. IBM collaborated with researchers at Cornell Tech and iniLabs.

Tuesday, July 15. 2014

This Band Of Small Robots Could Build Entire Skyscrapers Without Human Help

Via Fast Company

-----

Even though most buildings are designed using the latest digital tools, actual construction is stuck in the past; building is messy, slow, and inefficient. 3-D printing might change that, but recent projects like these printed houses in China demonstrate one of the technical challenges--the equipment itself has to be gigantic, because it can’t work unless it’s bigger than the building itself.

A team of researchers from the Institute for Advanced Architecture of Catalonia is working on another solution: A swarm of tiny robots that could cover the construction site of the future, quickly and cheaply building greener buildings of any size.

The robots work in teams to squirt out material that hardens into the shell of the building. Foundation robots move in a track, building up the first 20 layers of the structure, and then a series of "grip" robots clamp on the top or sides adding more layers, ceilings, and frames for windows or doors. Vacuum robots attach on at the end to add a layer to reinforce everything.

Other 3-D-printed architecture requires frames, which have to fit entirely around a building, or robotic arms that can only reach as high as themselves. “If you want to make an object as big as a stadium or a skyscraper you’ll need to design a machine bigger than that object in at least one axis,” explain researchers Petr Novikov and Sasa Jokic. “Making such machines isn’t economically reasonable, sustainable, and, in some cases, simply impossible due to their size.”

The Minibuilders could in theory build anything. “The robots can work simultaneously while performing different tasks, and having a fixed size they can create objects of virtually any scale, as far as material properties permit,” say Novikov and Jokic. “They are extremely easy to transport to the site. All these features make them incredibly efficient and reduce environmental footprint of construction.”

Because the technology wouldn't require custom molds or even support structures, there would be zero construction waste. It could also save materials by printing a little extra only in places where the building needs more support.

Eventually, the designers see the robots being used to take care of pretty much every conceivable construction task. "They are an ecology of small construction robots, and this ecology can be extended far beyond 3-D printing," the researchers say. "In the future, we envision robots that do also painting, piping, and variety of other tasks."

Novikov and Jokic, along with fellow researchers Shihui Jin, Stuart Maggs, Cristina Nan, and Dori Sadan, are sharing their design plans to encourage others to build on them.

Monday, July 14. 2014

Inside Shapeways, the 3D-Printing Factory of the Future

Via Gizmodo

-----

When you walk into the Shapeways headquarters in a sprawling New York City warehouse building, it doesn't feel like a factory. It's something different, somehow unforgettable, inevitably new. As it should be. This is one of the world's first full service 3D-printing factories, and it's not like any factory I've ever seen.

Founded in the Netherlands in 2007 as a spinoff of Philips electronics, Shapeways is a truly unique and delightfully simple service. If you want an object 3D-printed, all you have to do is upload the design's CAD file to Shapeways' website, pay a fee that mostly just covers the cost of materials, and then wait. In a few days, Shapeways will send the 3D-printed object to you, nicely bubble-wrapped and ready for use. It's effectively an on-demand manufacturing service, a factory at your fingertips in a way that's wonderfully futuristic.

Aside from the windows that look on to the factory floor, Shapeways HQ looks just like any other start-up office. Colorful chairs surround laptop-littered desks. Employees drinking seltzer linger around a long lunch table in the back. It's oddly quiet, and everything is coated in a fine layer of white dust, the cast-off material that didn't quite make it into an object of its own.

If you didn't know any better, you'd think it was some sort of art studio littered with hulking machines, perhaps for firing pottery or something. In fact, each of these closet-sized machines costs upwards of $1 million and can 3D print about 100 objects at a time. Shapeways names all of them after old women because they require lots of care. The entire cast of Golden Girls is represented.

There's actually not much to see inside the machines. A small window offers a peek into the actual printing area, an unassuming expanse of white powder that lights up every few seconds. Shapeways uses selective laser sintering (SLS) printers that enable them to print many objects at once and produce higher-quality products than some other additive manufacturing techniques.

That white powder lingering everywhere is the raw material for a 3D-printed object. The box lights up because a series of lasers are actually sintering the plastic in specific spots, as dictated by the design. An arm then moves over the surface, adding another layer of powder. Over the course of several hours, the sintered plastic becomes an object that's supported by the excess powder. The process looks almost surgical if you're not familiar with the specifics of exactly what's going on.

But, the printers don't just spit out objects ready to go. The finished product is actually a large white cube that's carefully moved from the machine to a nearby cooling rack. After all, it was just blasted with a bunch of hot lasers. Eventually, it's up to a human to break apart the cube and find dozens of newly printed objects in the powder. It's almost like digging for dinosaur bones. As Shapeways' Savannah Peterson explained to me, "You feel like an archaeologist even if you're just watching."

She's right. After I made my way around the factory floor, which is roughly half the size of a basketball court, I got a peek at this process. The guy doing the digging was wearing a protective jump suit and a large ventilator to keep from inhaling the powder. And despite the fact that large plastic curtains contained the breakout room, the powder gets everywhere. Suddenly, the light coating of dust that covers the whole factory made even more sense. By the end of the tour, I looked like a baker covered in flour.

That's about as messy as it gets, though. The rest of the process is remarkably clean and streamlined, yielding some pretty incredible objects made not only out of plastic but also vari. The Shapeways website is full of curiosities, from delicate jewelry that can be printed in sterling silver to physical manifestations of internet memes that are printed in color using a special printer that can handle rainbow hues.

Tuesday, July 08. 2014

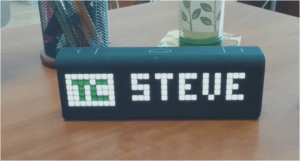

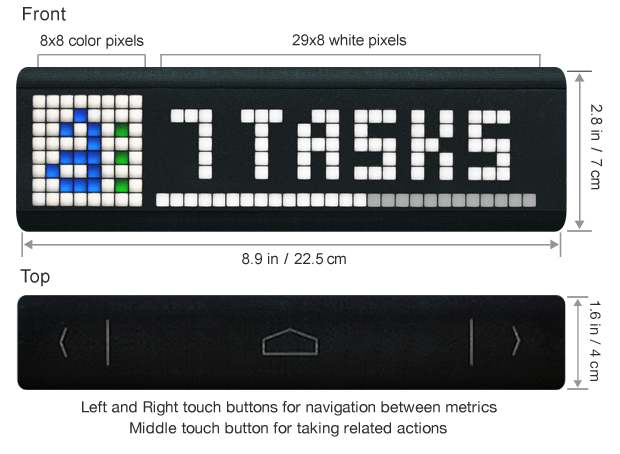

LaMetric Is A Smart And Hackable Ticker Display

Via TechCrunch

-----

Ever since covering Fliike, a beautifully-designed physical ‘Like’ counter for local businesses, I’ve been thinking about how the idea could be extended, with a fully-programmable, but simple, ticker-style Internet-connected display.

A few products along those lines do already exist, but I’ve yet to find anything that quite matches what I had in mind. That is, until recently, when I was introduced to LaMetric, a smart ticker being developed by UK/Ukraine Internet of Things (IoT) startup Smart Atoms.

Launching its Kickstarter crowdfunding campaign today, the LaMetric is aimed at both consumers and businesses. The idea is you may want to display alerts, notifications and other information from your online “life” via an elegant desktop or wall-mountable and glance-able display. Likewise, businesses that want an Internet-connected ticker, displaying various business information, either publicly for customers or in an office, are also a target market.

The device itself has a retro, 8-bit style desktop clock feel to it, thanks to its ‘blocky’ LED light powered display, which is part of its charm. The display can output one icon and seven numbers, and is scrollable.

But, best of all, the LaMetric is fully programmable (or “hackable”) via the accompanying app and comes with a bunch of off-the-shelf widgets, along with support for RSS and services like IFTTT, Smart Things, Wig Wag, and Ninja Blocks, so you can get it talking to other smart devices or web services. Seriously, this thing goes way beyond what I had in mind (try the simulator for yourself) and, for an IoT junkie like me, is just damn cool.

Examples of the kind of things you can track with the device include time, weather, subject and time left till your next meeting, number of new emails and their subject lines, CrossFit timings and fitness goals, number of to-dos for today, stock quotes, and social network notifications.

Or for businesses, this might include Facebook Likes, website visitors, conversions and other metrics, app store rankings, downloads, and revenue.

In addition to the display, the device has back and forward buttons so you can rotate widgets (though these can be set to automatically rotate), as well as an enter key for programmed responses, such as accepting a calendar invitation.

There’s also a loudspeaker for audio alerts. The LaMetric is powered by micro-USB and also comes as an optional and more expensive battery-powered version.

Early-bird backers on Kickstarter can pick up the LaMetric for as little as $89 (plus shipping) for the battery-less version, with countless other options and perks, increasing in price.

Monday, June 23. 2014

Metaio unveils Thermal Touch technology for making user interfaces out of thin air

Via GIGAOM

-----

User interfaces present one of the most interesting quandaries of modern computing: we’ve moved from big monitors and keyboards to touchscreens, but now we’re heading into a world of connected everyday objects and wearable computing — how will we interact with those? Metaio, the German augmented reality outfit, has an idea.

Augmented reality (AR) involves overlaying virtual imagery and information on top of the real world — you may be familiar with the concept of viewing a magazine page through your phone’s camera and seeing a static ad come to life. Metaio has come up with a way of creating a user interface on pretty much any surface, by combining traditional camera-driven AR with thermal imaging.

Essentially, what Metaio is demonstrating with its new “Thermal Touch” interface concept is an alternative to what a touchscreen does when you touch it — there, capacitive sensors know you’ve touched a certain part because they can sense the electrical charge in your finger; here, an infrared camera senses the residual heat left by your finger. So, for example, you could use smart glass to view a virtual chess board on an empty table, then actually play chess on it:

“Our R&D department had a few thermal cameras that they’d just received and kind of on a whim they started playing around,” Metaio spokesman Trak Lord told me. “One researcher noticed that every time he touched something, it left a very visible heat signature imprint.”

To be clear, a normal camera can do a lot of tracking if it has sufficiently powerful brains behind it – some of the theoretical applications shown off by Metaio on Thursday may be partly achievable without yet another sensor for your tablet or smart glass or whatever. But there’s a limit to what normal cameras can do when it comes to tracking interaction with three-dimensional surfaces. As Lord put it, “the thermal camera adds another dimension of understanding. If you have a [normal] camera it’s not as precise. The thermal imaging camera can very clearly see where exactly you’re touching.”
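The idea of reading a touch from residual heat can be sketched in a few lines of Python. This is not Metaio's method, which the article does not describe; it is a hypothetical illustration that assumes the thermal camera delivers frames as grids of temperatures in degrees Celsius and that a touch shows up as a local rise over a previously captured baseline frame. The function name and the 1.0 °C threshold are assumptions.

```python
from typing import List, Optional, Tuple

Frame = List[List[float]]  # temperatures in degrees Celsius, row-major


def find_touch(baseline: Frame, current: Frame,
               min_rise_c: float = 1.0) -> Optional[Tuple[int, int]]:
    """Return (row, col) of the pixel that warmed the most since the
    baseline frame, or None if no pixel rose by at least min_rise_c."""
    best: Optional[Tuple[int, int]] = None
    best_rise = 0.0
    for r, (base_row, cur_row) in enumerate(zip(baseline, current)):
        for c, (base_t, cur_t) in enumerate(zip(base_row, cur_row)):
            rise = cur_t - base_t
            # Keep only rises above the threshold, preferring the largest.
            if rise >= min_rise_c and (best is None or rise > best_rise):
                best, best_rise = (r, c), rise
    return best


# Hypothetical 3x3 frame of a tabletop at room temperature.
baseline = [[22.0] * 3 for _ in range(3)]
touched = [row[:] for row in baseline]
touched[1][2] = 24.5  # residual heat left by a fingertip
touch_position = find_touch(baseline, touched)
```

A real system would also have to map the thermal pixel onto the virtual interface drawn by the normal camera and cope with the heat trace fading over time, neither of which this sketch attempts.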

Metaio has a bunch of fascinating use cases to hand: security keypads that only the user can see; newspaper ads with clickable links; interactive car manuals that show you what you need to know about a component when you touch it. But right now this is just R&D – nobody is putting thermal imaging cameras into their smartphones and wearables just yet, and Lord reckons it will take at least 5 years before this sort of thing comes to market, if it ever does.

For now, this is the equipment needed to realize the concept:

Still, when modern mobile devices are already packing tons of sensors, why not throw in another if it can turn anything into a user interface? Here’s Metaio’s video, showing what Thermal Touch could do:

Monday, April 28. 2014

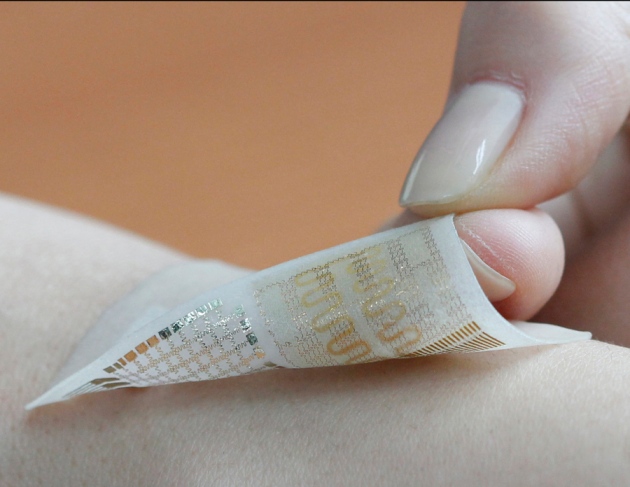

‘Electronic skin’ equipped with memory

Via Nature

-----

Donghee Son and Jongha Lee - Wearable sensors have until now been unable to store data locally.

Researchers have created a wearable device that is as thin as a temporary tattoo and can store and transmit data about a person’s movements, receive diagnostic information and release drugs into skin.

Similar efforts to develop ‘electronic skin’ abound, but the device is the first that can store information and also deliver medicine, combining patient treatment and monitoring. Its creators, who report their findings today in Nature Nanotechnology, say that the technology could one day aid patients with movement disorders such as Parkinson’s disease or epilepsy.

The researchers constructed the device by layering a package of stretchable nanomaterials — sensors that detect temperature and motion, resistive RAM for data storage, microheaters and drugs — onto a material that mimics the softness and flexibility of the skin. The result was a sticky patch containing a device roughly 4 centimetres long, 2 cm wide and 0.3 millimetres thick, says study co-author Nanshu Lu, a mechanical engineer at the University of Texas in Austin.

“The novelty is really in the integration of the memory device,” says Stéphanie Lacour, an engineer at the Swiss Federal Institute of Technology in Lausanne, who was not involved in the work. No other device can store data locally, she adds.

The trade-off for that memory milestone is that the device works only if it is connected to a power supply and data transmitter, both of which need to be made similarly compact and flexible before the prototype can be used routinely in patients. Although some commercially available components, such as lithium batteries and radio-frequency identification tags, can do this work, they are too rigid for the soft-as-skin brand of electronic device, Lu says.

Even if softer components were available, data transmitted wirelessly would need to be converted into a readable digital format, and the signal might need to be amplified. “It’s a pretty complicated system to integrate onto a piece of tattoo material,” she says. “It’s still pretty far away.”
