Entries tagged as desktop

Thursday, February 02. 2012

Introducing the HUD. Say hello to the future of the menu.

-----

The desktop remains central to our everyday work and play, despite all the excitement around tablets, TVs and phones. So it’s exciting for us to innovate on the desktop too, especially when we find ways to enhance the experience of both heavy “power” users and casual users at the same time. The desktop will be with us for a long time, and for those of us who spend hours every day using a wide diversity of applications, here is some very good news: 12.04 LTS will include the first step in a major new approach to application interfaces.

This work grows out of observations of new and established / sophisticated users making extensive use of the broader set of capabilities in their applications. We noticed that both groups of users spent a lot of time, relatively speaking, navigating the menus of their applications, either to learn about the capabilities of the app, or to take a specific action. We were also conscious of the broader theme in Unity design of leading from user intent. And that set us on a course which led to today’s first public milestone on what we expect will be a long, fruitful and exciting journey.

The menu has been a central part of the GUI since Xerox PARC invented ‘em in the ’70s. It’s the M in WIMP and has been there, essentially unchanged, for 30 years.

We can do much better!

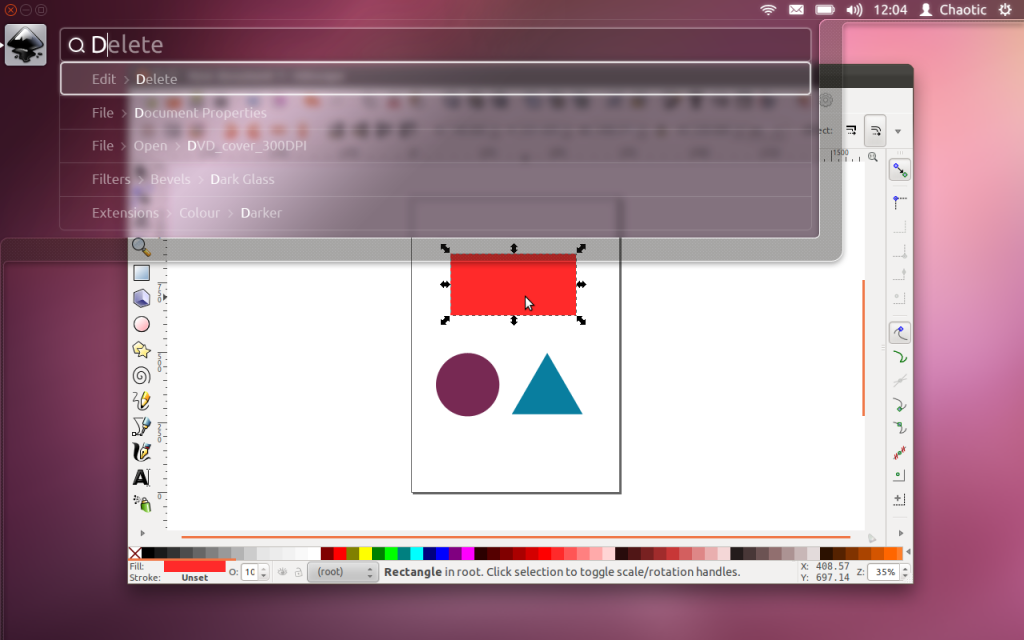

Say hello to the Head-Up Display, or HUD, which will ultimately replace menus in Unity applications. Here’s what we hope you’ll see in 12.04 when you invoke the HUD from any standard Ubuntu app that supports the global menu:

The intenterface – it maps your intent to the interface

This is the HUD. It’s a way for you to express your intent and have the application respond appropriately. We think of it as “beyond interface”, it’s the “intenterface”. This concept of “intent-driven interface” has been a primary theme of our work in the Unity shell, with dash search as a first class experience pioneered in Unity. Now we are bringing the same vision to the application, in a way which is completely compatible with existing applications and menus.

The HUD concept has been the driver for all the work we’ve done in unifying menu systems across Gtk, Qt and other toolkit apps in the past two years. So far, that’s shown up as the global menu. In 12.04, it also gives us the first cut of the HUD.

Menus serve two purposes. They act as a standard way to invoke commands which are too infrequently used to warrant a dedicated piece of UI real-estate, like a toolbar button, and they serve as a map of the app’s functionality, almost like a table of contents that one can scan to get a feel for ‘what the app does’. It’s command invocation that we think can be improved upon, and that’s where we are focusing our design exploration.

As a means of invoking commands, menus have some advantages. They are always in the same place (top of the window or screen). They are organised in a way that’s quite easy to describe over the phone, or in a text book (“click the Edit->Preferences menu”). They are pretty fast to read, since they are generally arranged in tight vertical columns. They also have some disadvantages: when they get nested, navigating the tree can become fragile. They require you to read a lot when you probably already know what you want. They are more difficult to use from the keyboard than they should be, since they generally require you to remember something special (hotkeys) or use a very limited subset of the keyboard (arrow navigation). They force developers to make often arbitrary choices about the menu tree (“should Preferences be in Edit or in Tools or in Options?”), and then they force users to make an equally arbitrary effort to memorise and navigate that tree.

The HUD solves many of these issues, by connecting users directly to what they want. Check out the video, based on a current prototype. It’s a “vocabulary UI”, or VUI, and closer to the way users think. “I told the application to…” is common user paraphrasing for “I clicked the menu to…”. The tree is no longer important, what’s important is the efficiency of the match between what the user says, and the commands we offer up for invocation.

In 12.04 LTS, the HUD is a smart look-ahead search through the app and system (indicator) menus. The image is showing Inkscape, but of course it works everywhere the global menu works. No app modifications are needed to get this level of experience. And you don’t have to adopt the HUD immediately, it’s there if you want it, supplementing the existing menu mechanism.

It’s smart, because it can do things like fuzzy matching, and it can learn what you usually do so it can prioritise the things you use often. It covers the focused app (because that’s where you probably want to act) as well as system functionality; you can change IM state, or go offline in Skype, all through the HUD, without changing focus, because those apps all talk to the indicator system. When you’ve been using it for a little while it seems like it’s reading your mind, in a good way.
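The two ideas in this paragraph, fuzzy matching and learning from past use, can be sketched in a few lines of Python. This is only an illustration and not the HUD's actual code: the `MENU` tree, the `search` function, the `boost` weight and the use of `difflib` for similarity are all assumptions made for the example.

```python
from collections import Counter
from difflib import SequenceMatcher

# Hypothetical menu tree; a real HUD would read this from the global menu.
MENU = {
    "File": ["New", "Open", "Save", "Save As"],
    "Edit": ["Undo", "Redo", "Preferences"],
    "Filters": {"Blur": ["Gaussian Blur", "Motion Blur"]},
}


def flatten(tree, path=()):
    """Yield (label, full_path) for every leaf command in a nested menu."""
    if isinstance(tree, dict):
        for name, sub in tree.items():
            yield from flatten(sub, path + (name,))
    else:
        for label in tree:
            yield label, " > ".join(path + (label,))


def similarity(query, label):
    """Typo-tolerant similarity between 0 and 1."""
    return SequenceMatcher(None, query.lower(), label.lower()).ratio()


def search(query, usage, limit=3, boost=0.05):
    """Rank commands by fuzzy similarity plus a small bonus per past use."""
    ranked = [
        (similarity(query, label) + boost * usage[full], full)
        for label, full in flatten(MENU)
    ]
    # Sort on the score only; the stable sort keeps menu order on ties.
    ranked.sort(key=lambda pair: pair[0], reverse=True)
    return [full for _, full in ranked[:limit]]


usage = Counter()                         # how often each command was invoked
print(search("gausian blur", usage))      # the misspelling still finds the blur filter
usage["File > Save As"] += 10             # the user keeps choosing "Save As"
print(search("save", usage))              # so it now outranks plain "Save"
```

The nesting of the menu never appears in the query: the tree is flattened once, and ranking depends only on how well the typed words match and on what the user has chosen before.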

We’ll resurrect the (boring) old ways of displaying the menu in 12.04, in the app and in the panel. In the past few releases of Ubuntu, we’ve actively diminished the visual presence of menus in anticipation of this landing. That proved controversial. In our defence, in user testing, every user finds the menu in the panel, every time, and it’s obviously a cleaner presentation of the interface. But hiding the menu before we had the replacement was overly aggressive. If the HUD lands in 12.04 LTS, we hope you’ll find yourself using the menu less and less, and be glad to have it hidden when you are not using it. You’ll definitely have that option, alongside more traditional menu styles.

Voice is the natural next step

Searching is fast and familiar, especially once we integrate voice recognition, gesture and touch. We want to make it easy to talk to any application, and for any application to respond to your voice. The full integration of voice into applications will take some time. We can start by mapping voice onto the existing menu structures of your apps. And it will only get better from there.

But even without voice input, the HUD is faster than mousing through a menu, and easier to use than hotkeys since you just have to know what you want, not remember a specific key combination. We can search through everything we know about the menu, including descriptive help text, so pretty soon you will be able to find a menu entry using only vaguely related text (imagine finding an entry called Preferences when you search for “settings”).
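As a rough sketch of the "Preferences found via settings" case, the search can look at each entry's help text as well as its label. The entries and help strings below are invented for illustration, and `find` is a hypothetical helper, not part of any real HUD API.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    label: str
    help_text: str = ""


# Hypothetical menu entries; the help strings are made up for this example.
ENTRIES = [
    Entry("Preferences", "Change application settings and options"),
    Entry("Print", "Send the document to a printer"),
    Entry("Export", "Save a copy in another file format"),
]


def matches(query, entry):
    """True if every query word occurs in the label or the help text."""
    words = query.lower().split()
    if not words:
        return False
    haystack = f"{entry.label} {entry.help_text}".lower()
    return all(word in haystack for word in words)


def find(query):
    """Return matching labels, with direct label hits listed first."""
    hits = [entry for entry in ENTRIES if matches(query, entry)]
    hits.sort(key=lambda entry: query.lower() not in entry.label.lower())
    return [entry.label for entry in hits]


print(find("settings"))  # matches "Preferences" through its help text
```

Plain substring matching is the simplest possible choice here; it could be swapped for fuzzy scoring without changing the idea of widening the searchable text beyond the label.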

There is lots to discover, refine and implement. I have a feeling this will be a lot of fun in the next two years.

Even better for the power user

The results so far are rather interesting: power users say things like “every GUI app now feels as powerful as VIM”. EMACS users just grunt and… nevermind. Another comment was “it works so well that the rare occasions when it can’t read my mind are annoying!”. We’re doing a lot of user testing on heavy multitaskers, developers and all-day-at-the-workstation personas for Unity in 12.04, polishing off loose ends in the experience that frustrated some in this audience in 11.04-10. If that describes you, the results should be delightful. And the HUD should be particularly empowering.


Even casual users find typing faster than mousing. So while there are modes of interaction where it’s nice to sit back and drive around with the mouse, we observe people staying more engaged and more focused on their task when they can keep their hands on the keyboard all the time. Hotkeys are a sort of mental gymnastics, the HUD is a continuation of mental flow.

Ahead of the competition

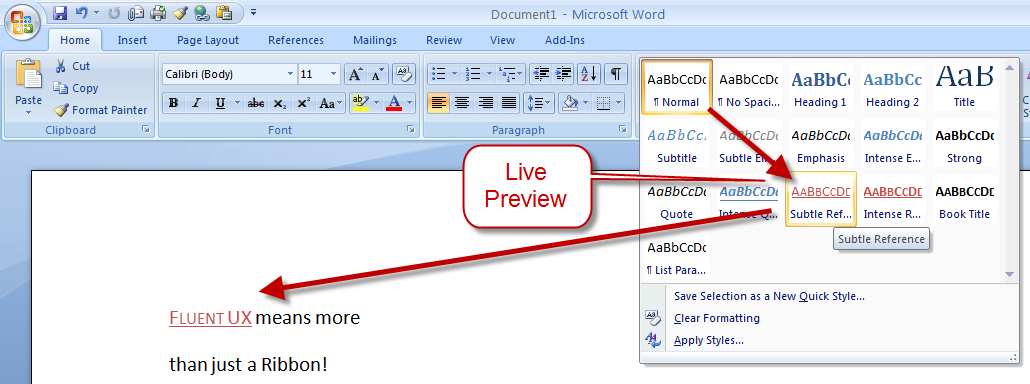

There are other teams interested in a similar problem space. Perhaps the best-known new alternative to the traditional menu is Microsoft’s Ribbon. Introduced first as part of a series of changes called Fluent UX in Office, the ribbon is now making its way to a wider set of Windows components and applications. It looks like this:

You can read about the ribbon from a supporter (like any UX change, it has its supporters and detractors) and if you’ve used it yourself, you will have your own opinion about it. The ribbon is highly visual, making options and commands very visible. It is however also a hog of space (I’m told it can be minimised). Our goal in much of the Unity design has been to return screen real estate to the content with which the user is working; the HUD meets that goal by appearing only when invoked.


Instead of cluttering up the interface ALL the time, let’s clear out the chrome, and show users just what they want, when they want it.

Time will tell whether users prefer the ribbon, or the HUD, but we think it’s exciting enough to pursue and invest in, both in R&D and in supporting developers who want to take advantage of it.

Other relevant efforts include Enso and Ubiquity from the original Humanized team (hi Aza &co), then at Mozilla.

Our thinking is inspired by many works of science, art and entertainment; from Minority Report to Modern Warfare and Jef Raskin’s Humane Interface. We hope others will join us and accelerate the shift from pointy-clicky interfaces to natural and efficient ones.

Roadmap for the HUD

There’s a lot of design and code still to do. For a start, we haven’t addressed the secondary aspect of the menu, as a visible map of the functionality in an app. That discoverability is of course entirely absent from the HUD; the old menu is still there for now, but we’d like to replace it altogether, not just supplement it. And all the other patterns of interaction we expect in the HUD remain to be explored. Regardless, there is a great team working on this, including folk who understand Gtk and Qt such as Ted Gould, Ryan Lortie, Gord Allott and Aurelien Gateau, as well as designers Xi Zhu, Otto Greenslade, Oren Horev and John Lea. Thanks to all of them for getting this initial work to the point where we are confident it’s worthwhile for others to invest time in.

We’ll make sure it’s easy for developers working in any toolkit to take advantage of this and give their users a better experience. And we’ll promote the apps which do it best – it makes apps easier to use, it saves time and screen real-estate for users, and it creates a better impression of the free software platform when it’s done well.

From a code quality and testing perspective, even though we consider this first cut a prototype-grown-up, folk will be glad to see this:

    Overall coverage rate:
      lines......: 87.1% (948 of 1089 lines)
      functions..: 97.7% (84 of 86 functions)
      branches...: 63.0% (407 of 646 branches)
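The percentages in that summary are hits divided by totals, rounded to one decimal place. A quick way to check them (the `coverage` helper is a name made up for this check):

```python
def coverage(hit, total):
    """Coverage as a percentage, rounded to one decimal place."""
    return round(100 * hit / total, 1)


# Counts taken from the summary above.
for name, hit, total in [
    ("lines", 948, 1089),
    ("functions", 84, 86),
    ("branches", 407, 646),
]:
    print(f"{name}: {coverage(hit, total)}% ({hit} of {total})")
```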

Landing in 12.04 LTS is gated on more widespread testing. You can of course try this out from a PPA or branch the code in Launchpad (you will need these two branches). Or dig deeper with blogs on the topic from Ted Gould, Olli Ries and Gord Allott. Welcome to 2012 everybody!

Thursday, September 01. 2011

Microsoft explains Windows 8 dual-interface design

Via SlashGear

-----

Microsoft Windows chief Steven Sinofsky has taken to the Building Windows 8 blog to explain the company’s decision to keep two interfaces: the traditional desktop UI and the more tablet-friendly Metro UI. His explanation seemed to be in response to criticism and confusion after the latest details were revealed on the new Windows 8 Explorer interface.

On Monday, details on the Windows 8 Explorer file manager interface were revealed showing what looked to be a very traditional Windows UI without any Metro elements. Reactions were mixed with many confused as to what direction Microsoft was heading with its Windows 8 interface. Well, Sinofsky is attempting to answer that and says that it is a “balancing act” of trying to get both interfaces working together harmoniously.

Sinofsky writes in his post:

Some of you are probably wondering how these parts work together to create a harmonious experience. Are there two user interfaces? Why not move on to a Metro style experience everywhere? On the other hand, others have been suggesting that Metro is only for tablets and touch, and we should avoid “dumbing down” Windows 8 with that design.

He proceeds to address each of these concerns, saying that the fluid and intuitive Metro interface is great on the tablet form factor, but when it comes down to getting serious work done, precision mouse and keyboard tools are still needed as well as the ability to run traditional applications. Hence, he explains that in the end they decided to bring the best of both worlds together for Windows 8.

With Windows 8 on a tablet, users can fully immerse themselves in the Metro UI and never see the desktop interface. In fact, the code for the desktop interface won’t even load. But, if the user needs to use the desktop interface, they can do so without needing to switch over to a laptop or other secondary device just for business or work.

A more detailed preview of Windows 8 is expected to take place during Microsoft’s Build developer conference in September. It’s been rumored that the first betas may be distributed to developers then along with a Windows 8 compatible hardware giveaway.

-----

Personal comments:

To round out the so-called 'Desktop Crisis' discussion, here is the point of view of Microsoft, which has decided to avoid mixing functionality between the desktop GUI and the tablet GUI.

The Subjectivity of Natural Scrolling

Monday, August 01. 2011

Switched On: Desktop divergence

Via Engadget

By Ross Rubin

-----

Last week's Switched On discussed how Lion's feature set could be perceived differently by new users or those coming from an iPad versus those who have used Macs for some time, while a previous Switched On discussed how Microsoft is preparing for a similar transition in Windows 8. Both OS X Lion and Windows 8 seek to mix elements of a tablet UI with elements of a desktop UI or -- putting it another way -- a finger-friendly touch interface with a mouse-driven interface. If Apple and Microsoft could wave a wand and magically have all apps adopt overnight so they could leave a keyboard and mouse behind, they probably would. Since they can't, though, inconsistency prevails.

Yet, while the OS X-iOS mashup that is Lion exhibits its share of growing pains, the fall-off effect isn't as pronounced as it appears it will be for Windows 8. The main reasons for this are, in order of increasing importance, legacy, hardware, and Metro.

Legacy. Microsoft has an incredibly strong commitment to backward compatibility. As long as Microsoft supports older Windows apps (which will be well into the future), there will be a more pronounced gap between that old user interface and the new. This will likely become more of a difference between Microsoft and Apple over time. For now, however, Apple is also treading lightly, and several of Lion's user interface changes -- including "natural" scrolling directions, Dashboard as a space, and the hiding of the hard drive on the desktop -- can be reversed. Even some of Lion's "full-screen" apps are only a cursor movement away from revealing their menus.

Hardware. As Apple continues to keep touchscreens off the Mac, it brings over the look but not the input experience of iPad apps, relying instead on the precision of a mouse or trackpad. Therefore, these Mac apps do not have to embrace finger-friendliness. In contrast, the "tablet" UI of Windows 8 is designed for fingertips and therefore demands a cleaner break with an interface designed for mice (although Microsoft preserves pointer control as well so these apps can be used on PCs without touchscreens).

Metro. A late entrant to the gesture-driven touchscreen handset wars, Microsoft sought to differentiate Windows Phone 7 with its panoramic user interface. When Joe Belfiore introduced Windows Phone 7 at Mobile World Congress in 2010, he repeatedly noted that "the phone is not a PC." That's an accurate assessment, and perhaps one worth repeating in light of all the feedback Microsoft ignored over the years in the design of Pocket PC and Windows Mobile. It also of course holds true beyond the user interface for design around context and support of location-based services.

But now that the folks in Redmond have created an enjoyable phone interface, have things actually changed? Was it true only that the phone and PC should not have the same old Windows interface, or is it also still true that the PC and phone should not have the same new Windows Phone interface? Was it the nature of the user interface itself that was at fault, or the notion of the same user interface across PC and phone regardless of how good it is?

There is certainly room for more consistency across PCs, tablets and handsets. However, Microsoft did not just differentiate Windows Phone 7 from iOS and Android, it differentiated it from Windows as well. And that is the main reason why the shift in context between a classic Windows app and a "tablet" Windows 8 app seems more striking at this point than the difference between a classic Mac app and "full screen" Lion app. Lion's full-screen apps could be the new point of crossover with Windows 8's "tablet" user interface mode. Based on what we've seen on the handset side, it is certainly possible for developers to write the same apps for the iPhone and Windows Phone 7, but these are generally simpler apps (and then there are games, which generally ignore most user interface conventions anyway).

Apple and Microsoft are both clearly striving for a simpler user experience, but Microsoft is also trying to adapt its desktop OS to a new form factor in the process of doing so. The balancing act for both companies will be making their new combinations of software and hardware (from partners in the case of Microsoft) embrace a new generation of users while minimizing alienation for the existing one.

-----

Personal comments:

See also this article

Tuesday, July 19. 2011

Is the Desktop Having an Identity Crisis?

Both Apple and Microsoft's new desktop operating systems borrow elements from mobile devices, in sometimes confusing ways.

Apple is widely expected to unveil a major update this week to OS X Lion, its operating system for desktop and laptop computers. Microsoft, meanwhile, is working on an even bigger overhaul of Windows, with a version called Windows 8.

Both new operating systems reflect a tectonic shift in personal computing. They incorporate elements from mobile operating systems alongside more conventional desktop features. But demos of both operating systems suggest that users could face a confusing mishmash of design ideas and interaction methods.

Windows 8 and OS X Lion include elements such as touch interaction and full-screen apps that will facilitate the kind of "unitasking" (as opposed to multitasking) that users have become accustomed to on mobile devices and tablets.

"The rise of the tablets, or at least the iPad, has suggested that there is a latent, unmet need for a new form of computing," says Peter Merholz, president of the user-experience and design firm Adaptive Path. However, he adds, "moving PCs in a tablet direction isn't necessarily sensible."

Cathy Shive, an independent software developer, would agree. She developed software for Mac desktop applications for six years before she switched and began developing for iOS (Apple's operating system for the iPhone and iPad). "When I first saw Steve Jobs's demo of Lion, I was really surprised—I was appalled, actually," she says.

Shive is surprised by the direction both Apple and Microsoft are taking. One fundamental dictate of usability design is that an interface should be tailored to the specific context—and hardware—in which it lives. A desktop PC is not the same thing as a tablet or a mobile device, yet in that initial demo, "It seemed like what [Jobs] was showing us was a giant iPad," says Shive.

A subsequent demonstration of Windows 8 by Microsoft vice president Julie Larson-Green confirmed that Redmond was also moving toward touch as a dominant interaction mechanism. One of the devices used in that demonstration, a "media tablet" from Taiwan-based ASUS, resembled an LCD monitor with no keyboard.

Not everyone is so skeptical about Apple and Microsoft's plans. Lukas Mathis, a programmer and usability expert, thinks that, on balance, this shift is a good thing. "If you watch casual PC users interact with their computers, you'll quickly notice that the mouse is a lot harder to use than we think," he says. "I'm glad to see finger-friendly, large user interface elements from phones and tablets make their way into desktop operating systems. This change was desperately needed, and I was very happy to see it."

Mathis argues that experienced PC users don't realize how crowded with "small buttons, unclear icons, and tiny text labels" typical desktop operating systems are.

Lion and Windows 8 solve these problems in slightly different ways. In Lion, file management is moving toward an iPhone/iPad-style model, where users launch applications from a "Launchpad," and their files are accessible from within those applications. In Windows 8, files, along with applications, bookmarks, and just about anything else, can be made accessible from a customizable start screen.

Some have criticized Mission Control, Apple's new centralized app and window management interface, saying that it adds complexity rather than introducing the simplicity of a mobile interface. At the other extreme, Lion allows any app to be rendered full-screen, which blocks out distractions but also forces users to switch applications more often than necessary.

"The problem [with a desktop OS] is that it's hard to manage windows," says Mathis. "The solution isn't to just remove windows altogether; the solution is to fix window management so it's easier to use, but still allows you to, say, write an essay in one window, but at the same time look at a source for your essay in a different window."

Windows 8, meanwhile, attempts to solve this problem in a more elegant way, with a "Windows Snap," which allows apps to be viewed side-by-side while eliminating the need to manage their dimensions by dragging them from the corner.

A problem with moving toward a touch-centric interface is that the mouse is absolutely necessary for certain professional applications. "I can't imagine touch in Microsoft Excel," says Shive. "That's going to be terrible," she says.

The most significant difference between Apple's approach and Microsoft's is that Windows 8 will be the same OS no matter what device it's on, from a mobile phone to a desktop PC. To accommodate a range of devices, Microsoft has left intact the original Windows interface, which users can switch to from the full-screen start screen and full-screen apps option.

Merholz believes Microsoft's attempt to make its interface consistent across all devices may be a mistake. "Microsoft has a history of overemphasizing the value of 'Windows everywhere.' There's a fear they haven't learned appropriateness, given the device and its context," he says.

Shive believes the same could be said of Apple. "Apple has been seduced by their own success, and they're jumping to translate that over to the desktop ... They think there's some kind of shortcut, where everyone is loving this interface on the mobile device, so they will love it on their desktop as well," she says.

In a sense, both Apple and Microsoft are about to embark on a beta test of what the PC should be like in an era when consumers are increasingly accustomed to post-PC modes of interaction. But it could be a bumpy process. "I think we can get there, but we've been using the desktop interface for 30 years now, and it's not going to happen overnight," says Shive.

-----

Personal Comments:

From my personal point of view, and based on my 30 years of IT/dev experience, I do not see the change in the desktop look and feel as a crisis but rather as a simple and efficient aesthetic evolution.

Why? Because what was done first for mobile phones, and then for newer mobile devices such as tablets, is what some people had been trying to do with laptop and desktop GUIs for years: make the experience simple enough that computers become accessible to everyone, even those most resistant to technology (see the evolution of the Windows and Linux GUIs). That goal was reached on mobile devices in a very short time, pushing ordinary people to change devices every two years and letting them enjoy new functionality and technology without reading a single page of an instruction manual (by the way, mobile phones are delivered without one!).

It looks like technological constraints and restrictions were needed in order to invent this kind of interface. Touchscreen-only mobile phones had been available for years before Apple produced its first iPhone (2007): remember the Sony Ericsson P800 (2002) and its successor the P900 (2003). Technically everything was there (they are close to the "classic" smartphone we carry in our pockets nowadays), but an efficient GUI and, more generally, an efficient OS were sorely missing. What Apple did with iOS, Google with Android, and HTC with its Sense UI on top of Android brought out and demonstrated the obvious potential of these mobile devices.

The adaptation of these GUIs and OSes to tablets (iOS, Android 3.0), still under the touch-only constraint, gives rise to new GUI solutions while extending what can be done with a few basic finger gestures. It is not surprising that classic desktop and laptop computers are now trying to integrate the best of all this into their own environment, since they did not succeed in doing so on their own before. I would even say that this is an obvious step forward, as many ideas can be adapted to the desktop world. For example, bringing the app store concept to desktop computers makes installing applications easier: one does not have to wonder whether an application is compatible with the local hardware, because the app store only offers compatible applications, seamlessly.

So rather than entering a crisis or a revolution, I would say the desktop world will simply take from the mobile world whatever can be adapted to make the desktop experience as seamless for the end user as it is on mobile devices... but for some basic tasks only.

You can wrap a desktop OS in a very nice and simple box that makes things look much like the simplicity of a mobile device, but this is just a kind of gift wrapping that does not suit all the uses you can face on a desktop computer... making this step forward look like a set of cosmetic changes, and nothing more... because it simply cannot be more!

Today, we are used to glorious declarations each time a new OS is offered to end users. Many of the so-called "new" features are nothing more than existing ones that were redesigned and pushed onto the scene to give the impression of a revolutionary OS: who can seriously consider full-screen apps or automatic saving as key new features for a 21st-century OS?

Let's go through some of the key new features announced by Apple in Mac OS X Lion:

- Multi-touch: this is not a new feature; it "just" adds some new functionality mapped to already available multi-touch gestures.

- Full Screen management: it basically attaches a virtual desktop to any application running in full screen. Thus, you can switch between full-screen applications the same way you could already switch from one virtual desktop to another.

- LaunchPad: this is basically a graphical interface/shortcut for the 'Applications' folder in the Finder. OK, it looks like the apps grid on a tablet or a mobile phone... but as it was already presented as a list, the other option was... guess what... a grid!

- Mission Control: this is also an evolution of something that already existed: the ability to see all your windows in addition to all your virtual desktops.

I'm pretty convinced that these new features are going to be really useful and pleasant to use, making the touchpad on the MacBook even more essential, but I do not see here a real revolution, nor a crisis, in the way we are going to work on desktop/laptop computers.
