Entries tagged as software

Wednesday, April 25. 2012

PlayThru offers playful captcha alternative

Via übergizmo

-----

Don’t you just hate it when you need to solve a captcha every time you want to log in to certain websites? You know, those irritating slanted and jumbled groups of letters and numbers, where sometimes you cannot even tell whether it is the letter ‘o’ or the number ‘0’, or whether a particular letter is in uppercase or not. Captchas have been employed for some years already in order to verify that the person behind the computer is made out of flesh and bone, and is not an automated robot or program of any kind. Detroit-based tech company Are You A Human (interesting name) has come up with a different way of verifying the authenticity of a user – not through captchas, but rather through a simple game known as PlayThru.

PlayThru claims to prevent bots from spamming sites, as the game can only be completed by an actual human being. Definitely sounds far more fun in theory to “solve”, and if your less than informed boss walks by your desk to see you play the latest game, just tell him or her that you are solving a captcha replacement before you are able to start work.

To get a better idea on how PlayThru works, here is an example of just one of the games. You will be presented with your fair share of items, including a shoe, a football jersey, an olive and a piece of bacon, where all of them will float right beside a pizza. Should you drag the right ingredients over the pizza, then you would have “won”, and so far, I do not think that anyone would like a topping of shoes on their pizza.

Friday, March 30. 2012

Fake ID holders beware: facial recognition service Face.com can now detect your age

Via VB

-----

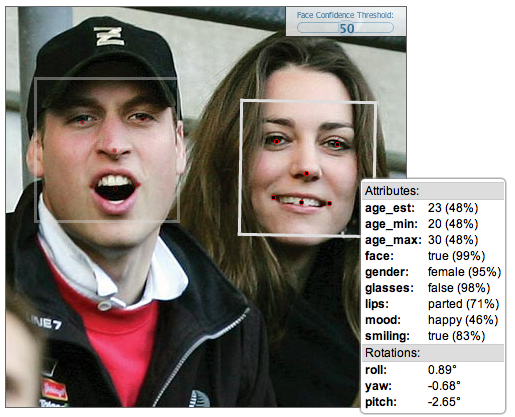

Facial-recognition platform Face.com could foil the plans of all those under-age kids looking to score some booze. Fake IDs might not fool anyone for much longer, because Face.com claims its new application programming interface (API) can be used to detect a person’s age by scanning a photo.

With its facial recognition system, Face.com has built two Facebook apps that can scan photos and tag them for you. The company also offers an API for developers to use its facial recognition technology in the apps they build.

Its latest update to the API can scan a photo and supposedly determine a person’s minimum age, maximum age, and estimated age. It might not be spot-on accurate, but it could get close enough to determine your age group.

“Instead of trying to define what makes a person young or old, we provide our algorithms with a ton of data and the system can reverse engineer what makes someone young or old,” Face.com chief executive Gil Hirsch told VentureBeat in an interview. “We use the general structure of a face to determine age. As humans, our features are either heightened or softened depending on the age. Kids have round, soft faces and as we age, we have elongated faces.”

The algorithms also take wrinkles, facial smoothness, and other telling age signs into account to place each scanned face into a general age group. The accuracy, Hirsch told me, is determined by how old a person looks, not necessarily how old they actually are. The API also provides a confidence level on how well it could determine the age, based on image quality and how the person looks in the photo, i.e. if they are turned to one side or are making a strange face.

“Adults are much harder to figure out [their age], especially celebrities. On average, humans are much better at detecting ages than machines,” said Hirsch.

The hope is to build the technology into apps that restrict or tailor content based on age. For example the API could be built into a Netflix app, scan a child’s face when they open the app, determine they’re too young to watch The Hangover, and block it. Or — and this is where the tech could get futuristic and creepy — a display with a camera could scan someone’s face when they walk into a store and deliver ads based on their age.
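As a rough illustration of the content-gating idea, the Python sketch below shows how an app might act on an age estimate. It does not call the Face.com API. The `AgeEstimate` fields mirror the minimum age, maximum age, estimated age and confidence level described above, but the field names, the 0-to-1 confidence scale and the 0.8 threshold are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class AgeEstimate:
    """Hypothetical container for an age-detection result (field names are assumed)."""
    min_age: int
    max_age: int
    estimated_age: int
    confidence: float  # assumed scale: 0.0 (no confidence) to 1.0 (certain)


def may_view(estimate: AgeEstimate, required_age: int, min_confidence: float = 0.8) -> bool:
    """Allow content only when even the lowest plausible age meets the requirement.

    Low-confidence results (poor image, turned head) count as a refusal,
    so the caller can fall back to another check such as a PIN.
    """
    if estimate.confidence < min_confidence:
        return False
    return estimate.min_age >= required_age


# Made-up sample values, not real API output.
child = AgeEstimate(min_age=7, max_age=11, estimated_age=9, confidence=0.93)
adult = AgeEstimate(min_age=24, max_age=35, estimated_age=29, confidence=0.90)
blurry = AgeEstimate(min_age=20, max_age=40, estimated_age=30, confidence=0.40)

print(may_view(child, required_age=17))   # False
print(may_view(adult, required_age=17))   # True
print(may_view(blurry, required_age=17))  # False
```

Gating on the minimum age rather than the point estimate is a deliberately cautious choice: since the estimate reflects how old someone looks, an app that restricts content would probably rather refuse a borderline adult than admit a child.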

In addition to the age-detection feature, Face.com says it has updated its API with 30 percent better facial recognition accuracy and new recognition algorithms. The updates were announced Thursday and the API is available for any developer to use.

One developer has already used the API to build an app called Age Meter, which is available in the Apple App Store. On its iTunes page, the entertainment-purposes-only app shows pictures of Justin Bieber and Barack Obama with approximate ages above their photos.

Other companies in this space include Cognitec, with its FaceVACS software development kit, and Bayometric, which offers FaceIt Face Recognition. Google has also developed facial-recognition technology for Android 4.0 and Apple applied for a facial recognition patent last year.

The technology behind scanning someone’s picture, or even their face, to figure out their age still needs to be developed for complete accuracy. But the day when bouncers and liquor store cashiers can use an app to scan a fake ID holder’s face, determine that they are younger than the legal drinking age, and refuse to sell them wine coolers may not be too far off.

Thursday, March 15. 2012

MIT App Inventor open beta preview debuts

Via Slash Gear

-----

Back in January, we talked a bit about the new MIT App Inventor software aimed at helping people that aren’t developers to build their own apps. MIT promised to have App Inventor available in Q1 of 2012. The first quarter is quickly winding down, and it was looking a bit like MIT might not make its self-imposed deadline.

MIT has now announced that it is meeting the goal of making App Inventor available as a public service in Q1. The App Inventor software has been in closed testing for the last two months with 5000 users. It is now available in open beta to anyone who has a Google ID to log in with, such as a Gmail account.

MIT points out that the software is suitable for any use, but users need to be aware that this will be the first time the system is loaded so heavily, which could cause issues. MIT suggests that users make backups of important apps as the service ramps up with more and more users, in case there are issues. MIT also notes that it is still working on fixing remaining glitches and other errors.

We owe a large debt to our testers of the past few months; it’s been their feedback that’s given us the confidence for today’s announcement. And we’re tremendously grateful to the folks who have been running their own system with the MIT JAR files. Their experiences have been an invaluable source of information, and their work has been critical in keeping App Inventor alive while the MIT service was not yet available. We also want to acknowledge the growing group of developers who are starting to explore the App Inventor source code. They are the seeds of an open source community that we hope will take App Inventor beyond anything we could do by ourselves at MIT. And our extreme gratitude and admiration goes to the Google App Inventor team who, even while their project transitions out of Google, have continued to share their expertise and the fruit of their hard work of the past three years.

Monday, March 12. 2012

Mozilla’s Boot 2 Gecko and why it could change the world

Via Know Your Mobile

-----

‘Android is not open source.’

That’s what Mozilla’s Director of Research Andreas Gal thinks of Google’s purportedly ‘open source’ mobile operating system. In Gal’s view Google’s platform is no different from Apple’s iOS. The entire platform – including its design, development, and direction – is ‘dominated by Google.’

According to Gal, ‘Google makes all of the technological decisions behind closed doors and pushes them outwards. You may or may not get a look at the source after the device comes out. But it’s certainly not open. And in this sense it’s no different from Apple’s platform, except that maybe sometimes you get access to the source.’

And this is where Mozilla comes into the equation. Boot 2 Gecko is based solely on HTML5, JavaScript and CSS and is completely open source. Mozilla doesn’t even keep a ‘physical’ copy of the source code in its offices – everything to do with the platform is available online for all to see.

Brendan Eich, Mozilla’s co-founder (and the inventor of JavaScript), told Know Your Mobile that the days of native shells (iOS/Android) and proprietary software (Objective-C) could soon be over as Mozilla continues to standardise and implement Open Web APIs that will one day eradicate the need for separate platforms, allowing users to find and use apps on their mobiles without having to opt into a privately owned platform.

‘Separate platforms are no longer necessary once you have the correct standardisation and inter-operation,’ said Eich.

Apple’s iOS, Microsoft’s Windows Phone, RIM’s BlackBerry OS 10 and Google’s Android operating systems are all ‘walled gardens,’ according to Gal, meaning that all of the above are in it for one reason: to make money.

‘Google builds Android not for your benefit but for Google’s benefit, and the shareholders it has to satisfy. This is the same with Apple,’ said Gal. He added: ‘Mozilla is very different – we are a non-profit organisation. In the past Mozilla was all about making the web better. But now people are going to mobile, so we’re following them there.’

‘What we’ve developed [with B2G] is a completely open stack that is 100 per cent free. We have a publicly visible repository and all the development happens in the open. We use completely open standards and there’s no proprietary software or technology involved.’

So what is Mozilla getting at here? Simple: dump the standard smartphone operating system, forget Apple and Google, and embrace the freedom of pure HTML5.

Gal tells us that because the B2G stack is based on HTML5 there are literally millions of developers out there that know how to create content for the platform. There will also be plenty of opportunities for developers to make money from their creations as well, according to Gal.

Google and Mozilla have developed technology that lets web developers manifest their entire site, including payment methods, into an icon that can be placed on a B2G device’s homescreen.

But all this, Gal tells us, is still work in progress. Boot 2 Gecko is still in its embryonic stages at present – but the ball has certainly begun rolling.

‘We’re working with operators to create an easy way for customers to pay for content,’ said Gal. ‘Mobile users want to go to a store, discover content and pay for it easily. We’re working on making this a reality inside B2G via personal identity systems.’

Persona, featuring BrowserID, is one such personal identity system. Persona lets users use their email address and a single password to sign in or buy materials and media. Mozilla demoed Persona at MWC 2012.

‘You own your applications. You own your data and you have the power to take them wherever you like,’ said Eich. ‘And this will be dependent on things like Persona, which is the most secure and safe password free sign-on and the identity providers don’t see all of your details like they would with Facebook Connect, for instance.’

He added: ‘the end result is an “unwalled garden” where you’re free to move around without being forced into opting fully into one platform.’

But what’s most impressive about B2G is how well it runs on low-end hardware. During our meeting with Gal and Eich, we got a demo of B2G running incredibly smoothly on a $60 handset with a 600MHz CPU and just 128MB of RAM. Gaming, web browsing, video and typing were all seamless.

Gal also confirmed that Qualcomm is partnering with Mozilla on its B2G project.

B2G is based on the same web-rendering engine as Mozilla’s Firefox browser, meaning that it is extremely lightweight when compared to Android and iOS. For this reason getting smartphone-level performance out of a budget mobile handset suddenly becomes a reality.

‘There are so many opportunities for technology like this [B2G] in emerging countries. What people are looking for there is a solid smartphone experience – browsing, web browsing and applications – at a decent price point. Users in India, for instance, cannot afford Google’s quad-core devices but they could afford a $60 HTML5-powered B2G handset.’

‘Google’s Android platform is too hardware dependent,’ says Gal. ‘Android 4.0 demands 512MB of RAM as a minimum for instance. Mozilla’s web stack allows OEMs to produce $60 handsets with smartphone-like performance.’

He added: ‘But of course if you add in extra hardware for higher tier phones, the performance will only get better.’

Thursday, February 16. 2012

Google Sky Map development ends, app goes open source

-----

If you’re a fan of Google’s augmented reality astronomy app Google Sky Map, I’ve got good news and bad news for you. Google announced that major development on the app has ended, so there will be no more major official releases from the company. On the plus side, they’ve decided to release the open-source code for Sky Map, so given enough developer interest it should be around for quite some time.

Sky Map started as one of Google’s famous 20% projects, which six of its employees launched by working in their company-sponsored spare time. The application was one of Android’s first showpiece apps, combining basic astronomical data overlaid on a smartphone camera to easily identify constellations, planets and other heavenly bodies by simply pointing the phone towards the sky. The free app has been downloaded over 10 million times from the Android Market.

Google is working with Carnegie Mellon University so that its students can continue direct development. The company didn’t say whether updates built from the computer science students’ code would make it into the Android Market, but it’s a pretty safe bet. If you’d like to give it a try for yourself, you can download the open-source code here. I fully expect a Star Trek themed version of Sky Map in the next few weeks which will allow me to view the Alpha Quadrant from my smartphone – get to it, devs.

Tuesday, February 07. 2012

It's Kinect day! The Kinect For Windows SDK v1 is out!

Via Channel 9

-----

As everyone reading this blog, and everyone in the Kinect for Windows space, knows, today is a big day. From what was a cool peripheral for the Xbox 360 last year, the Kinect for Windows SDK, and now the dedicated Kinect for Windows hardware device, has taken the world by storm. In the last year we've seen some simply amazing ideas and projects, many highlighted here in the Kinect for Windows Gallery, from health to education, to musical expression, to simply just fun.

And that was all with beta software and a device meant for a gaming console.

With a fully supported dedicated device that is allowed for use in commercial products, plus an updated SDK, today the world changes again.

Welcome to the Kinect for Windows SDK v1!

Kinect for Windows is now Available!

On January 9th, Steve Ballmer announced at CES that we would be shipping Kinect for Windows on February 1st. I am very pleased to report that today version 1.0 of our SDK and runtime were made available for download, and distribution partners in our twelve launch countries are starting to ship Kinect for Windows hardware, enabling companies to start to deploy their solutions. The suggested retail price is $249, and later this year, we will offer special academic pricing of $149 for Qualified Educational Users.

In the three months since we released Beta 2, we have made many improvements to our SDK and runtime, including:

- Support for up to four Kinect sensors plugged into the same computer

- Significantly improved skeletal tracking, including the ability for developers to control which user is being tracked by the sensor

- Near Mode for the new Kinect for Windows hardware, which enables the depth camera to see objects as close as 40 centimeters in front of the device

- Many API updates and enhancements in the managed and unmanaged runtimes

- The latest Microsoft Speech components (V11) are now included as part of the SDK and runtime installer

- Improved “far-talk” acoustic model that increases speech recognition accuracy

- New and updated samples, such as Kinect Explorer, which enables developers to explore the full capabilities of the sensor and SDK, including audio beam and sound source angles, color modes, depth modes, skeletal tracking, and motor controls

- A commercial-ready installer which can be included in an application’s set-up program, making it easy to install the Kinect for Windows runtime and driver components for end-user deployments.

- Robustness improvements including driver stability, runtime fixes, and audio fixes

More details can be found here.

If you're like me, you want to know more about what's new... So here's a snip from the Kinect for Windows SDK v1 Release Notes:

5. Changes since the Kinect for Windows SDK Beta 2 release

- Support for up to 4 Kinect sensors plugged into the same computer, assuming the computer is powerful enough and they are plugged in to different USB controllers so that there is enough bandwidth available. (As before, skeletal tracking can only be used on one Kinect per process. The developer can choose which Kinect sensor.)

· Skeletal Tracking

- The Kinect for Windows Skeletal Tracking system is now tracking subjects with results equivalent to the Skeletal Tracking library available in the November 2011 Xbox 360 Development Kit.

- The Near Mode feature is now available. It is only functional on Kinect for Windows Hardware; see the Kinect for Windows Blog post for more information.

- Robustness improvement including driver stability, runtime and audio fixes.

- API Updates and Enhancements

- See a blog post detailing migration information from Beta 2 to v1.0 here: Migrating from Beta 2

- Many renaming changes to both the managed and native APIs for consistency and ease of development. Changes include:

- Consolidation of managed and native runtime components into a minimal set of DLLs

- Renaming of managed and native APIs to align with product team design guidelines

- Renaming of headers, libs, and references assemblies

- Significant managed API improvements:

- Consolidation of namespaces into Microsoft.Kinect

- Improvements to DepthData object

- Skeleton data is now serializable

- Audio API improvements, including the ability to connect to a specific Kinect on a computer with multiple Kinects

- Improved error handling

- Improved initialization APIs, including the addition of the Initializing state to the Status property and StatusChanged events

- Set Tracked Skeleton API support is now available in native and managed code. Developers can use this API to lock on to 1 or 2 skeletons, among the possible 6 proposed.

- Mapping APIs: The mapping APIs on KinectSensor that allow you to map depth pixels to color pixels have been updated for simplicity of usage, and are no longer restricted to 320x240 depth format.

- The high-res RGB color mode of 1280x1024 has been replaced by the similar 1280x960 mode, because that is the mode supported by the official Kinect for Windows hardware.

- Frame event improvements. Developers now receive frame events in the same order as Xbox 360, i.e. color then depth then skeleton, followed by an AllFramesReady event when all data frames are available.

- Managed API Updates

Correct FPS for High Res Mode

Renamed ColorImageFormat.RgbResolution1280x960Fps15 to ColorImageFormat.RgbResolution1280x960Fps12

Enum Polish

Added Undefined enum value to a few Enums: ColorImageFormat, DepthImageFormat, and KinectStatus

Depth Values

DepthImageStream now defaults IsTooFarRangeEnabled to true (and removed the property).

Beyond the depth values that are returnable (800-4000 for DepthRange.Default and 400-3000 for DepthRange.Near), we also will return the following values:

DepthImageStream.TooNearDepth (for things that we know are less than the DepthImageStream.MinDepth)

DepthImageStream.TooFarDepth (for things that we know are more than the DepthImageStream.MaxDepth)

DepthImageStream.UnknownDepth (for things that we don’t know.)
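The range rules above can be sketched in a few lines of Python. This is a language-neutral model of the described behaviour, not the SDK's managed API: the function name, the string labels and the use of `None` for an unknown reading are assumptions for illustration, and the values are taken to be millimetres (which matches the 40-centimetre Near Mode figure mentioned earlier). Only the two ranges come from the release notes.

```python
from enum import Enum
from typing import Optional


class DepthRange(Enum):
    """Returnable (min, max) depth per range, as quoted in the release notes."""
    DEFAULT = (800, 4000)
    NEAR = (400, 3000)


def classify_depth(distance_mm: Optional[int], depth_range: DepthRange) -> str:
    """Label a reading as in range, too near, too far, or unknown.

    The labels are stand-ins for the TooNearDepth / TooFarDepth / UnknownDepth
    markers; the real SDK reports these as special depth values instead.
    """
    if distance_mm is None:
        return "unknown"
    min_depth, max_depth = depth_range.value
    if distance_mm < min_depth:
        return "too_near"
    if distance_mm > max_depth:
        return "too_far"
    return "in_range"


print(classify_depth(600, DepthRange.DEFAULT))   # too_near
print(classify_depth(600, DepthRange.NEAR))      # in_range
print(classify_depth(3500, DepthRange.NEAR))     # too_far
print(classify_depth(None, DepthRange.DEFAULT))  # unknown
```

The same 600 reading lands in different buckets depending on the range, which is the practical effect of switching the sensor into Near Mode.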

Serializable Fixes for Skeleton Data

We’ve added the SerializableAttribute on Skeleton, JointCollection, Joint and SkeletonPoint

Mapping APIs

Performance improvements to the existing per pixel API.

Added a new API for doing full-frame conversions:

public void MapDepthFrameToColorFrame(DepthImageFormat depthImageFormat, short[] depthPixelData, ColorImageFormat colorImageFormat, ColorImagePoint[] colorCoordinates);

Added KinectSensor.MapSkeletonPointToColor()

public ColorImagePoint MapSkeletonPointToColor(SkeletonPoint skeletonPoint, ColorImageFormat colorImageFormat);

Misc

Renamed Skeleton.Quality to Skeleton.ClippedEdges

Changed return type of SkeletonFrame.FloorClipPlane to Tuple<int, int, int, int>.

Removed SkeletonFrame.NormalToGravity property.

· Audio & Speech

- The Kinect SDK now includes the latest Microsoft Speech components (V11 QFE). Our runtime installer chain-installs the appropriate runtime components (32-bit speech runtime for 32-bit Windows, and both 32-bit and 64-bit speech runtimes for 64-bit Windows), plus an updated English Language pack (en-us locale) with improved recognition accuracy.

- Updated acoustic model that improves the accuracy in the confidence numbers returned by the speech APIs

- Kinect Speech Acoustic Model now has the same icon and similar description as the rest of the Kinect components

- Echo cancellation will now recognize the system default speaker and attempt to cancel the noise coming from it automatically, if enabled.

- Kinect Audio with AEC enabled now works even when no sound is coming from the speakers. Previously, this case caused problems.

- Audio initialization has changed:

- C++ code must call NuiInitialize before using the audio stream

- Managed code must call KinectSensor.Start() before KinectAudioSource.Start()

- It takes about 4 seconds after initialize is called before audio data begins to be delivered

- Audio/Speech samples now wait for 4 seconds for Kinect device to be ready before recording audio or recognizing speech.

· Samples

- A sample browser has been added, making it easier to find and view samples. A link to it is installed in the Start menu.

- ShapeGame and KinectAudioDemo (via a new KinectSensorChooser component) demonstrate how to handle Kinect Status as well as inform users about erroneously trying to use a Kinect for Xbox 360 sensor.

- The Managed Skeletal Viewer sample has been replaced by Kinect Explorer, which adds displays for audio beam angle and sound source angle/confidence, and provides additional control options for the color modes, depth modes, skeletal tracking options, and motor control. Click on “(click for settings)” at the bottom of the screen for all the bells and whistles.

- Kinect Explorer (via an improved SkeletonViewer component) displays bones and joints differently, to better illustrate which joints are tracked with high confidence and which are not.

- KinectAudioDemo no longer saves unrecognized utterances files in temp folder.

- An example of AEC and Beam Forming usage has been added to the KinectAudioDemo application.

- Redistributable Kinect for Windows Runtime package

- There is a redist package, located in the redist subdirectory of the SDK install location. This redist is an installer exe that an application can include in its setup program, which installs the Kinect for Windows runtime and driver components.

Thursday, February 02. 2012

Introducing the HUD. Say hello to the future of the menu.

-----

The desktop remains central to our everyday work and play, despite all the excitement around tablets, TV’s and phones. So it’s exciting for us to innovate in the desktop too, especially when we find ways to enhance the experience of both heavy “power” users and casual users at the same time. The desktop will be with us for a long time, and for those of us who spend hours every day using a wide diversity of applications, here is some very good news: 12.04 LTS will include the first step in a major new approach to application interfaces.

This work grows out of observations of new and established / sophisticated users making extensive use of the broader set of capabilities in their applications. We noticed that both groups of users spent a lot of time, relatively speaking, navigating the menus of their applications, either to learn about the capabilities of the app, or to take a specific action. We were also conscious of the broader theme in Unity design of leading from user intent. And that set us on a course which led to today’s first public milestone on what we expect will be a long, fruitful and exciting journey.

The menu has been a central part of the GUI since Xerox PARC invented ‘em in the 70’s. It’s the M in WIMP and has been there, essentially unchanged, for 30 years.

We can do much better!

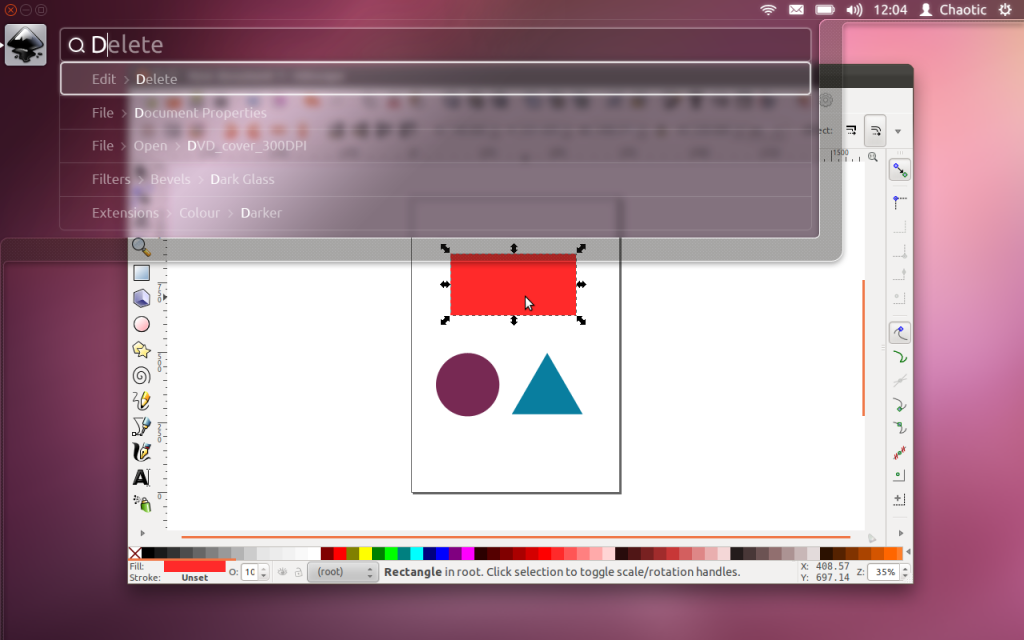

Say hello to the Head-Up Display, or HUD, which will ultimately replace menus in Unity applications. Here’s what we hope you’ll see in 12.04 when you invoke the HUD from any standard Ubuntu app that supports the global menu:

The intenterface – it maps your intent to the interface

This is the HUD. It’s a way for you to express your intent and have the application respond appropriately. We think of it as “beyond interface”, it’s the “intenterface”. This concept of “intent-driven interface” has been a primary theme of our work in the Unity shell, with dash search as a first class experience pioneered in Unity. Now we are bringing the same vision to the application, in a way which is completely compatible with existing applications and menus.

The HUD concept has been the driver for all the work we’ve done in unifying menu systems across Gtk, Qt and other toolkit apps in the past two years. So far, that’s shown up as the global menu. In 12.04, it also gives us the first cut of the HUD.

Menus serve two purposes. They act as a standard way to invoke commands which are too infrequently used to warrant a dedicated piece of UI real-estate, like a toolbar button, and they serve as a map of the app’s functionality, almost like a table of contents that one can scan to get a feel for ‘what the app does’. It’s command invocation that we think can be improved upon, and that’s where we are focusing our design exploration.

As a means of invoking commands, menus have some advantages. They are always in the same place (top of the window or screen). They are organised in a way that’s quite easy to describe over the phone, or in a text book (“click the Edit->Preferences menu”), they are pretty fast to read since they are generally arranged in tight vertical columns. They also have some disadvantages: when they get nested, navigating the tree can become fragile. They require you to read a lot when you probably already know what you want. They are more difficult to use from the keyboard than they should be, since they generally require you to remember something special (hotkeys) or use a very limited subset of the keyboard (arrow navigation). They force developers to make often arbitrary choices about the menu tree (“should Preferences be in Edit or in Tools or in Options?”), and then they force users to make equally arbitrary effort to memorise and navigate that tree.

The HUD solves many of these issues, by connecting users directly to what they want. Check out the video, based on a current prototype. It’s a “vocabulary UI”, or VUI, and closer to the way users think. “I told the application to…” is common user paraphrasing for “I clicked the menu to…”. The tree is no longer important, what’s important is the efficiency of the match between what the user says, and the commands we offer up for invocation.

In 12.04 LTS, the HUD is a smart look-ahead search through the app and system (indicator) menus. The image is showing Inkscape, but of course it works everywhere the global menu works. No app modifications are needed to get this level of experience. And you don’t have to adopt the HUD immediately, it’s there if you want it, supplementing the existing menu mechanism.

It’s smart, because it can do things like fuzzy matching, and it can learn what you usually do so it can prioritise the things you use often. It covers the focused app (because that’s where you probably want to act) as well as system functionality; you can change IM state, or go offline in Skype, all through the HUD, without changing focus, because those apps all talk to the indicator system. When you’ve been using it for a little while it seems like it’s reading your mind, in a good way.
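To make the idea concrete, here is a minimal Python sketch of a look-ahead search that combines fuzzy matching with a simple usage count. It is not how the Unity HUD is implemented: the `MenuSearch` class, the 0.3 cut-off and the scoring weights are all invented for this example, and the standard library's `difflib.SequenceMatcher` stands in for whatever matcher the real HUD uses.

```python
from collections import Counter
from difflib import SequenceMatcher


class MenuSearch:
    """Toy model of a HUD-style search over flattened menu entries."""

    def __init__(self, commands):
        self.commands = list(commands)
        self.usage = Counter()  # how often each command has been invoked

    def record_use(self, command):
        self.usage[command] += 1

    def search(self, query, limit=3):
        query = query.lower()
        scored = []
        for command in self.commands:
            label = command.lower()
            # Fuzzy similarity tolerates typos and partial words.
            similarity = SequenceMatcher(None, query, label).ratio()
            if query in label:
                similarity = max(similarity, 0.9)  # direct substring hits rank high
            if similarity < 0.3:
                continue  # too dissimilar to be worth showing
            # Frequently used commands get a small, capped boost (assumed weights).
            score = similarity + 0.05 * min(self.usage[command], 5)
            scored.append((score, command))
        # Best score first; ties fall back to alphabetical order.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        return [command for _, command in scored[:limit]]


hud = MenuSearch([
    "File > Save",
    "File > Save As...",
    "Edit > Preferences",
    "View > Zoom In",
])

print(hud.search("save"))        # both Save entries, plain "Save" first
print(hud.search("prefrences"))  # a typo still finds Preferences

hud.record_use("File > Save As...")
hud.record_use("File > Save As...")
print(hud.search("save"))        # "Save As..." now ranks first
```

Capping the usage boost keeps a habitual command from drowning out a much better textual match, which is one plausible way to balance the two signals.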

We’ll resurrect the (boring) old ways of displaying the menu in 12.04, in the app and in the panel. In the past few releases of Ubuntu, we’ve actively diminished the visual presence of menus in anticipation of this landing. That proved controversial. In our defence, in user testing, every user finds the menu in the panel, every time, and it’s obviously a cleaner presentation of the interface. But hiding the menu before we had the replacement was overly aggressive. If the HUD lands in 12.04 LTS, we hope you’ll find yourself using the menu less and less, and be glad to have it hidden when you are not using it. You’ll definitely have that option, alongside more traditional menu styles.

Voice is the natural next step

Searching is fast and familiar, especially once we integrate voice recognition, gesture and touch. We want to make it easy to talk to any application, and for any application to respond to your voice. The full integration of voice into applications will take some time. We can start by mapping voice onto the existing menu structures of your apps. And it will only get better from there.

But even without voice input, the HUD is faster than mousing through a menu, and easier to use than hotkeys since you just have to know what you want, not remember a specific key combination. We can search through everything we know about the menu, including descriptive help text, so pretty soon you will be able to find a menu entry using only vaguely related text (imagine finding an entry called Preferences when you search for “settings”).

There is lots to discover, refine and implement. I have a feeling this will be a lot of fun in the next two years.

Even better for the power user

The results so far are rather interesting: power users say things like “every GUI app now feels as powerful as VIM”. EMACS users just grunt and… nevermind. Another comment was “it works so well that the rare occasions when it can’t read my mind are annoying!”. We’re doing a lot of user testing on heavy multitaskers, developers and all-day-at-the-workstation personas for Unity in 12.04, polishing off loose ends in the experience that frustrated some in this audience in 11.04-10. If that describes you, the results should be delightful. And the HUD should be particularly empowering.

Even casual users find typing faster than mousing. So while there are modes of interaction where it’s nice to sit back and drive around with the mouse, we observe people staying more engaged and more focused on their task when they can keep their hands on the keyboard all the time. Hotkeys are a sort of mental gymnastics; the HUD is a continuation of mental flow.

Ahead of the competition

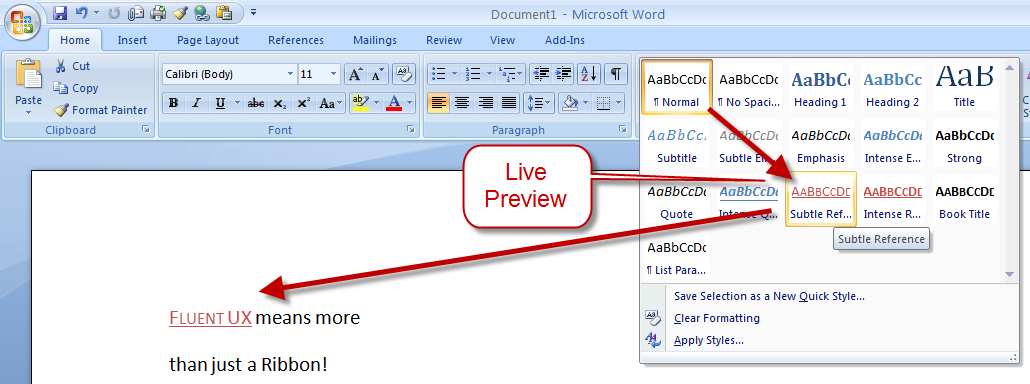

There are other teams interested in a similar problem space. Perhaps the best-known new alternative to the traditional menu is Microsoft’s Ribbon. Introduced first as part of a series of changes called Fluent UX in Office, the ribbon is now making its way to a wider set of Windows components and applications. It looks like this:

You can read about the ribbon from a supporter (like any UX change, it has its supporters and detractors) and if you’ve used it yourself, you will have your own opinion about it. The ribbon is highly visual, making options and commands very visible. It is however also a hog of space (I’m told it can be minimised). Our goal in much of the Unity design has been to return screen real estate to the content with which the user is working; the HUD meets that goal by appearing only when invoked.

Instead of cluttering up the interface ALL the time, let’s clear out the chrome, and show users just what they want, when they want it.

Time will tell whether users prefer the ribbon, or the HUD, but we think it’s exciting enough to pursue and invest in, both in R&D and in supporting developers who want to take advantage of it.

Other relevant efforts include Enso and Ubiquity from the original Humanized team (hi Aza &co), then at Mozilla.

Our thinking is inspired by many works of science, art and entertainment; from Minority Report to Modern Warfare and Jef Raskin’s Humane Interface. We hope others will join us and accelerate the shift from pointy-clicky interfaces to natural and efficient ones.

Roadmap for the HUD

There’s a lot of design and code still to do. For a start, we haven’t addressed the secondary aspect of the menu, as a visible map of the functionality in an app. That discoverability is of course entirely absent from the HUD; the old menu is still there for now, but we’d like to replace it altogether, not just supplement it. And all the other patterns of interaction we expect in the HUD remain to be explored. Regardless, there is a great team working on this, including folk who understand Gtk and Qt such as Ted Gould, Ryan Lortie, Gord Allott and Aurelien Gateau, as well as designers Xi Zhu, Otto Greenslade, Oren Horev and John Lea. Thanks to all of them for getting this initial work to the point where we are confident it’s worthwhile for others to invest time in.

We’ll make sure it’s easy for developers working in any toolkit to take advantage of this and give their users a better experience. And we’ll promote the apps which do it best – it makes apps easier to use, it saves time and screen real-estate for users, and it creates a better impression of the free software platform when it’s done well.

From a code quality and testing perspective, even though we consider this first cut a prototype-grown-up, folk will be glad to see this:

Overall coverage rate:
  lines......: 87.1% (948 of 1089 lines)
  functions..: 97.7% (84 of 86 functions)
  branches...: 63.0% (407 of 646 branches)

Landing in 12.04 LTS is gated on more widespread testing. You can of course try this out from a PPA or branch the code in Launchpad (you will need these two branches). Or dig deeper with blogs on the topic from Ted Gould, Olli Ries and Gord Allott. Welcome to 2012 everybody!

Wednesday, February 01. 2012

Killed by Code: Software Transparency in Implantable Medical Devices

Via Software Freedom Law Center

-----

Copyright © 2010, Software Freedom Law Center. Verbatim copying of this document is permitted in any medium; this notice must be preserved on all copies. †

I Abstract

Software is an integral component of a range of devices that perform critical, lifesaving functions and basic daily tasks. As patients grow more reliant on computerized devices, the dependability of software is a life-or-death issue. The need to address software vulnerability is especially pressing for Implantable Medical Devices (IMDs), which are commonly used by millions of patients to treat chronic heart conditions, epilepsy, diabetes, obesity, and even depression.

The software on these devices performs life-sustaining functions such as cardiac pacing and defibrillation, drug delivery, and insulin administration. It is also responsible for monitoring, recording and storing private patient information, communicating wirelessly with other computers, and responding to changes in doctors’ orders.

The Food and Drug Administration (FDA) is responsible for evaluating the risks of new devices and monitoring the safety and efficacy of those currently on market. However, the agency is unlikely to scrutinize the software operating on devices during any phase of the regulatory process unless a model that has already been surgically implanted repeatedly malfunctions or is recalled.

The FDA has issued 23 recalls of defective devices during the first half of 2010, all of which are categorized as “Class I,” meaning there is “reasonable probability that use of these products will cause serious adverse health consequences or death.” At least six of the recalls were likely caused by software defects.1 Physio-Control, Inc., a wholly owned subsidiary of Medtronic and the manufacturer of one defibrillator that was probably recalled due to software-related failures, admitted in a press release that it had received reports of similar failures from patients “over the eight year life of the product,” including one “unconfirmed adverse patient event.”2

Despite the crucial importance of these devices and the absence of comprehensive federal oversight, medical device software is considered the exclusive property of its manufacturers, meaning neither patients nor their doctors are permitted to access their IMD’s source code or test its security.3

In 2008, the Supreme Court of the United States’ ruling in Riegel v. Medtronic, Inc. made people with IMDs even more vulnerable to negligence on the part of device manufacturers.4 Following a wave of high-profile recalls of defective IMDs in 2005, the Court’s decision prohibited patients harmed by defects in FDA-approved devices from seeking damages against manufacturers in state court and eliminated the only consumer safeguard protecting patients from potentially fatal IMD malfunctions: product liability lawsuits. Prevented from recovering compensation from IMD-manufacturers for injuries, lost wages, or health expenses in the wake of device failures, people with chronic medical conditions are now faced with a stark choice: trust manufacturers entirely or risk their lives by opting against life-saving treatment.

We at the Software Freedom Law Center (SFLC) propose an unexplored solution to the software liability issues that are increasingly pressing as the population of IMD-users grows--requiring medical device manufacturers to make IMD source-code publicly auditable. As a non-profit legal services organization for Free and Open Source Software (FOSS) developers, part of the SFLC’s mission is to promote the use of open, auditable source code5 in all computerized technology. This paper demonstrates why increased transparency in the field of medical device software is in the public’s interest. It unifies various research into the privacy and security risks of medical device software and the benefits of published systems over closed, proprietary alternatives. Our intention is to demonstrate that auditable medical device software would mitigate the privacy and security risks in IMDs by reducing the occurrence of source code bugs and the potential for malicious device hacking in the long term. Although there is no way to eliminate software vulnerabilities entirely, this paper demonstrates that free and open source medical device software would improve the safety of patients with IMDs, increase the accountability of device manufacturers, and address some of the legal and regulatory constraints of the current regime.

We focus specifically on the security and privacy risks of implantable medical devices, in particular pacemakers and implantable cardioverter defibrillators, but they are a microcosm of the wider software liability issues which must be addressed as we become more dependent on embedded systems and devices. The broader objective of our research is to debunk the “security through obscurity” misconception by showing that vulnerabilities are spotted and fixed faster in FOSS programs compared to proprietary alternatives. The argument for public access to source code of IMDs can, and should be, extended to all the software people interact with every day. The well-documented recent incidents of software malfunctions in voting booths, cars, commercial airlines, and financial markets are just the beginning of a problem that can only be addressed by requiring the use of open, auditable source code in safety-critical computerized devices.6

In section one, we give an overview of research related to potentially fatal software vulnerabilities in IMDs and cases of confirmed device failures linked to source code vulnerabilities. In section two, we summarize research on the security benefits of FOSS compared to closed-source, proprietary programs. In section three, we assess the technical and legal limitations of the existing medical device review process and evaluate the FDA’s capacity to assess software security. We conclude with our recommendations on how to promote the use of FOSS in IMDs. The research suggests that the occurrence of privacy and security breaches linked to software vulnerabilities is likely to increase in the future as embedded devices become more common.

II Software Vulnerabilities in IMDs

A variety of wirelessly reprogrammable IMDs are surgically implanted directly into the body to detect and treat chronic health conditions. For example, an implantable cardioverter defibrillator, roughly the size of a small mobile phone, connects to a patient’s heart, monitors rhythm, and delivers an electric shock when it detects abnormal patterns. Once an IMD has been implanted, health care practitioners extract data, such as electrocardiogram readings, and modify device settings remotely, without invasive surgery. New generation ICDs can be contacted and reprogrammed via wireless radio signals using an external device called a “programmer.”

In 2008, approximately 350,000 pacemakers and 140,000 ICDs were implanted in the United States, according to a forecast on the implantable medical device market published earlier this year.7 Nation-wide demand for all IMDs is projected to increase 8.3 percent annually to $48 billion by 2014, the report says, as “improvements in safety and performance properties …enable ICDs to recapture growth opportunities lost over the past year to product recall.”8 Cardiac implants in the U.S. will increase 7.3 percent annually, the article predicts, to $16.7 billion in 2014, and pacing devices will remain the top-selling group in this category.9

Though the surge in IMD treatment over the past decade has had undeniable health benefits, device failures have also had fatal consequences. From 1997 to 2003, an estimated 400,000 to 450,000 ICDs were implanted world-wide and the majority of the procedures took place in the United States. At least 212 deaths from device failures in five different brands of ICD occurred during this period, according to a study of the adverse events reported to the FDA conducted by cardiologists from the Minneapolis Heart Institute Foundation.10

Research indicates that as IMD usage grows, the frequency of potentially fatal software glitches, accidental device malfunctions, and the possibility of malicious attacks will grow. While there has yet to be a documented incident in which the source code of a medical device was breached for malicious purposes, a 2008 study led by software engineer and security expert Kevin Fu proved that it is possible to interfere with an ICD that had passed the FDA’s premarket approval process and been implanted in hundreds of thousands of patients. A team of researchers from three universities partially reverse-engineered the communications protocol of a 2003-model ICD and launched several radio-based software attacks from a short distance. Using low-cost, commercially available equipment to bypass the device programmer, the researchers were able to extract private data stored inside the ICD such as patients’ vital signs and medical history; “eavesdrop” on wireless communication with the device programmer; reprogram the therapy settings that detect and treat abnormal heart rhythms; and keep the device in “awake” mode in order to deplete its battery, which can only be replaced with invasive surgery.

In one experimental attack conducted in the study, researchers were able to first disable the ICD to prevent it from delivering a life-saving shock and then direct the same device to deliver multiple shocks averaging 137.7 volts that would induce ventricular fibrillation in a patient. The study concluded that there were no “technological mechanisms in place to ensure that programmers can only be operated by authorized personnel.” Fu’s findings show that almost anyone could use store-bought tools to build a device that could “be easily miniaturized to the size of an iPhone and carried through a crowded mall or subway, sending its heart-attack command to random victims.”11

The vulnerabilities Fu’s lab exploited are the result of the very same features that enable the ICD to save lives. The model breached in the experiment was designed to immediately respond to reprogramming instructions from health-care practitioners, but is not equipped to distinguish whether treatment requests originate from a doctor or an adversary. An earlier paper co-authored by Fu proposed a solution to the communication-security paradox. The paper recommends the development of a wearable “cloaker” for IMD-patients that would prevent anyone but pre-specified, authorized commercial programmers from interacting with the device. In an emergency situation, a doctor with a previously unauthorized commercial programmer would be able to enable emergency access to the IMD by physically removing the cloaker from the patient.12
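To make concrete what “distinguishing a doctor from an adversary” involves at the message level, here is a minimal sketch of authenticating a command with a shared secret key and a message counter. It is a generic textbook technique (HMAC), not the protocol of any real ICD and not the cloaker design proposed by Fu’s co-authored paper; the function names, the command string and the idea of a pre-shared key are all assumptions made for illustration.

```python
import hashlib
import hmac


def sign_command(key: bytes, counter: int, command: bytes) -> bytes:
    """Tag a command with HMAC-SHA256 over a message counter and the payload."""
    message = counter.to_bytes(8, "big") + command
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_command(key: bytes, last_counter: int, counter: int,
                   command: bytes, tag: bytes) -> bool:
    """Accept only commands with a valid tag and a counter newer than the last one."""
    if counter <= last_counter:  # rejects replayed or stale messages
        return False
    expected = sign_command(key, counter, command)
    return hmac.compare_digest(expected, tag)  # constant-time comparison


# Hypothetical usage: the key and command text are made up.
key = b"\x01" * 32
tag = sign_command(key, 1, b"SET_PACING_RATE 60")
accepted = verify_command(key, 0, 1, b"SET_PACING_RATE 60", tag)
```

A device that checked messages this way would ignore commands from anyone without the key, which is exactly why the emergency-access problem described above arises: a doctor without the key is locked out too.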

Though the adversarial conditions demonstrated in Fu’s studies were hypothetical, two early incidents of malicious hacking underscore the need to address the threat software liabilities pose to the security of IMDs. In November 2007, a group of attackers infiltrated the Coping with Epilepsy website and planted flashing computer animations that triggered migraine headaches and seizures in photosensitive site visitors.13 A year later, malicious hackers mounted a similar attack on the Epilepsy Foundation website.14

Ample evidence of software vulnerabilities in other common IMD treatments also indicates that the worst-case scenario envisioned by Fu’s research is not unfounded. From 1983 to 1997 there were 2,792 quality problems that resulted in recalls of medical devices, 383 of which were related to computer software, according to a 2001 study analyzing FDA reports of the medical devices that were voluntarily recalled by manufacturers over a 15-year period.15 Cardiology devices accounted for 21 percent of the IMDs that were recalled. Authors Dolores R. Wallace and D. Richard Kuhn discovered that 98 percent of the software failures they analyzed would have been detected through basic scientific testing methods. While none of the failures they researched caused serious personal injury or death, the paper notes that there was not enough information to determine the potential health consequences had the IMDs remained in service.

Nearly 30 percent of the software-related recalls investigated in the report occurred between 1994 and 1996. “One possibility for this higher percentage in later years may be the rapid increase of software in medical devices. The amount of software in general consumer products is doubling every two to three years,” the report said.

As more individual IMDs are designed to automatically communicate wirelessly with physicians’ offices, hospitals, and manufacturers, routine tasks like reprogramming, data extraction, and software updates may spur even more accidental software glitches that could compromise the security of IMDs.

The FDA launched an “Infusion Pump Improvement Initiative” in April 2010, after receiving thousands of reports of problems associated with the use of infusion pumps that deliver fluids such as insulin and chemotherapy medication to patients electronically and mechanically.16 Between 2005 and 2009, the FDA received approximately 56,000 reports of adverse events related to infusion pumps, including numerous cases of injury and death. The agency analyzed the reports it received during the period in a white paper published in the spring of 2010 and found that the most common types of problems reported were associated with software defects or malfunctions, user interface issues, and mechanical or electrical failures. (The FDA said most of the pumps covered in the report are operated by a trained user, who programs the rate and duration of fluid delivery through a built-in software interface.) During the period, 87 infusion pumps were recalled due to safety concerns, 14 of which were characterized as “Class I” – situations in which there is a reasonable probability that use of the recalled device will cause serious adverse health consequences or death. Software defects led to over- and under-infusion and caused pre-programmed alarms on pumps to fail in emergencies or activate in the absence of a problem. In one instance a “key bounce” caused an infusion pump to occasionally register one keystroke (e.g., a single zero, “0”) as multiple keystrokes (e.g., a double zero, “00”), causing an inappropriate dosage to be delivered to a patient. Though the report does not apply to implantable infusion pumps, it demonstrates the prevalence of software-related malfunctions in medical device software and the flexibility of the FDA’s pre-market approval process.
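The “key bounce” failure described above belongs to a well-known class of input bugs: one physical press produces several closely spaced signals, and software that counts each of them sees “00” where the user typed “0”. The sketch below shows one common mitigation, time-based debouncing. The `Debouncer` class, the `decode` helper and the 50 ms window are assumptions chosen for illustration; they say nothing about how any actual infusion pump firmware works or was corrected.

```python
class Debouncer:
    """Collapse repeated events for the same key that arrive too close together."""

    def __init__(self, window_ms: int = 50):  # window length is illustrative
        self.window_ms = window_ms
        self._last_seen: dict[str, int] = {}

    def accept(self, key: str, timestamp_ms: int) -> bool:
        """Return True if this event should count as a new keystroke."""
        last = self._last_seen.get(key)
        # Record every event, so a long burst of bounces keeps being suppressed.
        self._last_seen[key] = timestamp_ms
        return last is None or timestamp_ms - last >= self.window_ms


def decode(events: list[tuple[str, int]], window_ms: int = 50) -> str:
    """Turn raw (key, timestamp_ms) events into the intended key sequence."""
    debouncer = Debouncer(window_ms)
    return "".join(key for key, t in events if debouncer.accept(key, t))


# A "0" that bounces 3 ms after the first contact is counted once.
raw_events = [("1", 0), ("0", 200), ("0", 203), ("0", 900)]
entered = decode(raw_events)
```

With these made-up events, the naive reading would be “1000”, while the debounced reading is “100”. In a dosage field that is a tenfold difference, which is why a defect this small matters.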

In order to facilitate the early detection and correction of any design defects, the FDA has begun offering manufacturers “the option of submitting the software code used in their infusion pumps for analysis by agency experts prior to premarket review of new or modified devices.” It is also working with third-party researchers to develop “an open-source software safety model and reference specifications that infusion pump manufacturers can use or adapt to verify the software in their devices.”

Though the voluntary initiative appears to be an endorsement of the safety benefits of FOSS and a step in the right direction, it does not address the overall problem of software insecurity since it is not mandatory.

III Why Free Software is More Secure

“Continuous and broad peer-review, enabled by publicly available source code, improves software reliability and security through the identification and elimination of defects that might otherwise go unrecognized by the core development team. Conversely, where source code is hidden from the public, attackers can attack the software anyway …. Hiding source code does inhibit the ability of third parties to respond to vulnerabilities (because changing software is more difficult without the source code), but this is obviously not a security advantage. In general, ‘Security by Obscurity’ is widely denigrated.” — Department of Defense (DoD) FAQ’s response to question: “Doesn’t Hiding Source Code Automatically Make Software More Secure?”17

The DoD indicates that FOSS has been central to its Information Technology (IT) operations since the mid-1990’s, and, according to some estimates, one-third to one-half of the software currently used by the agency is open source.18 The U.S. Office of Management and Budget issued a memorandum in 2004, which recommends that all federal agencies use the same procurement procedures for FOSS as they would for proprietary software.19 Other public sector agencies, such as the U.S. Navy, the Federal Aviation Administration, the U.S. Census Bureau and the U.S. Patent and Trademark Office have been identified as recognizing the security benefits of publicly auditable source code.20

To understand why free and open source software has become a common component in the IT systems of so many businesses and organizations that perform life-critical or mission-critical functions, one must first accept that software bugs are a fact of life. The Software Engineering Institute estimates that an experienced software engineer produces approximately one defect for every 100 lines of code.21 Based on this estimate, even if most of the bugs in a modest, one million-line code base are fixed over the course of a typical program life cycle, approximately 1,000 bugs would remain.
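The arithmetic behind that estimate can be spelled out. The snippet below uses the one-defect-per-100-lines figure quoted above; the 90 percent fix rate is not stated in the text but is what 1,000 remaining bugs out of 10,000 introduced implies. The function names are only illustrative.

```python
def defects_introduced(lines_of_code: int, lines_per_defect: int = 100) -> int:
    """Expected defects at one defect per `lines_per_defect` lines of code."""
    return lines_of_code // lines_per_defect


def implied_fix_rate(introduced: int, remaining: int) -> float:
    """Fraction of defects that must have been fixed to leave `remaining`."""
    return 1 - remaining / introduced


introduced = defects_introduced(1_000_000)        # one million-line code base
fix_rate = implied_fix_rate(introduced, 1_000)    # 1,000 bugs remain
```

Here `introduced` is 10,000 and `fix_rate` works out to 0.9, so even a project that eliminates nine out of ten defects still ships about a thousand.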

In its first “State of Software Security” report released in March 2010, the private software security analysis firm Veracode reviewed the source code of 1,591 software applications voluntarily submitted by commercial vendors, businesses, and government agencies.22 Regardless of program origins, Veracode found that 58 percent of all software submitted for review did not meet the security assessment criteria the report established.23 Based on its findings, Veracode concluded that “most software is indeed very insecure …[and] more than half of the software deployed in enterprises today is potentially susceptible to an application layer attack similar to that used in the recent …Google security breaches.”24

Though open source applications had almost as many source code vulnerabilities upon first submission as proprietary programs, researchers found that they contained fewer potential backdoors than commercial or outsourced software and that open source project teams remediated security vulnerabilities within an average of 36 days of the first submission, compared to 48 days for internally developed applications and 82 days for commercial applications.25 Not only were bugs patched the fastest in open source programs, but the quality of remediation was also higher than commercial programs.26

Veracode’s study confirms the research and anecdotal evidence into the security benefits of open source software published over the past decade. According to the web-security analysis site SecurityPortal, vulnerabilities took an average of 11.2 days to be spotted in Red Hat/Linux systems with a standard deviation of 17.5 compared to an average of 16.1 days with a standard deviation of 27.7 in Microsoft programs.27

Sun Microsystems’ COO Bill Vass summed up the most common case for FOSS in a blog post published in April 2009: “By making the code open source, nothing can be hidden in the code,” Vass wrote. “If the Trojan Horse was made of glass, would the Trojans have rolled it into their city? NO.”28

Vass’ logic is backed up by numerous research papers and academic studies that have debunked the myth of security through obscurity and advanced the “more eyes, fewer bugs” thesis. Though it might seem counterintuitive, making source code publicly available for users, security analysts, and even potential adversaries does not make systems more vulnerable to attack in the long-run. To the contrary, keeping source code under lock-and-key is more likely to hamstring “defenders” by preventing them from finding and patching bugs that could be exploited by potential attackers to gain entry into a given code base, whether or not access is restricted by the supplier.29 “In a world of rapid communications among attackers where exploits are spread on the Internet, a vulnerability known to one attacker is rapidly learned by others,” reads a 2006 article comparing open source and proprietary software use in government systems.30 “For Open Source, the next assumption is that disclosure of a flaw will prompt other programmers to improve the design of defenses. In addition, disclosure will prompt many third parties — all of those using the software or the system — to install patches or otherwise protect themselves against the newly announced vulnerability. In sum, disclosure does not help attackers much but is highly valuable to the defenders who create new code and install it.”

Academia and internet security professionals appear to have reached a consensus that open, auditable source code gives users the ability to independently assess the exposure of a system and the risks associated with using it; enables bugs to be patched more easily and quickly; and removes dependence on a single party, forcing software suppliers and developers to spend more effort on the quality of their code, as authors Jaap-Henk Hoepman and Bart Jacobs also conclude in their 2007 article, Increased Security Through Open Source.31

By contrast, vulnerabilities often go unnoticed, unannounced, and unfixed in closed source programs because the vendor, rather than users who have a higher stake in maintaining the quality of software, is the only party allowed to evaluate the security of the code base.32 Some studies have argued that commercial software suppliers have less of an incentive to fix defects after a program is initially released so users do not become aware of vulnerabilities until after they have caused a problem. “Once the initial version of [a proprietary software product] has saturated its market, the producer’s interest tends to shift to generating upgrades …Security is difficult to market in this process because, although features are visible, security functions tend to be invisible during normal operations and only visible when security trouble occurs.”33

The consequences of manufacturers’ failure to disclose malfunctions to patients and physicians have proven fatal in the past. In 2005, a 21-year-old man died from cardiac arrest after the ICD he wore short-circuited and failed to deliver a life-saving shock. The fatal incident prompted Guidant, the manufacturer of the flawed ICD, to recall four different device models they sold. In total 70,000 Guidant ICDs were recalled in one of the biggest regulatory actions of the past 25 years.34

Guidant came under intense public scrutiny when the patient’s physician Dr. Robert Hauser discovered that the company first observed the flaw that caused his patient’s device to malfunction in 2002, and even went so far as to implement manufacturing changes to correct it, but failed to disclose it to the public or health-care industry.

The body of research analyzed for this paper points to the same conclusion: security is not achieved through obscurity and closed source programs force users to forfeit their ability to evaluate and improve a system’s security. Though there is lingering debate over the degree to which end-users contribute to the maintenance of FOSS programs and how to ensure the quality of the patches submitted, most of the evidence supports our paper’s central assumption that auditable, peer-reviewed software is comparatively more secure than proprietary programs.

Programs have different standards to ensure the quality of the patches submitted to open source programs, but even the most open, transparent systems have established methods of quality control. Well-established open source software, such as the kind favored by the DoD and the other agencies mentioned above, cannot be infiltrated by “just anyone.” To protect the code base from potential adversaries and malicious patch submissions, large open source systems have a “trusted repository” that only certain, “trusted,” developers can directly modify. As an additional safeguard, the source code is publicly released, meaning not only are there more people policing it for defects, but more copies of each version of the software exist making it easier to compare new code.

IV The FDA Review Process and Legal Obstacles to Device Manufacturer Accountability

“Implanted medical devices have enriched and extended the lives of countless people, but device malfunctions and software glitches have become modern ‘diseases’ that will continue to occur. The failure of manufacturers and the FDA to provide the public with timely, critical information about device performance, malfunctions, and ’fixes’ enables potentially defective devices to reach unwary consumers.” — Capitol Hill Hearing Testimony of William H. Maisel, Director of Beth Israel Deaconess Medical Center, May 12, 2009.

The FDA’s Center for Devices and Radiological Health (CDRH) is responsible for regulating medical devices, but since the Medical Device Modernization Act (MDMA) was passed in 1997 the agency has increasingly ceded control over the pre-market evaluation and post-market surveillance of IMDs to their manufacturers.35 While the agency has been making strides towards the MDMA’s stated objective of streamlining the device approval process, the expedient regulatory process appears to have come at the expense of the CDRH’s broader mandate to “protect the public health in the fields of medical devices” by developing and implementing programs “intended to assure the safety, effectiveness, and proper labeling of medical devices.”36

Since the MDMA was passed, the FDA has largely deferred these responsibilities to the companies that sell these devices.37 The legislation effectively allowed the businesses selling critical devices to establish their own set of pre-market safety evaluation standards and determine the testing conducted during the review process. Manufacturers also maintain a large degree of discretion over post-market IMD surveillance. Though IMD-manufacturers are obligated to inform the FDA if they alert physicians to a product defect or if the device is recalled, they determine whether a particular defect constitutes a public safety risk.

“Manufacturers should use good judgment when developing their quality system and apply those sections of the QS regulation that are applicable to their specific products and operations,” reads section 21 of Quality Standards regulation outlined on the FDA’s website. “Operating within this flexibility, it is the responsibility of each manufacturer to establish requirements for each type or family of devices that will result in devices that are safe and effective, and to establish methods and procedures to design, produce, distribute, etc. devices that meet the quality system requirements. The responsibility for meeting these requirements and for having objective evidence of meeting these requirements may not be delegated even though the actual work may be delegated.”

By implementing guidelines such as these, the FDA has refocused regulation from developing and implementing programs in the field of medical devices that protect the public health to auditing manufacturers’ compliance with their own standards.

“To the FDA, you are the experts in your device and your quality programs,” Jeff Geisler wrote in a 2010 book chapter, Software for Medical Systems.38 “Writing down the procedures is necessary — it is assumed that you know best what the procedures should be — but it is essential that you comply with your written procedures.”

The elastic regulatory standards are a product of the 1976 amendment to the Food, Drug, and Cosmetic Act.39 The Amendment established three different device classes and outlined broad pre-market requirements that each category of IMD must meet depending on the risk it poses to a patient. Class I devices, whose failure would have no adverse health consequences, are not subject to a pre-market approval process. Class III devices that “support or sustain human life, are of substantial importance in preventing impairment of human health, or which present a potential, unreasonable risk of illness or injury,” such as IMDs, are subject to the most stringent FDA review process, Premarket Approval (PMA).40

By the FDA’s own admission, the original legislation did not account for the technological complexity of IMDs, but neither has subsequent regulation.41

In 2002, an amendment to the MDMA was passed that was intended to help the FDA “focus its limited inspection resources on higher-risk inspections and give medical device firms that operate in global markets an opportunity to more efficiently schedule multiple inspections,” the agency’s website reads.42

The legislation further eroded the CDRH’s control over pre-market approval data, along with the FDA’s capacity to respond to rapid changes in medical treatment and the introduction of increasingly complex devices for a broader range of diseases. The new provisions allowed manufacturers to pay certain FDA-accredited inspectors to conduct reviews during the PMA process in lieu of government regulators. It did not outline specific software review procedures for the agency to conduct or precise requirements that medical device manufacturers must meet before introducing a new product. “The regulation … provides some guidance [on how to ensure the reliability of medical device software],” Joe Bremner wrote of the FDA’s guidance on software validation.43 “Written in broad terms, it can apply to all medical device manufacturers. However, while it identifies problems to be solved or an end point to be achieved, it does not specify how to do so to meet the intent of the regulatory requirement.”

The death of 21-year-old Joshua Oukrop in 2005 due to the failure of a Guidant device has increased calls for regulatory reform at the FDA.44 In a paper published shortly after Oukrop’s death, his physician, Dr. Hauser, concluded that the FDA’s post-market ICD surveillance system is broken.45

After returning the failed Prizm 2 DR pacemaker to Guidant, Dr. Hauser learned that the company had known the model was prone to the same defect that killed Oukrop for at least three years. Since 2002, Guidant had received 25 different reports of failures in the Prizm model, three of which required rescue defibrillation. Though the company was sufficiently concerned about the problem to make manufacturing changes, Guidant continued to sell earlier models and failed to make patients and physicians aware that the Prizm was prone to electronic defects. The company claimed that disclosing the defect was inadvisable because the risk of infection during de-implantation surgery posed a greater threat to public safety than device failure. “Guidant’s statistical argument ignored the basic tenet that patients have a fundamental right to be fully informed when they are exposed to the risk of death no matter how low that risk may be perceived,” Dr. Hauser argued. “Furthermore, by withholding vital information, Guidant had in effect assumed the primary role of managing high-risk patients, a responsibility that belongs to physicians. The prognosis of our young, otherwise healthy patient for a long, productive life was favorable if sudden death could have been prevented.”

The FDA was also guilty of violating the principle of informed consent. In 2004, Guidant had reported two different adverse events to the FDA that described the same defect in the Prizm 2 DR models, but the agency also withheld the information from the public. “The present experience suggests that the FDA is currently unable to satisfy its legal responsibility to monitor the safety of market released medical devices like the Prizm 2 DR,” Dr. Hauser wrote, referring to the device whose failure resulted in his patient’s death. “The explanation for the FDA’s inaction is unknown, but it may be that the agency was not prepared for the extraordinary upsurge in ICD technology and the extraordinary growth in the number of implantations that has occurred in the past five years.”

While the Guidant recalls prompted increased scrutiny of the FDA’s medical device review process, it remains difficult to gauge the precise process of regulating IMD software or the public health risk posed by source code bugs, since neither doctors nor IMD users are permitted to access the code. Nonetheless, the information that does exist suggests that the pre-market approval process alone is not a sufficient consumer safeguard, since medical devices are less likely than drugs to have demonstrated clinical safety before they are marketed.46

An article published in the Journal of the American Medical Association (JAMA) studied the safety and effectiveness data in every PMA application the FDA reviewed from January 2000 to December 2007 and concluded that “premarket approval of cardiovascular devices by the FDA is often based on studies that lack strength and may be prone to bias.”47 Of the 78 high-risk device approvals analyzed in the paper, 51 were based on a single study.48

The JAMA study noted that the need to address the inadequacies of the FDA’s device regulation process has become particularly urgent since the Supreme Court changed the landscape of medical liability law with its ruling in Riegel v. Medtronic in February 2008. The Court held that the plaintiff Charles Riegel could not seek damages in state court from the manufacturer of a catheter that exploded in his leg during an angioplasty. “Riegel v. Medtronic means that FDA approval of a device preempts consumers from suing because of problems with the safety or effectiveness of the device, making this approval a vital consumer protection safeguard.”49

Since the FDA is a federal agency, its authority supersedes state law. Based on the concept of preemption, the Supreme Court held that damages actions permitted under state tort law could not be filed against device manufacturers deemed to be in compliance with the FDA, even in the event of gross negligence. The decision eroded one of the last legal recourses to protect consumers and hold IMD manufacturers accountable for the catastrophic failure of an IMD. Not only are the millions of people who rely on IMDs for their most life-sustaining bodily functions more vulnerable to software malfunctions than ever before, but they have little choice but to trust the devices’ manufacturers.

“It is clear that medical device manufacturers have responsibilities that extend far beyond FDA approval and that many companies have failed to meet their obligations,” William H. Maisel said in recent congressional testimony on the Medical Device Reform bill.50 “Yet, the U.S. Supreme Court ruled in their February 2008 decision, Riegel v. Medtronic, that manufacturers could not be sued under state law by patients harmed by product defects from FDA-approved medical devices …. [C]onsumers are unable to seek compensation from manufacturers for their injuries, lost wages, or health expenses. Most importantly, the Riegel decision eliminates an important consumer safeguard — the threat of manufacturer liability — and will lead to less safe medical devices and an increased number of patient injuries.”

In light of our research and the existing legal and regulatory limitations that prevent IMD users from holding medical device manufacturers accountable for critical software vulnerabilities, auditable source code is critical to minimize the harm caused by inevitable medical device software bugs.

V Conclusion

The Supreme Court’s decision in favor of Medtronic in 2008, increasingly flexible regulation of medical device software on the part of the FDA, and a spike in the level and scope of IMD usage over the past decade suggest a software liability nightmare on the horizon. We urge the FDA to introduce more stringent, mandatory standards to protect IMD-wearers from the potential adverse consequences of software malfunctions discussed in this paper. Specifically, we call on the FDA to require manufacturers of life-critical IMDs to publish the source code of medical device software so the public and regulators can examine and evaluate it. At the very least, we urge the FDA to establish a repository of medical device software running on implanted IMDs in order to ensure continued access to source code in the event of a catastrophic failure, such as the bankruptcy of a device manufacturer. While we hope regulators will require that the software of all medical devices, regardless of risk, be auditable, it is particularly urgent that these standards be included in the pre-market approval process of Class III IMDs. We hope this paper will also prompt IMD users, their physicians, and the public to demand greater transparency in the field of medical device software.

Notes

1Though software defects are never explicitly mentioned as the “Reason for Recall” in the alerts posted on the FDA’s website, the descriptions of device failures match those associated with source-code errors. List of Device Recalls, U.S. Food & Drug Admin., http://www.fda.gov/MedicalDevices/Safety/RecallsCorrectionsRemovals/ListofRecalls/default.htm (last visited Jul. 19, 2010).

2Medtronic recalled its Lifepak 15 Monitor/Defibrillator on March 4, 2010 due to failures that were almost certainly caused by software defects that caused the device to unexpectedly shut down and regain power on its own. The company admitted in a press release that it first learned that the recalled model was prone to defects eight years earlier and had submitted one “adverse event” report to the FDA. Medtronic, Physio-Control Field Correction to LIFEPAK 20/20e Defibrillator/Monitors, BusinessWire (Jul. 02, 2010, 9:00 AM), http://www.businesswire.com/news/home/20100702005034/en/Physio-Control-Field-Correction-LIFEPAK%C2%AE-2020e-Defibrillator-Monitors.html

3Quality Systems Regulation, U.S. Food & Drug Admin., http://www.fda.gov/MedicalDevices/default.htm (follow “Device Advice: Device Regulation and Guidance” hyperlink; then follow “Postmarket Requirements (Devices)” hyperlink; then follow “Quality Systems Regulation” hyperlink) (last visited Jul. 2010)

4Riegel v. Medtronic, Inc., 552 U.S. 312 (2008).

5The Software Freedom Law Center (SFLC) prefers the term Free and Open Source Software (FOSS) to describe software that can be freely viewed, used, and modified by anyone. In this paper, we sometimes use mixed terminology, including the term “open source” to maintain consistency with the articles cited.

6See Josie Garthwaite, Hacking the Car: Cyber Security Risks Hit the Road, Earth2Tech (Mar. 19, 2010, 12:00 AM), http://earth2tech.com.

7Sanket S. Dhruva et al., Strength of Study Evidence Examined by the FDA in Premarket Approval of Cardiovascular Devices, 302 J. Am. Med. Ass’n 2679 (2009).

8Freedonia Group, Cardiac Implants, Rep. Buyer, Sept. 2008, available at http://www.reportbuyer.com/pharma_healthcare/medical_devices/cardiac_implants.html.

9Id.

10Robert G. Hauser & Linda Kallinen, Deaths Associated With Implantable Cardioverter Defibrillator Failure and Deactivation Reported in the United States Food and Drug Administration Manufacturer and User Facility Device Experience Database, 1 HeartRhythm 399, available at http://www.heartrhythmjournal.com/article/S1547-5271%2804%2900286-3/.

11Charles Graeber, Profile of Kevin Fu, 33, TR35 2009 Innovator, Tech. Rev., http://www.technologyreview.com/TR35/Profile.aspx?trid=760 (last visited Jul. 9, 2010).

12See Tamara Denning et al., Absence Makes the Heart Grow Fonder: New Directions for Implantable Medical Device Security, Proceedings of the 3rd Conference on Hot Topics in Security (2008), available at http://www.cs.washington.edu/homes/yoshi/papers/HotSec2008/cloaker-hotsec08.pdf.

13Kevin Poulsen, Hackers Assault Epilepsy Patients via Computer, Wired News (Mar. 28, 2008), http://www.wired.com/politics/security/news/2008/03/epilepsy.

14Id.

15Dolores R. Wallace & D. Richard Kuhn, Failure Modes in Medical Device Software: An Analysis of 15 Years of Recall Data, 8 Int’l J. Reliability Quality Safety Eng’g 351 (2001), available at http://csrc.nist.gov/groups/SNS/acts/documents/final-rqse.pdf.

Tuesday, January 31. 2012

Build Up Your Phone’s Defenses Against Hackers

-----

Chuck Bokath would be terrifying if he were not such a nice guy. A jovial senior engineer at the Georgia Tech Research Institute in Atlanta, Mr. Bokath can hack into your cellphone just by dialing the number. He can remotely listen to your calls, read your text messages, snap pictures with your phone’s camera and track your movements around town — not to mention access the password to your online bank account.

And while Mr. Bokath’s job is to expose security flaws in wireless devices, he said it was “trivial” to hack into a cellphone. Indeed, the instructions on how to do it are available online (the link most certainly will not be provided here). “It’s actually quite frightening,” said Mr. Bokath. “Most people have no idea how vulnerable they are when they use their cellphones.”

Technology experts expect breached, infiltrated or otherwise compromised cellphones to be the scourge of 2012. The smartphone security company Lookout Inc. estimates that more than a million phones worldwide have already been affected. But there are ways to reduce the likelihood of getting hacked — whether by a jealous ex or Russian crime syndicate — or at least minimize the damage should you fall prey.